Gabe Wilson MD

@Gabe__MD

Emergency Physician - East Texas, home is NYC, Tour Medical Dir - Houston, Dallas Symphonies, Ex-Regional Director Envision, Juilliard-trained Violinist, RE/EVs

Oral, easy-to-take GLP-1 agonists are going to transform the market even more than injectables have, because being easy to take makes them broadly adoptable. The implications are immense:
• Fewer hospital admissions (11% lower for heart failure; 20-24% lower for stroke and MI among patients with diabetes)
• Less money spent on food and possibly alcohol (5-10% lower grocery spend per user)
• Lower rates of hypertension, diabetes, and high lipids
• 10-15% fewer obesity surgeries
• 12% reduction in all-cause mortality
• Fewer kidney disease patients needing dialysis

Orforglipron, an Oral Small-Molecule GLP-1 Receptor Agonist for Obesity Treatment | New England Journal of Medicine
nejm.org/doi/full/10.10…

(I have no financial interest in any pharmaceutical company. This will actually decrease the number of patients we see in the ER, which will make hospitals tougher to finance.)

NEWS: Waymo has just announced that its autonomous fleet had driven a combined 170 million rider-only miles (no human driver on board) as of Dec 2025, up from 127 million in Sept 2025. That works out to roughly 467,000 miles per day on average over that period. Waymo also released updated safety stats.

Locations:
• LA: 37.8 million
• SF: 53.5 million
• PHX: 68.6 million
• Austin: 10.7 million

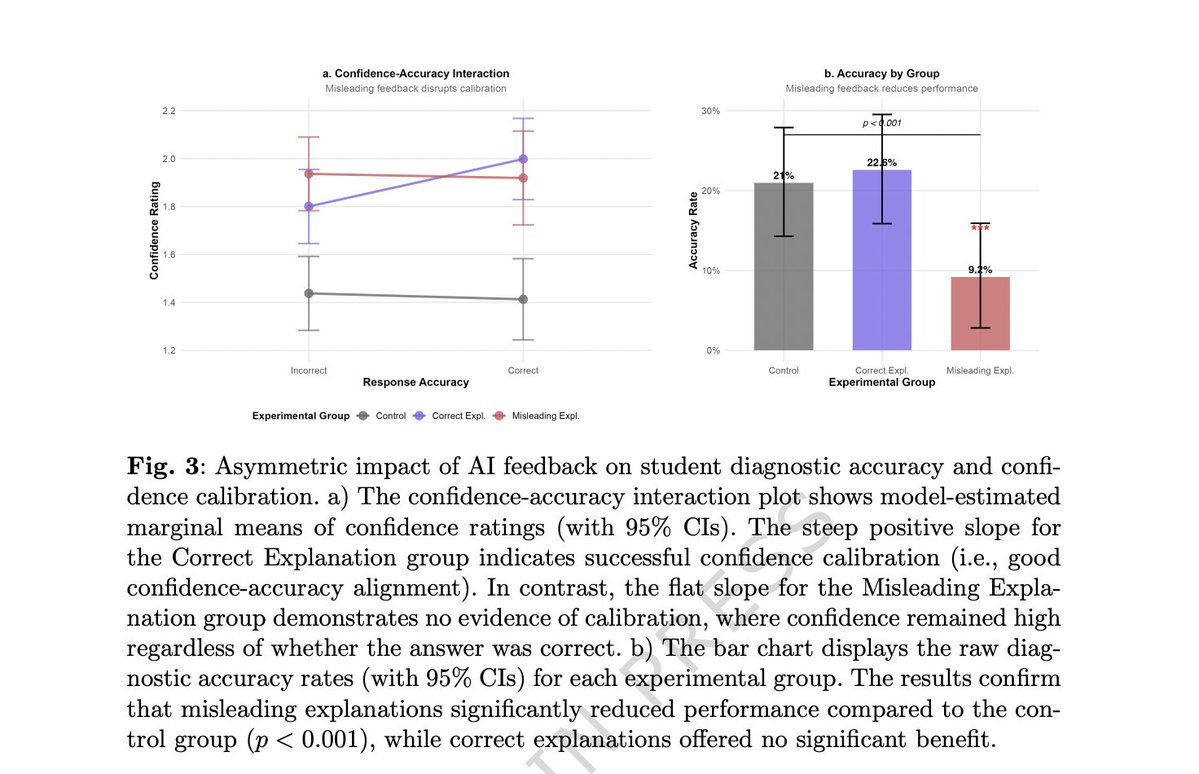
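The 467,000 miles/day figure follows from the difference between the two cumulative totals. Below is a minimal Python sketch of that arithmetic, assuming the September and December snapshots are about 92 days apart (the post gives no exact dates). The constant and function names are hypothetical, chosen for illustration only.

```python
# Cumulative rider-only miles reported in the post, in millions.
SEPT_2025_MILES_M = 127
DEC_2025_MILES_M = 170

# Assumption (not from the post): the two snapshots are roughly 92 days apart.
DAYS_BETWEEN = 92

# Per-city totals reported in the post, in millions of miles.
CITY_MILES_M = {"LA": 37.8, "SF": 53.5, "PHX": 68.6, "Austin": 10.7}


def average_daily_miles(start_m: float, end_m: float, days: int) -> float:
    """Average miles driven per day between two cumulative snapshots given in millions."""
    return (end_m - start_m) * 1_000_000 / days


if __name__ == "__main__":
    daily = average_daily_miles(SEPT_2025_MILES_M, DEC_2025_MILES_M, DAYS_BETWEEN)
    # Round to the nearest thousand, as in the post.
    print(f"~{round(daily, -3):,.0f} miles/day")  # ~467,000 miles/day
    # Sum of the four listed cities, rounded to one decimal to hide float noise.
    print(f"City total: {round(sum(CITY_MILES_M.values()), 1)} million")  # 170.6 million
```

The result is sensitive to the assumed window: 91 days gives about 472,500 miles/day and 93 days about 462,400. The four listed cities sum to about 170.6 million, in line with the rounded 170 million headline.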

AI in 2026 cannot palpate an abdomen, intubate a patient, feel a thyroid nodule, test a patellar reflex, reduce a dislocated shoulder, perform a colonoscopy, or deliver a baby. That is not a temporary limitation. It is structural.

When we scored AI capability across seven clinical dimensions for 240 visit reasons in 20 specialties, the physical/procedural dimension averaged 1.5 out of 5. The cognitive dimensions averaged 3.0 to 4.1. No specialty broke 2.0 on procedure. Not one.

History-taking averaged 4.1, approaching specialist level. Patient communication averaged 3.6, and follow-up management 3.5. Documentation, which runs through every workflow component, is arguably where AI already outperforms most physicians in speed and completeness.

The 2.6-point gap between the cognitive ceiling (4.1) and the procedural wall (1.5) is not closing with larger language models. Language models do not have hands. Closing that gap requires robotics, haptic sensing, and physical infrastructure at clinical scale, none of which exists beyond narrow research applications.

This matters for workforce planning. The specialties in Tier 3 of our ranking (Ophthalmology, General Surgery, ENT, Emergency Medicine, Orthopedic Surgery, Anesthesiology) are not there because AI cannot reason about their clinical problems. It can. They are in Tier 3 because the physician's physical presence is the treatment. You cannot automate a knee replacement. You cannot automate airway rescue.

The specialties in Tier 1 (Radiology, Internal Medicine, Dermatology, Family Medicine, Endocrinology) are there because their workflows are dominated by cognition, synthesis, and documentation, with physical intervention consuming a smaller share of total effort.

The implication is straightforward. AI's near-term value is not about replacing any specialty. It is about absorbing the cognitive and administrative burden that consumes 40-60% of every physician's workday across every specialty.
The procedural work stays human. The paperwork does not have to. Health systems investing in AI as a documentation, intake, and decision-support engine will see returns now. Health systems waiting for AI to replace proceduralists will be waiting a long time. Post 3 of a series. Post 1: consensus ranking. Post 2: adversarial reconciliation methodology.
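The 2.6-point gap cited in this post is the highest cognitive average minus the physical/procedural average. The short Python sketch below reproduces that arithmetic from the reported numbers only. The dictionary keys are hypothetical labels. The post reports numbers for just four of the seven dimensions (the dimension at the low end of the 3.0 to 4.1 range is unnamed), so this is not the authors' scoring method.

```python
# Hypothetical labels for the dimensions whose averages (1-5 scale) the post reports.
# Only four of the seven dimensions have numbers in the post.
REPORTED_AVERAGES = {
    "history_taking": 4.1,
    "patient_communication": 3.6,
    "follow_up_management": 3.5,
    "physical_procedural": 1.5,
}
COGNITIVE_DIMENSIONS = ["history_taking", "patient_communication", "follow_up_management"]


def cognitive_procedural_gap(ceiling: float, procedural: float) -> float:
    """Difference between the best cognitive average and the procedural average."""
    # Round to one decimal to match the post's precision and hide float noise.
    return round(ceiling - procedural, 1)


# The "cognitive ceiling" is the highest cognitive average (history-taking, 4.1).
COGNITIVE_CEILING = max(REPORTED_AVERAGES[d] for d in COGNITIVE_DIMENSIONS)

if __name__ == "__main__":
    gap = cognitive_procedural_gap(COGNITIVE_CEILING, REPORTED_AVERAGES["physical_procedural"])
    print(gap)  # 2.6
```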
