Ariel Goldstein retweeted

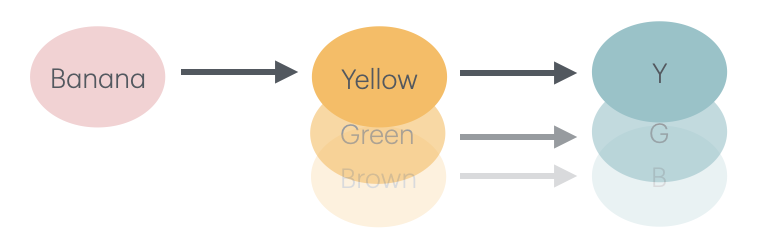

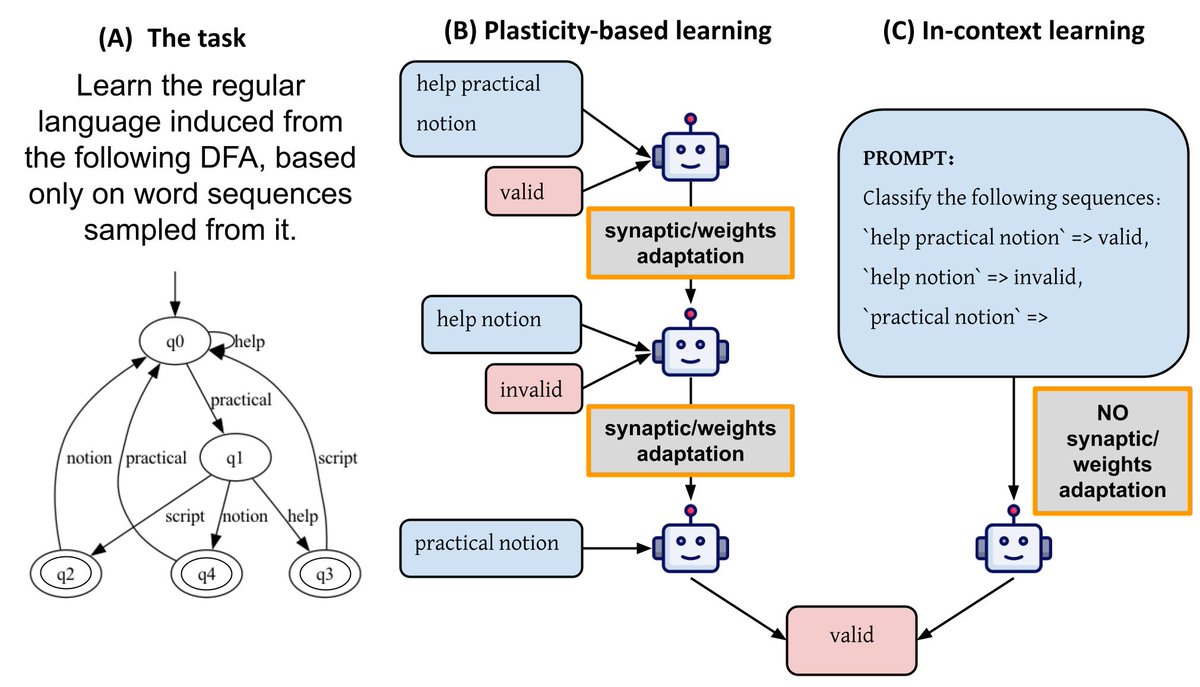

Do LLMs have motivation?

Motivation is a key lens for explaining human behavior.

As LLM behavior becomes more human-like, a natural question arises: could motivation help us understand model behavior too?

With @AsaelSklar @GoldsteinYAriel @roireichart

📄 Paper: arxiv.org/pdf/2603.14347

1/5