Greg Tarr

178 posts

@Greg_Tarr

ai researcher, cto @markovrobotics

AGI, Inc. is now the global leader on the AndroidWorld benchmark, with state-of-the-art verified performance of 97.4%. This is a huge milestone for Android use, and just a sneak preview of what's coming: bringing trustworthy, reliable agents to every screen 🚀

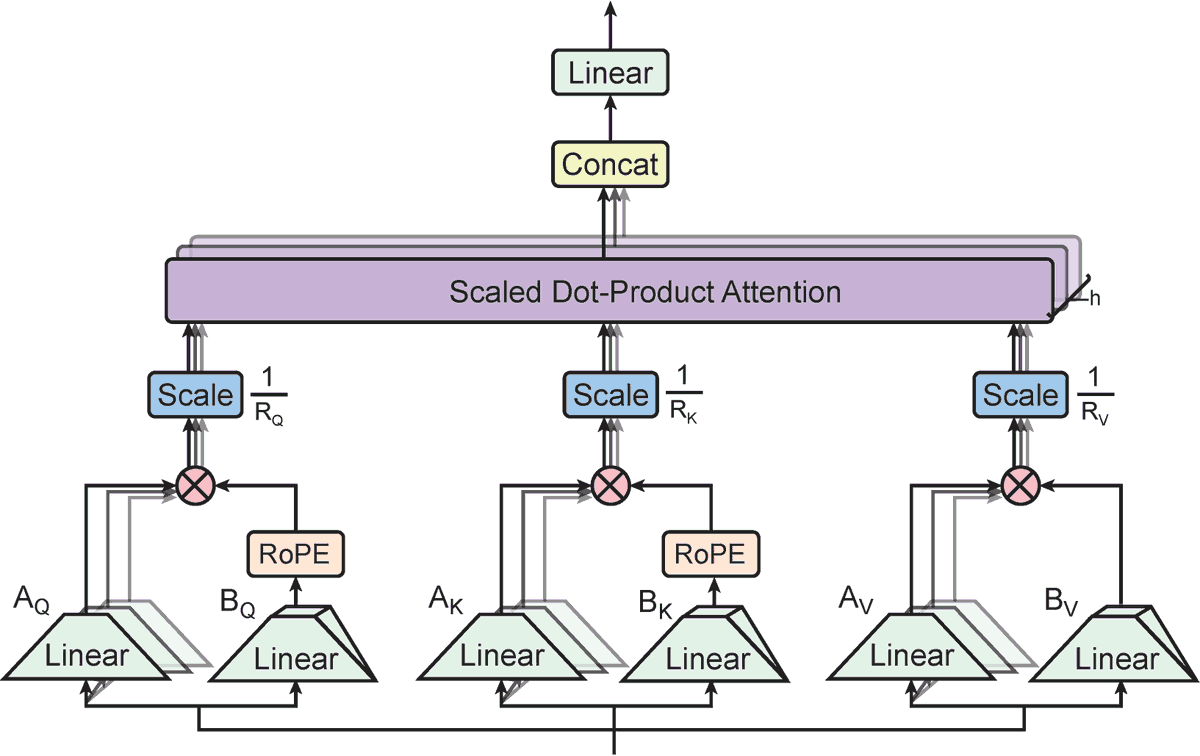

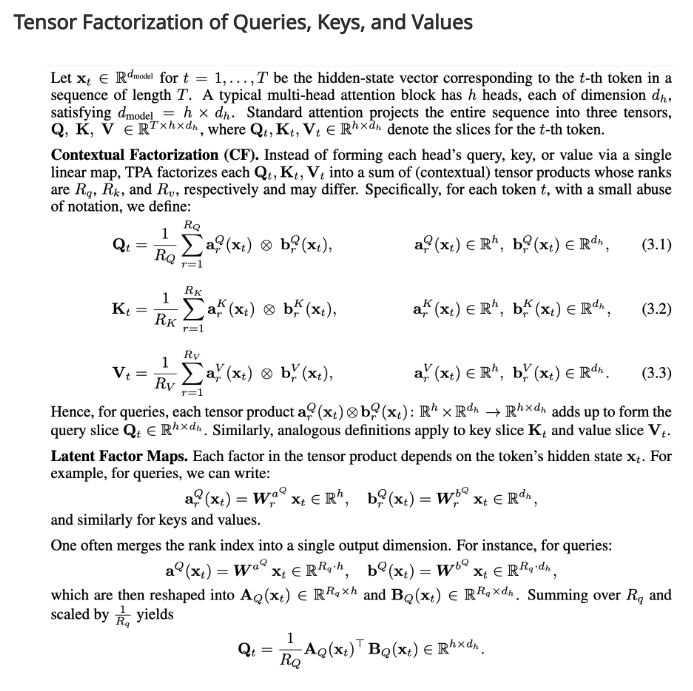

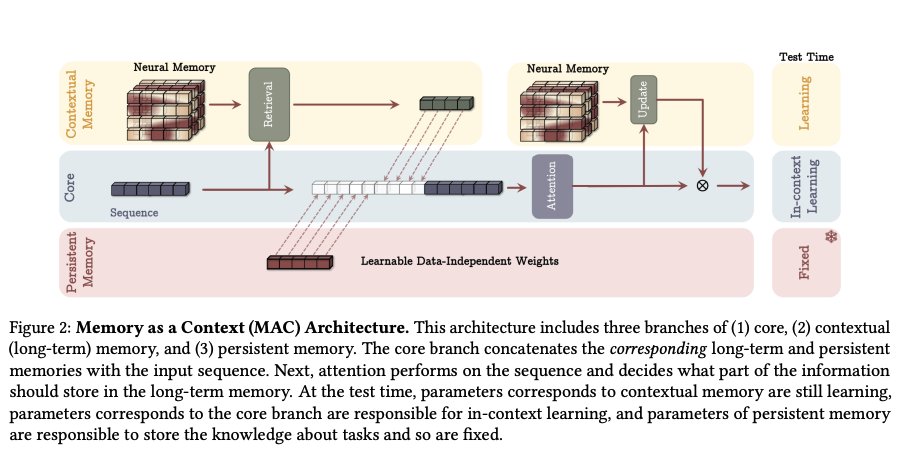

I think the best modern Transformer+++ design (diff transformer, gated deltanet, sparse MoE, NTP+n, some memory etc etc) might be only one OOM away in efficiency from the Optimal Architecture, unless we cheat and search for strong humanlike inductive biases (which isn't The Way).

The Colab A100 experience is pretty awful for prototyping. Sessions take a lot of time to connect. Are there other services out there that offer a notebook + H100 experience without being DIY?