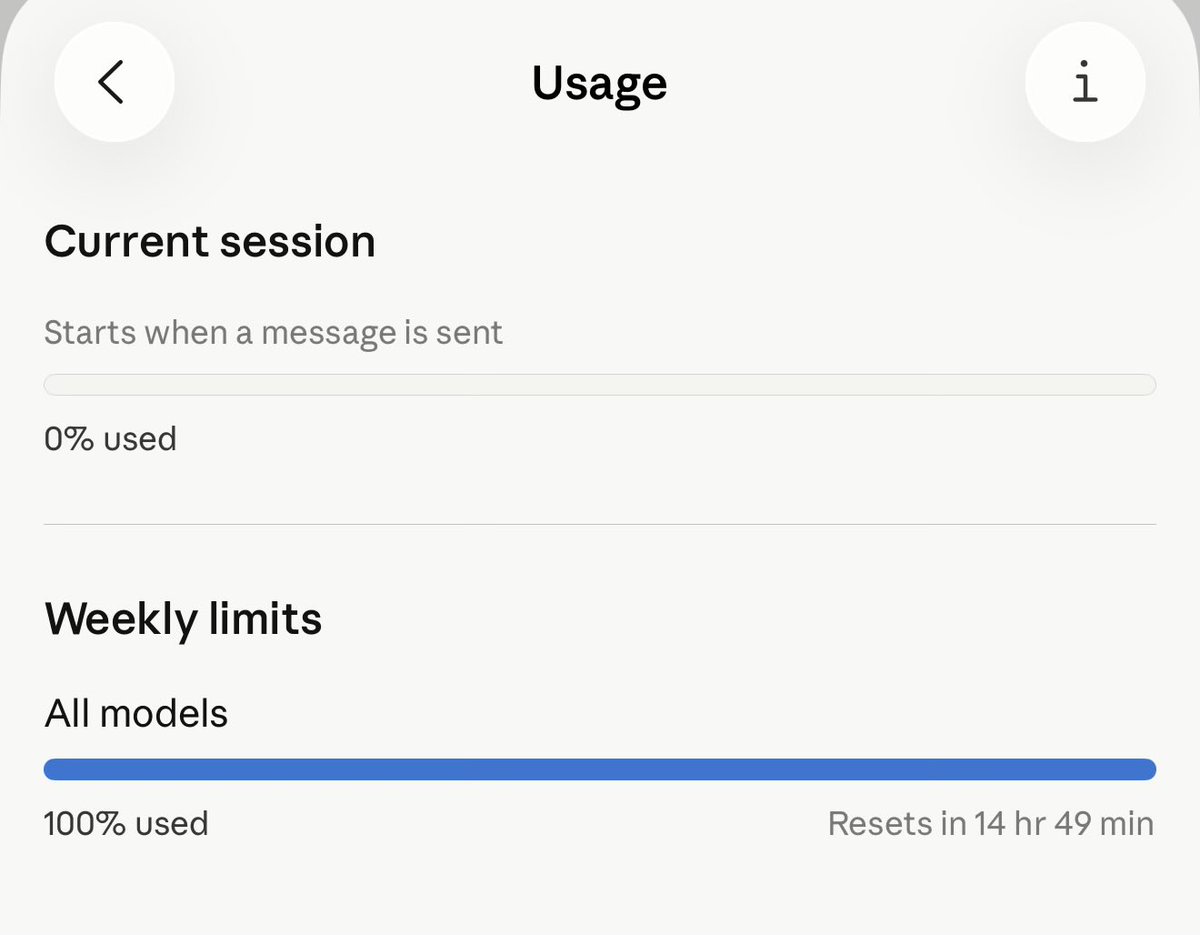

@iambchoor @ClaudeDevs Same for me still. And if you chat to the support bot it cuts you off.

Gregor

230 posts

@GregorMakes

Current hyperfocus in tinkering: Healthtech, Apps, Mobility, AI. I am home in "Layer 5". Projects: - LongResearch - https://t.co/9G3M1I0CSb - Infrahub (B2G) - Kalo

Excited to announce our results from the first ever survey on GLP-1s for Long COVID and ME! Patient outcomes showed two extremes: while 53% improved, 28% experienced worsening, some long after their last dose. Full analysis here and in the tweets below: lcmedata.org/treatments/glp…

New in Claude Code: agent view. One list of all your sessions, available today as a research preview.

I built a whole distributed caching layer over gh. Still run into limits.