Pinned Tweet

GrowAIHub

18.8K posts

@GrowAIHub

Turning AI noise into clarity. Tools • Threads • Growth. Helping creators & devs win the future.

California, USA · Joined March 2022

101 Following · 28K Followers

Students don’t struggle with ideas.

They struggle with getting them out fast enough.

Typing slows thinking.

Editing breaks flow.

Typeless flips that.

You speak once

and it turns into clear, structured, polished writing instantly.

Notes. Essays. Emails. Applications.

All without the keyboard bottleneck.

This isn’t voice-to-text.

It’s thought-to-writing.

50% off for students makes this a no-brainer.

Huang Song @huang_song_

Students now get 50% off Typeless Pro with a school email. Typeless is not just voice-to-text. It turns your messy thoughts into clear, polished writing wherever you write. Notes, emails, essays, applications, messages - all by speaking. The keyboard is a bottleneck. Typeless is the way out.

Everyone is trying to make AI think better.

Engramme is making AI remember.

That shift changes everything.

From generating answers

to understanding your history.

From reacting to prompts

to anticipating your needs.

This isn’t an upgrade.

It’s a new layer in the AI stack.

Search. Language. Now Memory.

#Engramme #AI #LargeMemoryModels

Engramme @EngrammeHQ

Persistent memory is the Achilles heel of AI. Engramme’s Large Memory Models (LMMs) empower every app with persistent memory. Google solved search. OpenAI solved language. Engramme solved memory. Join beta: engramme.com/signup

I just generated this cinematic scene using GPT Image 2 x Seedance 2.0 on the Pollo AI mobile app.

This is insane. 🔥

The final polished output from a single idea without switching tools or breaking flow.

One prompt → visual generation → motion video → ready-to-post content.

This is not just faster editing. It's a full content pipeline inside AI.

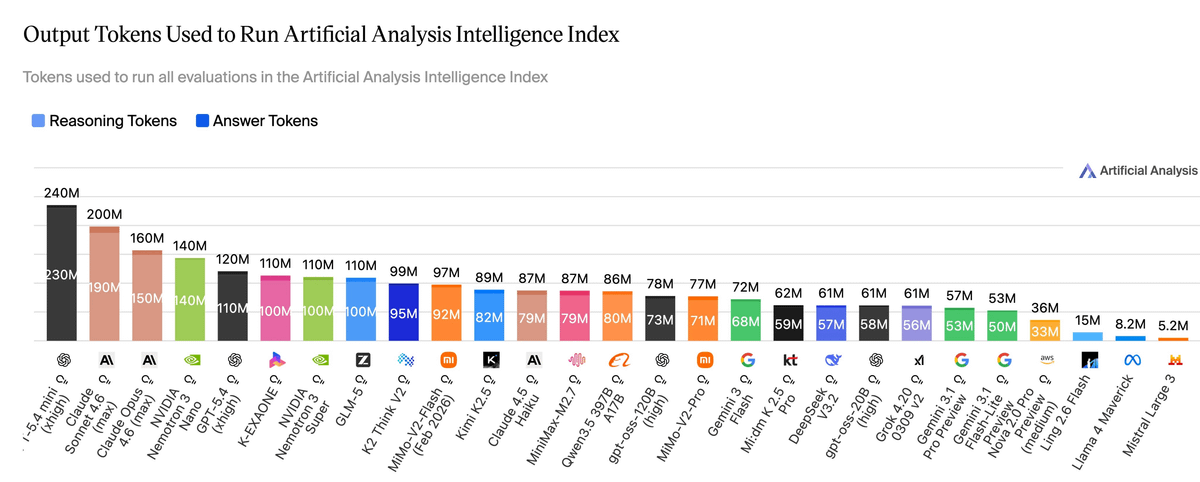

The AI race isn’t purely about intelligence anymore.

It’s about what that intelligence costs to run.

For the past two years, the conversation has centred on capability: benchmarks, reasoning scores, multimodal performance. All important, but not what determines real-world usage.

Cost does.

More specifically: token efficiency.

Not just how powerful a model is, but how much useful work it produces per token, at scale. In production, models rarely fail due to lack of intelligence.

They fail because they’re too expensive.

Token usage compounds. Small inefficiencies become real cost centres. And suddenly, the “best” model isn’t viable because it’s bleeding the company’s money, no matter how cutting edge it is.
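A rough sketch of how that compounding works, assuming a flat per-token price. Every number here (tokens per request, daily request volume, price per million tokens) is a hypothetical placeholder, not a figure from any provider:

```python
def monthly_cost(tokens_per_request: int, requests_per_day: int,
                 usd_per_million_tokens: float, days: int = 30) -> float:
    """Estimate monthly spend in USD under a flat per-token price (assumed)."""
    total_tokens = tokens_per_request * requests_per_day * days
    return total_tokens / 1_000_000 * usd_per_million_tokens

# Hypothetical workload: 100,000 requests a day at $1 per million tokens.
lean = monthly_cost(1_000, 100_000, 1.0)      # 1,000 tokens per request
wasteful = monthly_cost(1_200, 100_000, 1.0)  # 20% more tokens per request

print(f"lean: ${lean:,.0f}/month")
print(f"wasteful: ${wasteful:,.0f}/month")
print(f"extra spend from 20% token overhead: ${wasteful - lean:,.0f}/month")
```

Under these made-up inputs, a 20% per-request overhead carries straight through to the monthly bill, and it grows in step with traffic.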

Which is why you need to pay attention to AntLingAGI’s latest move. It’s the future.

No big launch. No benchmark parade. Just a quiet deployment on OpenRouter under an alias: “Elephant.”

I’ve been keeping an eye on it and using it for quite some time now.

No staged demos. No controlled environments. Just real traffic and feedback.

A different kind of validation.

The key here is efficiency.

It is hard to overstate how much token economics matter once cost enters the picture. For a trillion-parameter model? It’s staggering.

For context: GPT-5.4 Mini High needed 240 million tokens to run all the evaluations on the Artificial Analysis Intelligence Index. A step below that was Anthropic, which used 200 million tokens.

Ling-2.6-flash used 15 million. That is near miraculous.
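To make the gap concrete, here is a quick back-of-the-envelope calculation using only the token counts quoted above. The dictionary keys are just labels for this sketch:

```python
# Token counts as quoted in the post, in millions of tokens.
tokens_used_millions = {
    "GPT-5.4 Mini High": 240,
    "Anthropic": 200,
    "Ling-2.6-flash": 15,
}

def ratio_vs(baseline: str, counts: dict) -> dict:
    """Return how many times more tokens each entry used than the baseline."""
    base = counts[baseline]
    return {name: used / base for name, used in counts.items()}

ratios = ratio_vs("Ling-2.6-flash", tokens_used_millions)
for name, r in ratios.items():
    print(f"{name}: {r:.1f}x the tokens of Ling-2.6-flash")
```

On these figures, GPT-5.4 Mini High used 16 times as many tokens as Ling-2.6-flash, and the Anthropic run used roughly 13 times as many.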

The reality is that for a long time, bigger models meant higher costs. Ant Group is challenging that assumption through better inference economics.

Aristotle called it potentiality vs actuality.

What we’re seeing now is a move away from “bigger is better” toward usable intelligence. Smaller. Faster. Cheaper. Deployable.

In practice, a model that’s significantly cheaper and close in capability will outperform a more powerful one that can’t scale.

Every time.

The next layer is already forming: better routing, token optimisation, leaner prompting, hybrid systems.

All focused on reducing unnecessary token burn.

Right now, many AI systems are still inefficient. They over-generate, over-contextualise, and waste tokens that don’t translate into value.

That won’t last.

As adoption scales, inefficiency becomes a bottleneck.

The question right now within most companies isn’t “Which model is smartest?”

It’s “Which model survives contact with scale?”

And increasingly, that answer is being measured in tokens.

HE SHOWED HIS GIRLFRIEND A TERMINAL AT 4AM AND IT WAS ALREADY UP $11,400 WHILE HE SLEPT.

Claude scanned 86 million trades, found the whales that never lose, and built a bot that just keeps printing.

You only need Claude + laptop + 1 hour/day.

Giving this away free for 24 hours. To get it:

1. Comment the word 'Claude'

2. Like and Retweet this post

3. Follow me @ZayvenKnox (so I can DM you)

Everything stays on your device.

No cloud. No leaks. Your work stays private.

Want to see it in action? Drop AirJelly below 👇 and we’ll send you an invite code.

airjelly.ai

AI shouldn’t feel like a tool you open.

It should feel like something that’s already there... watching your workflow.

That’s what @airjellyAI is building:

An always-on, context-aware AI that moves your work forward before you ask.
