Peter S. Nordholt

1.8K posts

@GuutBoy

Interested in cryptography. I mostly tweet excited announcements of new secure computation papers appearing on IACR’s ePrint archive.

Danmark · Joined May 2013

481 Following 480 Followers

Pinned Tweet

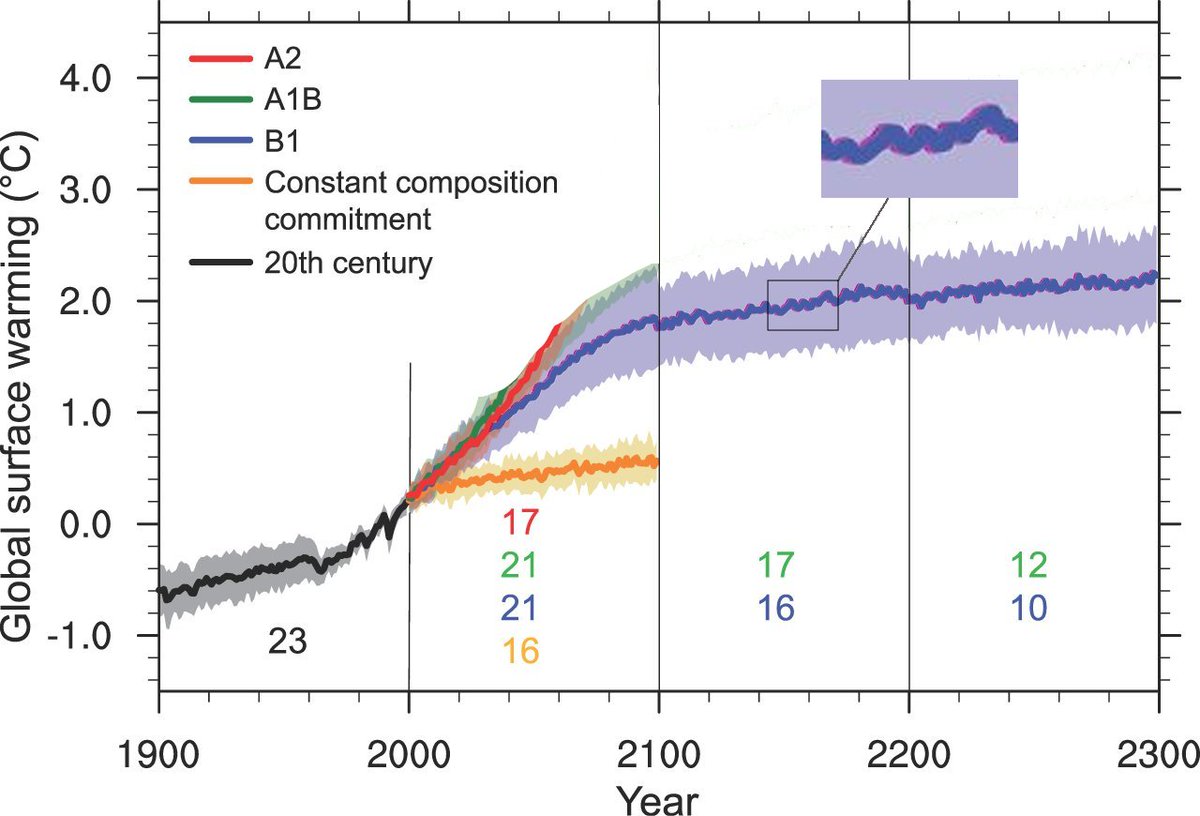

@jonatanpallesen Maybe for reference globalmethanepledge.org states cutting global methane emissions by 30% has potential for a 0.2C reduction in heating by 2050.

@GuutBoy I also found one saying 100-120. Let me redo the calculations with 120

@jonatanpallesen Maybe they are just too busy to mess around on X? ChatGPT thinks it can be estimated at 100-120 over 12 years

@GuutBoy Yes. Really someone who knows climate science should be doing this. But for some reason they aren't

@jonatanpallesen Yeah, I guess the relevant measure in the “all cows disappear”-scenario is the 12 year factor.

@GuutBoy Maybe even more over a 10 year period then? Need to find the accurate number

@jonatanpallesen Your factor of 25 appears to be the 100-year equivalence factor. Over a 20-year period it’s reported as a factor of 84.

Yes.

I found a source that had various estimates of the proportion of greenhouse gases that are emitted from cows, and it gave estimates in the range of a few percent up to 19%.

[A quick sanity check:

38 billion metric tonnes of CO2 each year.

570 million metric tonnes of methane each year

570 million * 25 (times more potent) ≈ 14 billion tonnes of CO2 equivalent. So methane is some fraction of the total greenhouse gas effect. And cow emissions are only part of the total methane emitted. So it checks out.]
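The sanity check above can be reproduced in a few lines. This is only a sketch using the figures quoted in the thread (38 billion tonnes of CO2, 570 million tonnes of methane, and the factors 25, 84 and 120 mentioned in the replies). The function name `methane_share` is made up for illustration, and the "share" it returns counts only CO2 and methane, which is a simplifying assumption.

```python
# Figures quoted in the thread (tonnes per year).
CO2_TONNES = 38e9        # 38 billion tonnes of CO2
METHANE_TONNES = 570e6   # 570 million tonnes of methane


def methane_share(potency_factor):
    """Return (methane in CO2-equivalent tonnes, methane share of CO2 + methane).

    Assumption: only CO2 and methane are counted; other gases are ignored.
    """
    co2e = METHANE_TONNES * potency_factor
    return co2e, co2e / (CO2_TONNES + co2e)


# 25 is the factor used in the sanity check; 84 and 120 are the
# shorter-horizon factors raised in the replies.
for factor in (25, 84, 120):
    co2e, share = methane_share(factor)
    print(f"factor {factor}: {co2e / 1e9:.2f} billion t CO2e, share {share:.1%}")
```

The choice of factor dominates the result, which is what the replies about the 20-year and 12-year factors are getting at.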

I took 16.6% as a high end estimate of the total ghg emitted by cows (as measured by their ghg effect), and used that.

The logic of the figure is, the cycle doesn't add extra methane over time. But it keeps more methane in the air as part of the cycle.

Let's call the total greenhouse gas burden that is added to the atmosphere from carbon and methane in one year G.

Now let's look at what happens if hypothetically all cows are immediately disappeared. Then next year, 16.6% less ghg burden would be added to the atmosphere. That is 1/6G less. Over 6 years that is 1G less. Over 12 years that is 2G less.

But then no more! Because methane is in a cycle of 12 years. So the total gain from disappearing all cows has a ceiling of 2G.

Ok, what does a world with 2G less ghg in the atmosphere look like? 1G is defined as the ghg that is added in a year, so we have a way of modelling this: look at what the projections are for two years in the future, since that is a world with 2G more in the atmosphere than we have today.

Therefore one can draw the red line the same as the blue line, but moved 2 years back.

(Adjustment: 44% of all carbon emissions each year is taken up by the soil and oceans, whereas methane is not. It would be an overestimate to count these carbon emissions as not mattering, but I go ahead and make this overestimate anyway. Then we would instead have 2.88G extra from the disappearance of all cows. So I moved the line 2.88 years back, instead of 2 years.)
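The ceiling argument can be written out as a small calculation. This is a sketch of the model as described in the thread, not a climate model: the cow share is 1/6 of G per year and the methane cycle is 12 years, both from the text. The name `cumulative_avoided` is hypothetical, and the scaling of 1.44 is only one reading that reproduces the 2.88 figure; the thread does not spell out how that number was derived.

```python
def cumulative_avoided(cow_share, cycle_years, horizon_years):
    """Avoided ghg burden (in units of G) after each year.

    Thread's model: each year without cows avoids `cow_share` of G,
    but the gain stops growing once the methane cycle length is reached.
    """
    return [cow_share * min(year, cycle_years)
            for year in range(1, horizon_years + 1)]


COW_SHARE = 1 / 6        # 16.6% of G per year, from the thread
CYCLE_YEARS = 12         # methane cycle length, from the thread
UPTAKE_SCALING = 1.44    # ASSUMPTION: 2 * 1.44 = 2.88 matches the quoted figure

series = cumulative_avoided(COW_SHARE, CYCLE_YEARS, 20)
ceiling = series[-1]
shift_years = ceiling * UPTAKE_SCALING

print(f"after 6 years:  {series[5]:.2f} G")
print(f"after 12 years: {series[11]:.2f} G")
print(f"after 20 years: {series[19]:.2f} G (ceiling)")
print(f"line shifted back by about {shift_years:.2f} years")
```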

@jonatanpallesen I see, it’s a bit hard to read on the graph. I still wonder about drawing this conclusion from the 5% number. Could something important be lost in the translation to CO2 equivalents? In particular is the relatively large short term benefit of cutting methane accounted for?

@GuutBoy It is actually plotted from now to 2050 as well. You can see the blue line, and a red line behind it but slightly skewed to the left.

A nice thread on getting started on MPC. This stuff is going to be big in the future!

Mikerah @badcryptobitch

Recently, many people have been nagging me about getting started with MPC. Here's a short list of resources that are beginner friendly in terms of both books, papers and code

Peter S. Nordholt retweeted

@GuutBoy That's not how the problem is stated though. It just says that it “often” predicts, or that it is 90% accurate in its predictions.

It doesn’t say or imply anything about this equivalence.

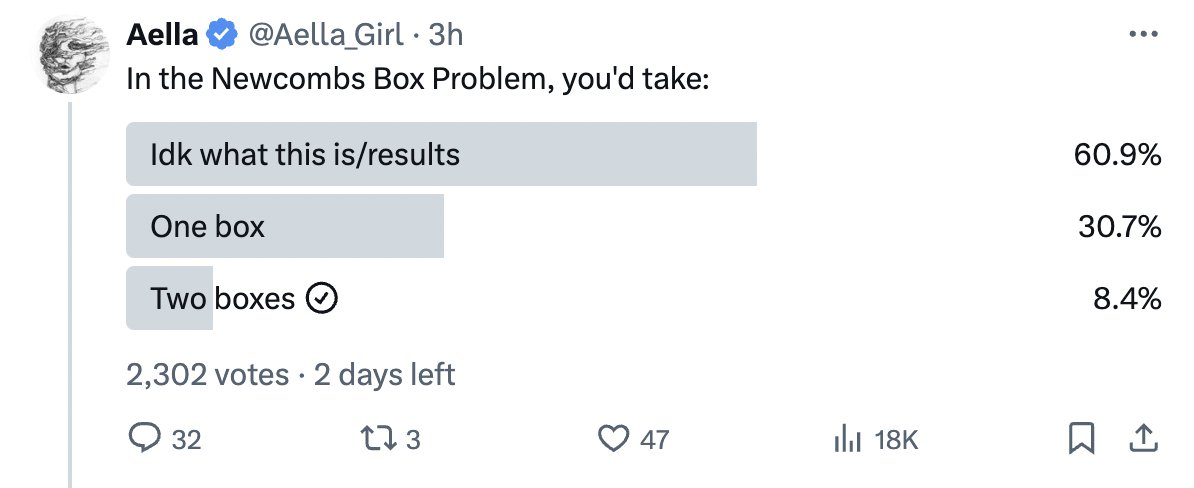

Rather surprising to see so many 1-boxers.

What's in the boxes has already been decided, and won’t change based on your choice. So of course choose 2 boxes.

People come up with some probability payout calculations to support picking 1 box. But such calculations can be tricky, and it is easy to be misled. Consider, for example, the Monty Hall paradox.

On the other hand, the fact that you can't change the past is quite easy and simple. So we have on the side supporting 1-boxing a probability calculation that might be wrong, and on the side supporting 2-boxing the fact that you can't change the past. Clearly the argument for 2-boxing is stronger.

The paradox:

Scientists have invented a machine for predicting human decisions. The machine scans a person’s brain, then scientists input a precise description of a possible situation. The machine does some incredibly complicated calculations, then predicts what the subject would do in the given situation. The machine has been found to be 90% accurate: i.e., if a person is put in the given situation, 90% of the time the person does what the machine predicted.

Your brain was scanned yesterday, and scientists entered a description of the choice situation you are about to face, namely: you are shown two boxes, box A and box B. You may take either box B, or both boxes. Box A contains $1000. Box B contains either $1 million or nothing. If the machine predicted that you would take both boxes, then the scientists put nothing in box B. If it predicted that you would take only B, then they put $1 million in B. What should you choose?
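For reference, the two competing arguments can be put side by side in code. This is a sketch, and the function names are made up for illustration. `expected_payout` is the 1-boxer's calculation, and it rests on the contested assumption that the prediction matches your actual choice with probability 0.9 whichever choice you make. `payout` is the 2-boxer's view, with the contents of box B already fixed.

```python
BOX_A = 1_000
BOX_B_FULL = 1_000_000


def expected_payout(one_box, accuracy):
    """1-boxer's calculation.

    ASSUMPTION: the prediction matches the actual choice with
    probability `accuracy`, regardless of which choice is made.
    """
    if one_box:
        return accuracy * BOX_B_FULL
    return BOX_A + (1 - accuracy) * BOX_B_FULL


def payout(one_box, b_full):
    """2-boxer's view: box B's contents are already decided."""
    b = BOX_B_FULL if b_full else 0
    return b if one_box else b + BOX_A


print("expected, one box:  ", round(expected_payout(True, 0.9)))
print("expected, two boxes:", round(expected_payout(False, 0.9)))

# With the contents held fixed, taking both boxes is always $1000 better.
for b_full in (True, False):
    gain = payout(False, b_full) - payout(True, b_full)
    print(f"box B full={b_full}: two-boxing gains {gain}")
```

Both computations are arithmetically correct. The disagreement is over whether the assumption behind `expected_payout` is justified by the problem statement.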

@jonatanpallesen In my game there is a clear connection between the concrete choice I make and the prediction made. It is equivalent (I believe) to the predictor postponing its choice till after I have made my pick.

@GuutBoy A follow-up question could be

"Is that the same as that it has 90% accuracy in its predictions?"

@jonatanpallesen I like my way better. Can we agree to disagree?

@jonatanpallesen It seems to me we are both making assumptions to serve our view. You are saying the predictor and its reliability are statistical and thus my choice is independent of the prediction. I am saying the prediction will align with my choice with some given probability.

@jonatanpallesen I did say “along the lines of”. It’s just to say that each prediction is right with 90% probability

@GuutBoy Nozick just said "has often predicted". I can't imagine any context where someone says that X often predicts Y behavior, or predicts it with high accuracy, and that actually means that it has perfect prediction but flips to wrong x% of the time. Not even if it was an alien.

@jonatanpallesen It also does not state anything about a high accuracy statistical model. I believe Nozick talks about a (possibly alien) being making “almost certain” predictions. I guess it’s open to interpretation (although in your formulation I will concede it sounds more like a stat thing)

@GuutBoy But you are choosing to read this thing about infallibility into the paradox, when it's not a part of it.

@jonatanpallesen Because Newcomb's Paradox is not a documentary about your office? 🤷♂️

@GuutBoy Why would you think that?

If someone at my work tells me he has made a model that predicts behavior with 90% accuracy, I would not assume he has made an infallible predictor, and flipped it with 10% probability.

@jonatanpallesen Maybe we are understanding the reliability concept differently. I am thinking along the lines of an infallible prediction that’s flipped with 10% probability.

@GuutBoy Nozick's original version even just says "high accuracy", so that would also be an allowed answer. Say your boss wants to know if the approach you made has low, medium, or high accuracy.

@jonatanpallesen In a real-world case like that I can’t be sure my prediction holds with some specific probability. I would have to make some kind of fuzzy statistical argument. Maybe this is where these arguments seem detached from the paradox to me.

@GuutBoy Let's say your job is to identify people at high risk of crossing a red light. You make a machine that checks for color blindness, which it turns out works quite well. Your boss asks you what the reliability / accuracy is of your approach. What do you answer?

@jonatanpallesen Yes, and in that case I will cross a red light with whatever level of reliability the prediction has … or it will not have that reliability, a contradiction. No?

@GuutBoy It's just a machine that measures whether you are colorblind. If yes, it makes a prediction that you will cross a red light. Nothing magical about it.
