Ghulam Rasool

@Gxrasool

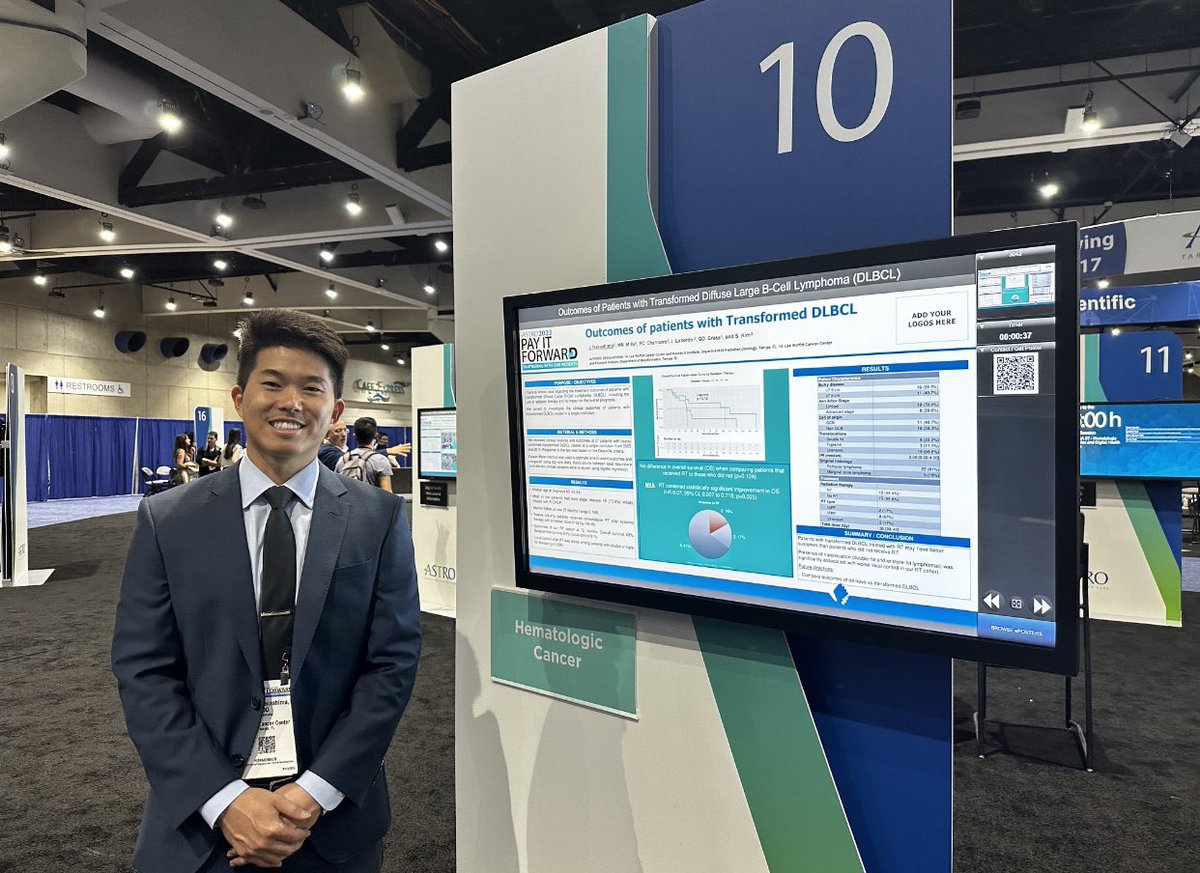

Assistant Member, Machine Learning Department, Moffitt Cancer Center

@sriramk yes - the deck is stacked. Decades of dystopian sci-fi and talk of extinction create the fear required to limit development. Open-source advocates need clear, tangible examples of the tech's value and the importance of US leadership. Who do you think is doing this best?

If this is 7:40pm what happens at 10? 😱😱😱😱

My followers might hate this idea, but I have to say it: There's a bunch of excellent LLM interpretability work coming out from AI safety folks (links below, from Max Tegmark, Dan Hendrycks, Owain Evans et al) studying open source models including Llama-2. Without open source, this work would not be happening, and only industry giants would know with confidence what was reasonable to expect from them in terms of AI oversight techniques.

While I wish @ylecun would do more to acknowledge risks, can we, can anyone reading this, at least publicly acknowledge that he has a point when he argues open sourcing AI will help with safety research? I'm not saying any of this should be an overriding consideration, and I'm not saying that Meta's approach to AI is overall fine or safe or anything like that... just that LeCun has a point here, which he's made publicly many times, that I think deserves to be acknowledged. Can we do that?

Examples of some (awesome) papers I'm referring to above:

Owain Evans et al: x.com/owainevans_uk/…

Dan Hendrycks et al: x.com/danhendrycks/s…

Max Tegmark et al: x.com/wesg52/status/…

(If you haven't read these, do check them out!)

Effective Long-Context Scaling of Foundation Models: the LLAMA 70B variant surpasses gpt-3.5-turbo-16k’s overall performance on a suite of long-context tasks. arxiv.org/abs/2309.16039