Tomas Hromada

@Gyfis

Building observability products that don’t suck @BetterStackHQ. Rails 💎, Vue, Postgres, Clickhouse.

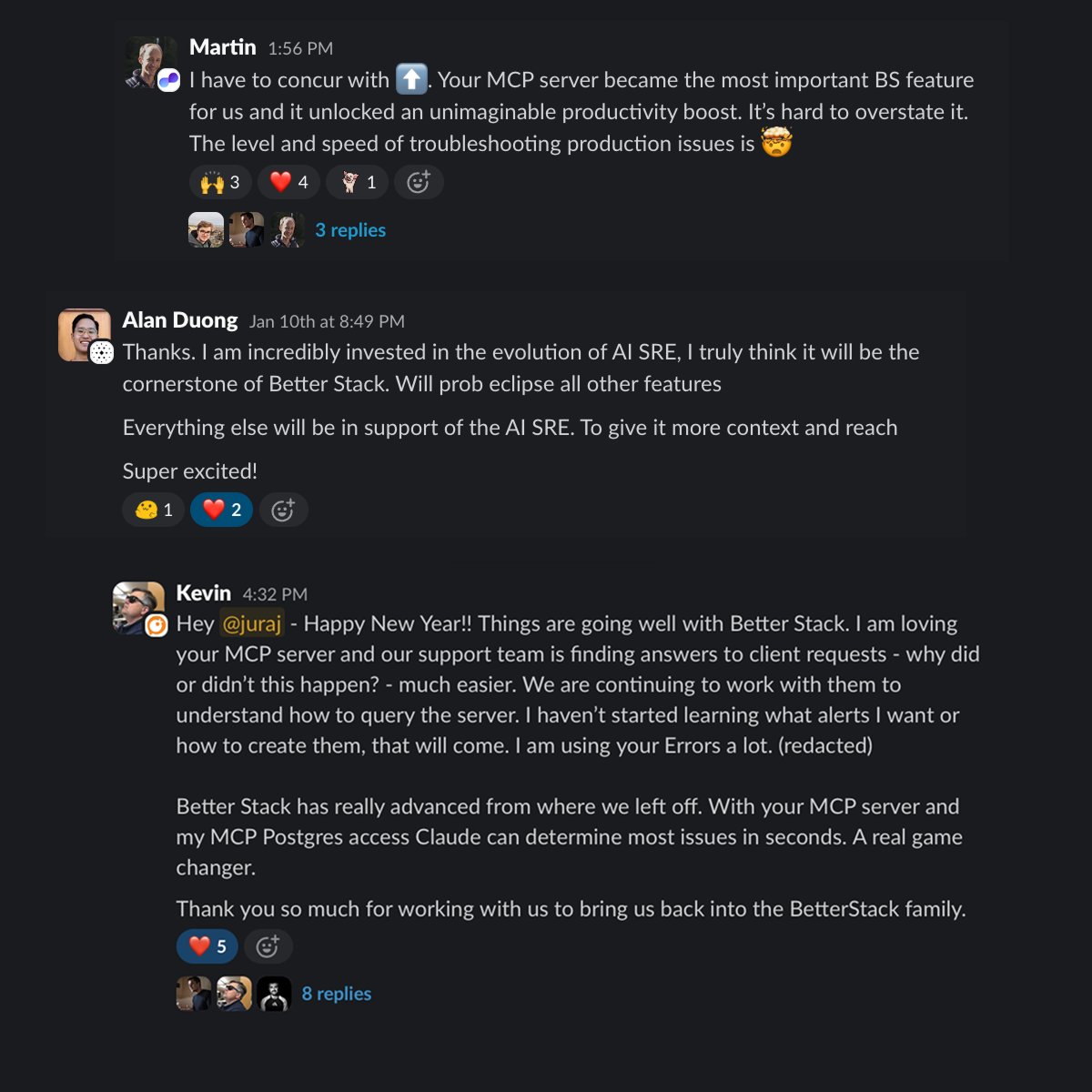

Today, we're making Error Tracking by @BetterStackHQ generally available. Sentry-compatible. AI-native. At 1/6th the price. Here's why we built it, and how to get the most out of it.

What's wrong with error tracking today?

Most teams use Sentry. It's solid! But at scale, the bills get brutal. Just 100M exceptions with 90 day lookback? ~$30,000 on Sentry. We charge ~$5,000 for the exact same thing. The math isn't subtle. And so most teams still end up sampling. Which means missing the exact exception that caused the outage.

The bigger problem: errors are orphaned data. Your exception lands in Sentry. Your logs are in Datadog. Your traces are somewhere else. Root cause analysis becomes a multi-tab archaeology project at 3 am.

We built error tracking natively inside Better Stack: the same platform where your logs, traces, metrics, uptime checks, and on-call schedules already live. Errors are just another signal. They belong together.

The part that changes how your team works: Our AI SRE doesn't just surface errors. It fixes them. See a new exception? One click. The AI SRE analyzes the full context, from stack traces, environment variables, browser sessions, related logs and recent deploys, and opens a pull request. Not a ticket. Not a summary. A pull request with the fix.

This is what happens when error tracking is fully integrated with the rest of your observability stack instead of bolted on separately. The AI has everything it needs to actually act.

The migration is trivial:

1. Keep your existing Sentry SDK. Don't touch a single line of instrumentation code.
2. Point the DSN at Better Stack.
3. Done. Errors flow in. Your dashboards work. Your alerts work.
4. New exception appears. Click "Fix with AI SRE." Pull request lands in your repo.
5. Review, merge, close.

That's the whole workflow.

The AI angle is real, not a marketing badge. LLMs are genuinely good at fixing bugs if they have full context. The reason AI coding assistants sometimes frustrate engineers is incomplete information, not the model. We solve that by giving the AI SRE your entire telemetry stack as context. Stack traces, logs, traces, service maps, previous incidents and much more. All of it, in one place, at the moment it matters.

Observability tools are only useful if you actually ingest all your data. At current prices of other tools, most teams can't afford to. Now you can, and your AI SRE can actually do something about it.
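
For the "point the DSN at Better Stack" step, here is a rough sketch of what the switch amounts to. It assumes the usual Sentry-style DSN shape (`scheme://public_key@host/project_id`) and that the DSN is supplied through the `SENTRY_DSN` environment variable, which the Sentry SDKs read by default. The helper `describe_dsn` and both DSN values are hypothetical placeholders for illustration, not real Better Stack endpoints; the real DSN is whatever your project settings show.

```python
import os
from urllib.parse import urlsplit


def describe_dsn(dsn: str) -> dict:
    """Split a Sentry-style DSN (scheme://public_key@host/project_id) into parts.

    Hypothetical helper for illustration only; it is not part of any SDK.
    """
    parts = urlsplit(dsn)
    # The project id is the last path segment of the DSN.
    project_id = parts.path.rstrip("/").rsplit("/", 1)[-1]
    if not (parts.scheme and parts.username and parts.hostname and project_id):
        raise ValueError(f"not a Sentry-style DSN: {dsn!r}")
    return {
        "scheme": parts.scheme,
        "public_key": parts.username,
        "host": parts.hostname,
        "project_id": project_id,
    }


# Placeholder DSNs (assumptions): copy the real value from your project settings.
OLD_DSN = "https://examplePublicKey@o0.ingest.sentry.io/1"
NEW_DSN = "https://examplePublicKey@errors.example.invalid/1"

# Only the DSN value changes; the instrumentation code stays untouched.
# In a real deployment SENTRY_DSN would be set in the environment, not in code.
dsn = os.environ.get("SENTRY_DSN", NEW_DSN)
info = describe_dsn(dsn)
print(f"events will be sent to {info['host']} (project {info['project_id']})")
```

A check like this can run at startup or in CI to confirm which ingest host a service is actually pointed at before and after the switch.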

you can just ship prometheus exporters. now open source github.com/cloudflare/clo…

Query your logs & metrics with PQL, the Pipelined Query Language, now in @BetterStackHQ.

Inspired by Kusto's KQL and Splunk's SPL, Better Stack now supports the open source PQL equivalent. Implemented in a day 🤯

Thank you pql.dev! 🙏 What should we ship next?