H.Jones

428 posts

i’m so f*ing tired of this

tired of red days that never end

tired of trump dumps crushing dreams

tired of whale manipulation

tired of rugs stealing hope

tired of promises that turn to dust

been in this space for years and

sometimes i wonder why we keep doing this to ourselves

but then i remember… being tired is exactly where legends are born

maybe it’s time to embrace it

maybe it’s time for $TIRED

CA: 8AFshqbDiPtFYe8KUNXa4F88DFh8yD8J5MXyeREopump

In a world where every second counts, latency kills markets.

@linera_io’s solution? Microchains that let prediction platforms scale horizontally without slowing down.

WASM sandboxing + Linux namespace isolation = ultra-secure microchains. @acurast ensures safe execution; @linera_io ensures fast logic.

@Kage_Lion @yupp_ai 700+ models?? wow. I’ll definitely test this project out.

At first I didn’t plan to write about “AI aggregators,” but since I use @yupp_ai regularly, I decided to share my thoughts on this project.

In simple terms, it’s an AI/Web3 platform where one prompt gives you several answers from different models (text/images). You pick the best one, add a short “why,” and that feedback doesn’t disappear - it trains the ranking and slowly tunes your feed. The team claims 700+ models. I didn’t count, but… feels like a lot.

How I actually use it:

→ sanity-check important answers (two models rarely hallucinate in the same way);

→ quick image drafts without extra apps;

→ testing new models: “show me how this one explains X”; sometimes new models outperform the “veterans”;

→ sharing comparisons with friends who ask “which AI should I use?”

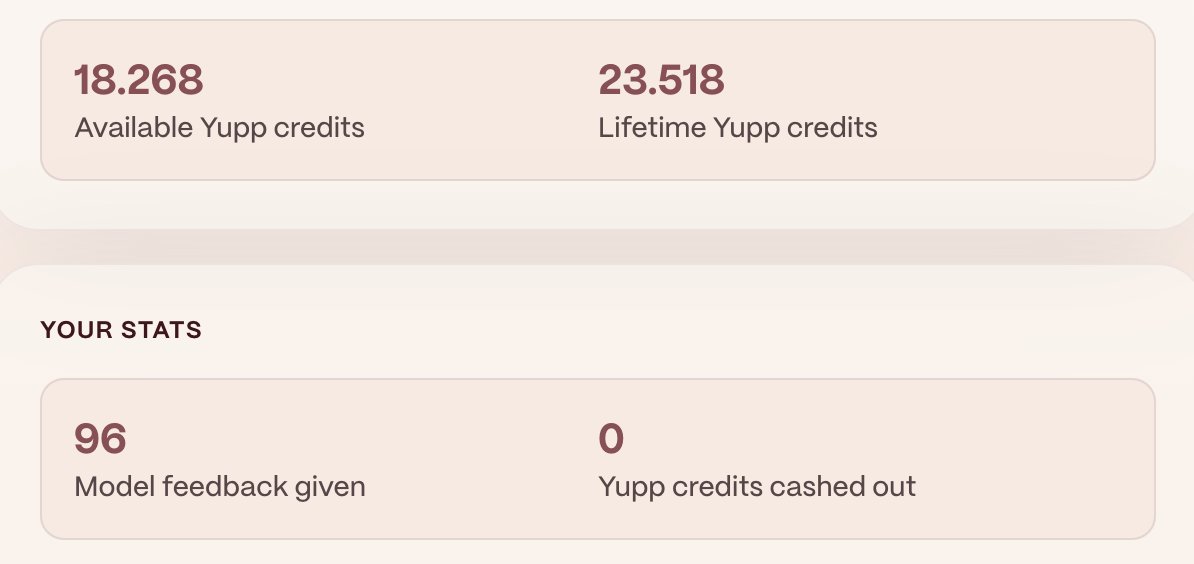

Credits & rewards (short): you spend credits on “$” models, earn them back with feedback. You can withdraw after a threshold. Not a salary - more like “coffee money” plus free access to strong models if you help the system. Honestly, that’s pretty cool.

Notes / Pros:

→ low entry barrier: free and private by default;

→ huge variety - you can try top LLMs without juggling a dozen subscriptions;

→ side-by-side answers reduce “single-model bias”;

→ clean, fast interface;

→ honest loop: you help AI improve, the system helps you solve tasks.

Who benefits the most:

⇒ newcomers - the safest entry point to AI I’ve seen;

⇒ students/analysts - compare, cite, move on;

⇒ builders - quick drafts before deep testing;

⇒ the curious - those who like double-checking facts before acting.

Why it matters: comparison isn’t just convenience. The real scarcity now isn’t “personal data,” it’s human judgment. Platforms that honestly collect diverse “this is better because…” will shape how models evolve. That feels like the most interesting part.

Conclusion: for now, @yupp_ai is the most painless way to explore a whole range of AIs and keep a clear head. If you’re stuck with one chatbot - try two, you’ll feel the difference.

The future is AI. Definitely worth exploring.

Humans click slowly. Agents don’t.

They fire off thousands of moves an hour.

Most chains break under that.

@linera_io was built different: microchains, real-time logic, agent-native by design.

We’re not building blockchains for yesterday.

The future is AI agents, millions of them, moving fast.

Old L1s choke on that load.

@linera_io brings microchains - instant, agent-native, built for the world that’s coming.

Let’s be real: blockchains today still feel slow, clunky, made for humans clicking buttons.

But AI agents won’t wait.

@linera_io flips the script with microchains → real-time speed, infinite scale, and native agent support.

Binance said it best: most chains weren’t designed for millions of AI agents.

They’re right.

That’s why @linera_io exists.

microchains = low latency, real-time UX, and space for the agentic web to breathe.

If blockchains freeze when humans pile in, imagine what happens with swarms of AI agents.

That’s the gap @linera_io fills.

With microchains, every move feels instant.

It’s not “scaling.” It’s starting fresh.

You wouldn’t run a Formula 1 race on a dirt road.

So why run AI agents on yesterday’s chains?

@linera_io built microchains for speed: instant UX, infinite scale, real-time reactions.

Cross-chain delays. Bottlenecks. Queues.

That’s the old world.

@linera_io changes the rules with microchains - each user, each agent, their own track.

No collisions. No lag. Just flow.

How AID behaves in crypto drawdowns

Crypto dumps happen. Red candles everywhere. So the real question: what does AID from @gaib_ai do in a drawdown? I’ll keep it short and honest.

Price (peg) - AID targets ≈ $1. If panic hits, price can wobble a bit on DEX, but swaps + arbitrage + KYC redeem (for partners) help pull it back. I just watch peg and slippage, that’s all.

Yield (sAID) - sAID ratio grows from off-chain revenue (GPU financing + reserves), not from token printing or #DeFi hype. Market down ≠ income dead. It may slow if compute demand cools, but it doesn’t depend on coin pumps.

Liquidity - in red markets depth can get thinner. Plan exits: split orders, keep gas, don’t market dump into tiny pools.

Cooldown/timing - unstaking isn’t instant. There’s a cooldown window by design, so I plan ahead and avoid panic buttons.

Real risks - if many rush the door, peg spreads widen; if borrowers delay, cash flow slows; hardware prices can reprice; off-chain ops still matter. Not zero risk, never is.

My simple playbook - when the market bleeds I park in AID, or stake to sAID if I want the flow. I do a 30-sec check: peg ≈ 1, pool depth ok, sAID ratio trending up. If those three look fine, I stop overthinking.

TL;DR: AID tries to stay stable; sAID keeps earning from real machines, not from market noise. Bad days still happen, but the engine here is compute, not hype.
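For anyone who wants the 30-sec check from the playbook as code, here is a minimal sketch. Everything in it is my own assumption, not anything published by GAIB: the `Snapshot` fields, the 1% peg tolerance, and the $100k depth floor are hypothetical placeholders, and you would have to feed in the numbers yourself from whatever DEX or dashboard you watch.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass
class Snapshot:
    """Numbers you read off a DEX or dashboard by hand (hypothetical fields)."""
    peg_price: float                      # AID price in USD
    pool_depth_usd: float                 # liquidity available near the peg
    said_ratio_history: Sequence[float]   # recent sAID ratio readings, oldest first


def quick_check(
    snap: Snapshot,
    peg_tolerance: float = 0.01,          # assumed: 1% wobble is acceptable
    min_depth_usd: float = 100_000.0,     # assumed: personal comfort threshold
) -> Dict[str, bool]:
    """Run the three checks from the playbook and report each one."""
    peg_ok = abs(snap.peg_price - 1.0) <= peg_tolerance
    depth_ok = snap.pool_depth_usd >= min_depth_usd
    history = snap.said_ratio_history
    # "Trending up" here simply means the latest reading beats the oldest one.
    ratio_ok = len(history) >= 2 and history[-1] > history[0]
    return {
        "peg_ok": peg_ok,
        "depth_ok": depth_ok,
        "ratio_ok": ratio_ok,
        "all_ok": peg_ok and depth_ok and ratio_ok,
    }
```

If `all_ok` comes back `False`, the individual flags show which of the three to look at first. Tune both thresholds to your own position size.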

What if @gaib_ai stops working?

Yeah, I’ve seen that question come up.

Honestly, it’s a good question - especially in crypto, where a project can rug any minute. Tons of examples out there, lol.

The @gaib_ai system has three layers of protection:

There’s always more value locked than required.

A cash reserve in case of issues.

Insurance that covers risks.

This doesn’t mean “zero risk” - let’s be real, that doesn’t exist in crypto or in life.

But I’d say this setup is actually more reliable than most projects.

And honestly, it helps me sleep better knowing my money isn’t just floating on promises, but backed by real protection.

What do you think about @gaib_ai?

gm, fam 👋 Today I’ll talk about programmable data in @irys_xyz.

What I like about this project is that data here isn’t just stored and left idle.

Each piece of data can actually carry its own rules.

Let me give an example so it’s clearer what I’m talking about:

– who can read it,

– how long it will be available,

– or whether the author gets a cut every time the data is used.

Hope that came across clearly.

Normally you’d need separate services and tools for this.

But with @irys_xyz it’s simpler - the rules are baked directly into the data itself.

That’s a huge win for developers: no need to set up access or payments from scratch every time, because the data already knows how it should behave.

And that’s just awesome.

It might sound simple, but it changes a lot.

Instead of asking “how do I control this file,” you just work with the file - and trust the rules inside it.
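To make the idea concrete, here is a toy sketch of data that carries its own rules. This is not the @irys_xyz API or how Irys works under the hood; `Rules`, `DataItem`, and `read` are hypothetical names I made up purely to illustrate the three rules listed above (who can read, how long it is available, and the author's cut).

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Rules:
    """Rules that travel with the data (illustrative only)."""
    allowed_readers: Optional[FrozenSet[str]]  # None means anyone may read
    expires_at: Optional[float]                # unix timestamp; None means never
    author_cut: float                          # fraction of each usage fee


@dataclass
class DataItem:
    author: str
    payload: bytes
    rules: Rules
    author_earnings: float = 0.0


def read(item: DataItem, reader: str, now: float, fee_paid: float = 0.0) -> bytes:
    """Return the payload only if the item's own rules allow it."""
    rules = item.rules
    if rules.allowed_readers is not None and reader not in rules.allowed_readers:
        raise PermissionError(f"{reader} is not allowed to read this item")
    if rules.expires_at is not None and now >= rules.expires_at:
        raise LookupError("this item is no longer available")
    # The author's share is credited on every successful use.
    item.author_earnings += fee_paid * rules.author_cut
    return item.payload
```

The point of the sketch is that the caller never configures access or payments separately: `read` only consults the `Rules` attached to the item.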

Growth of AI, growth of GPU usage, growth of revenue.

Right now everyone’s chasing tokens, airdrops, and hype.

But honestly… the real fuel right now is compute.

All these AI models are chewing through GPUs 24/7.

And every new model? Bigger, hungrier, demanding even more power.

So here’s the question: What happens when GPU demand keeps growing?

The answer feels obvious: whoever controls the compute, controls the money.

That’s the beauty of GAIB - you’re holding a share of AI’s most basic need.

It’s like owning a piece of the future power grid.

No GPU→no AI.

More AI→more load→more revenue.
