Haohan Wang

325 posts

@HaohanWang

Assistant professor @iSchoolUI at UIUC, affiliated with CS and IGB. Previously @CarnegieMellon (across CBD, LTI, MLD). Trustworthy AI & Computational Biology.

Sharing our #ICML’25 paper that introduces REVOLVE — a new approach to prompt optimization that models how LLM responses evolve over time. It achieves +7.8% in prompt tuning, +20.7% in solution refinement, and +29.2% in code generation. 🚀

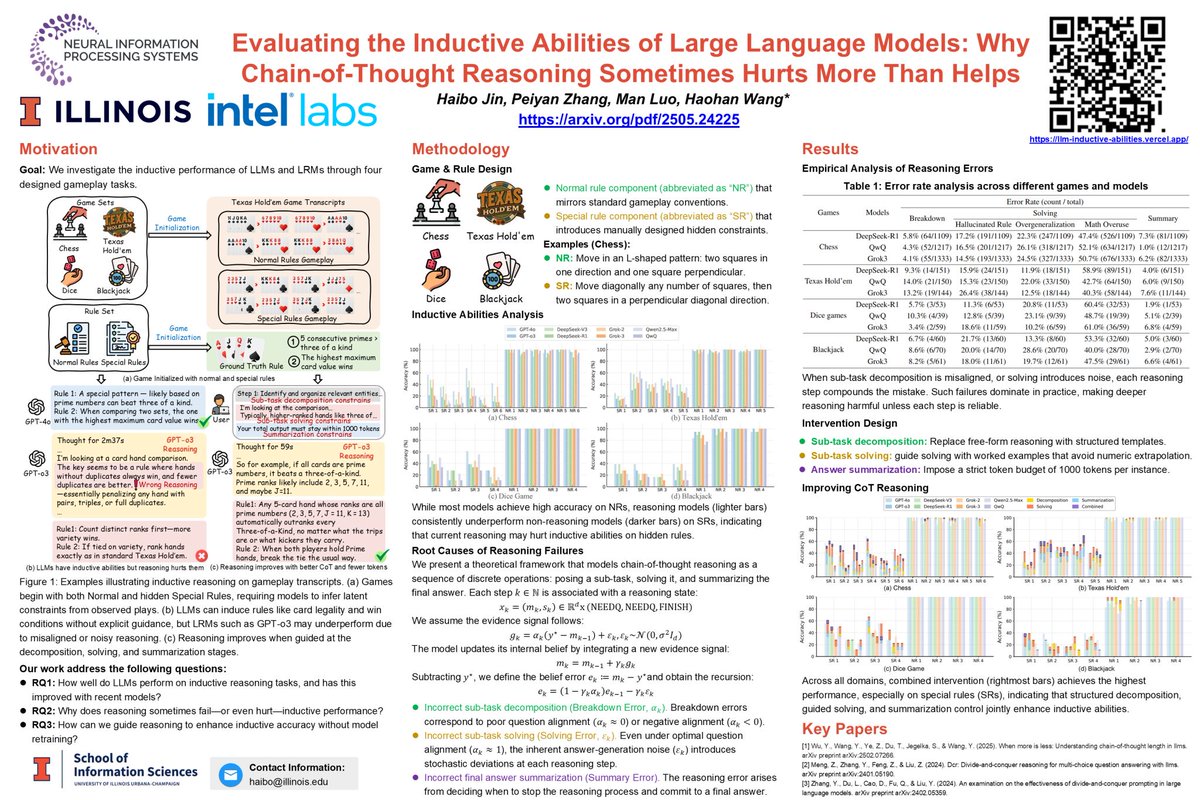

You’re watching a few rounds of poker. ♠️♠️♠️♠️ The cards look normal, but the outcomes don’t. ♦️ No one explains the rules. You just see hands play out. Can you figure out what’s going on?

🎯 That’s the setup, for LLMs. Recently, there have been heated discussions about LLMs’ overall performance and reasoning ability, centering on one hypothesis: more reasoning steps → better performance. We tested that assumption, and the results do not align with the hypothesis. 🙅‍♀️

We built four structured games (♟chess, 🃏poker, 🎲dice, 🂡blackjack), each with hidden rules. The models see only transcripts. No labels. No rulebook. Just sparse examples.

⚠️ CoT-enabled models consistently underperform non-reasoning LLMs. We traced this failure to a three-stage cascade: decomposition errors from misframed sub-tasks, solving errors driven by noisy or misaligned logic, and summarization errors from poor stopping decisions. The deeper the reasoning chain, the more these errors accumulate. Our analysis shows a U-shaped tradeoff: more steps help, until they don’t.

🛠️ To address this, we designed targeted interventions. Structured CoT, anchored examples, and token constraints consistently improve inductive accuracy, with no retraining required.

✅ Reasoning helps only when it’s structured. Blind reasoning hurts.

📄 arxiv.org/abs/2505.24225

The #iSchoolUI has 🔸FOUR🔹 open faculty positions in the following areas: Information Sciences; Information, Culture & Society; Information Behavior/HCI/UX; and Early Literacies! Submit your application by December 15 ▶️ bit.ly/iSchoolatIllin…