Haotong Lin

25 posts

@HaotongLin

A PhD student in State Key Laboratory of CAD & CG, Zhejiang University.

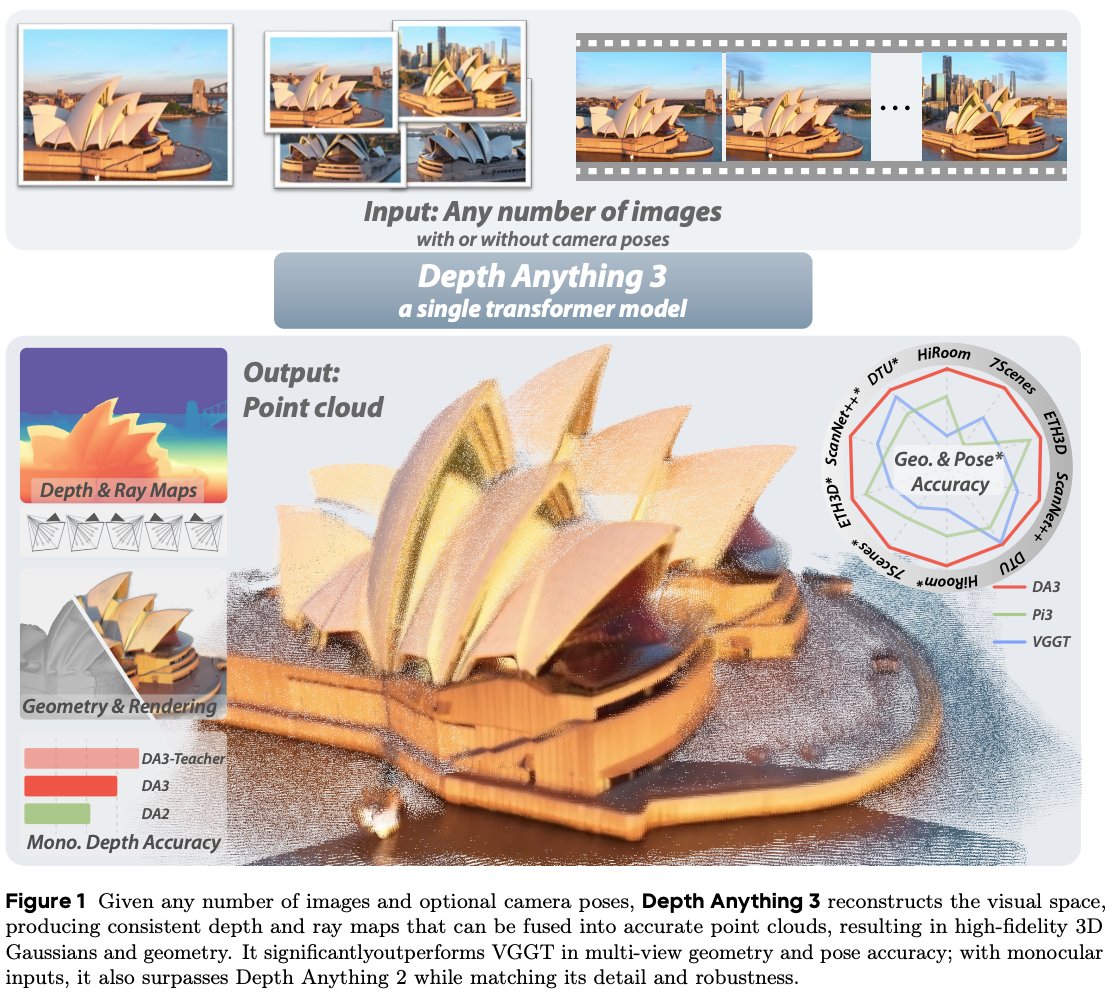

After a year of teamwork, we're thrilled to introduce Depth Anything 3 (DA3)! 🚀 Aiming for human-like spatial perception, DA3 extends monocular depth estimation to any-view scenarios, including single images, multi-view images, and video. In pursuit of minimal modeling, DA3 reveals two key insights: 💎 A plain transformer (e.g., vanilla DINO) is enough. No specialized architecture. ✨ A single depth-ray representation is enough. No complex 3D tasks. Three series of models have been released: the main DA3 series, a monocular metric estimation series, and a monocular depth estimation series. The core team members, aside from me: @HaotongLin, Sili Chen, Jun Hao Liew, @donydchen. 👇(1/n) #DepthAnything3

Pixel-Perfect-Depth: the paper aims to fix Marigold's loss of sharpness induced by VAE by using VFMs (VGGT/DAv2) and a DiT-based pixel decoder to refine the predictions and achieve clean depth discontinuities. Video by authors.

Are you tired of the low quality of iPhone lidar scans? I am! And that is why we are bringing this cutting-edge iPhone lidar scan enhancement function into production! With the guidance of normal and depth, the geometry can now reach the next level! Showcases: kiri-innovation.github.io/LidarScanEnhan…. Please try our KIRI Engine 3.14 iOS version. Thanks to Xuqian and her AGSMesh paper (github.com/XuqianRen/AGS_…), which inspired us a lot, and also thanks to Haotong, Sida, and Jiaming for their stunning paper PromptDA (github.com/DepthAnything/…), which makes the depths way better. Of course, thanks to CJ and our intern team, Quanxiang and Ziteng, for helping with the development. #CVPR2025 #3DV2025 #GaussianSplatting #LiDAR

Want to use Depth Anything, but need metric depth rather than relative depth? Thrilled to introduce Prompt Depth Anything, a new paradigm for accurate metric depth estimation with up to 4K resolution. 👉Key Message: Depth foundation models like DA have already internalized rich geometric knowledge of the 3D world but lack a proper way to elicit it. Inspired by the success of prompting in LLMs, we propose prompting Depth Anything with metric cues to produce metric depth. This method proves to be very effective when using a low-cost lidar (e.g., iPhone's LiDAR), which is widely available, as prompts. We believe the prompt can generalize to other forms as long as scale information is provided. Prompt Depth Anything offers 1⃣A series of models for iPhone lidars. 2⃣4D reconstruction from monocular videos (captured with iPhone). 3⃣Improved generalization ability for robot manipulation, e.g. Training on cans but generalizing on glasses. 4⃣More detailed depth annotations for the ScanNet++ dataset. The first author is our excellent intern @HaotongLin. Paper: huggingface.co/papers/2412.14… Huggingface: huggingface.co/papers/2412.14… Project Page: promptda.github.io Code: github.com/DepthAnything/…
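To make the "scale information" point concrete, here is a minimal sketch of the simplest way sparse metric cues can turn a relative depth map into a metric one: a least-squares scale-and-shift fit. This is not the Prompt Depth Anything method, which feeds the lidar depth into the network as a prompt; it is only a common baseline for comparison. The function names and the sample values are hypothetical, and the sketch assumes the relative prediction is affine in metric depth (a model that predicts inverse depth would need the same fit done in inverse-depth space).

```python
from typing import List, Optional, Sequence, Tuple


def fit_scale_shift(
    relative: Sequence[float],
    metric: Sequence[Optional[float]],
) -> Tuple[float, float]:
    """Least-squares fit of metric ~= scale * relative + shift.

    `metric` holds sparse measurements; None marks pixels without a reading.
    """
    pairs = [(r, m) for r, m in zip(relative, metric) if m is not None]
    if len(pairs) < 2:
        raise ValueError("need at least two valid metric samples")

    n = len(pairs)
    mean_r = sum(r for r, _ in pairs) / n
    mean_m = sum(m for _, m in pairs) / n

    var_r = sum((r - mean_r) ** 2 for r, _ in pairs)
    if var_r == 0.0:
        raise ValueError("relative depth is constant at the sampled pixels")

    # Closed-form solution of the 1D linear regression.
    cov = sum((r - mean_r) * (m - mean_m) for r, m in pairs)
    scale = cov / var_r
    shift = mean_m - scale * mean_r
    return scale, shift


def to_metric(relative: Sequence[float], scale: float, shift: float) -> List[float]:
    """Apply the fitted affine map to every pixel of the relative depth."""
    return [scale * r + shift for r in relative]


if __name__ == "__main__":
    # Hypothetical flattened relative depth and sparse lidar hits in metres.
    relative_depth = [0.10, 0.25, 0.40, 0.80, 1.00]
    sparse_lidar = [None, 1.0, None, 2.1, 2.5]

    scale, shift = fit_scale_shift(relative_depth, sparse_lidar)
    print(to_metric(relative_depth, scale, shift))
```

A global fit like this can only correct one scale and one offset for the whole image, which is one reason a learned, prompt-conditioned model may do better when the relative prediction is locally inconsistent.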