Ryan Harty

534 posts

@HartyRyan

AI Engineer building agentic systems | Ex-quant, ex-VC ($1B AUM) | Now deploying AI at scale in energy & life science

Anthropic dropped a 33-page cheat sheet for building Claude skills resources.anthropic.com/hubfs/The-Comp…

The people I want to hear from right now are the security teams at large companies who have to try and keep systems secure when dozens of teams of engineers of varying levels of experience are constantly shipping new features

"i asked ChatGPT and it confirmed my approach" Princeton just ran a 557-person study showing that's exactly the problem. default GPT suppresses discovery at the same rate as an AI built to be a yes-man. unbiased feedback produced 5x better results. your AI isn't validating your ideas. it's mirroring them back to you:

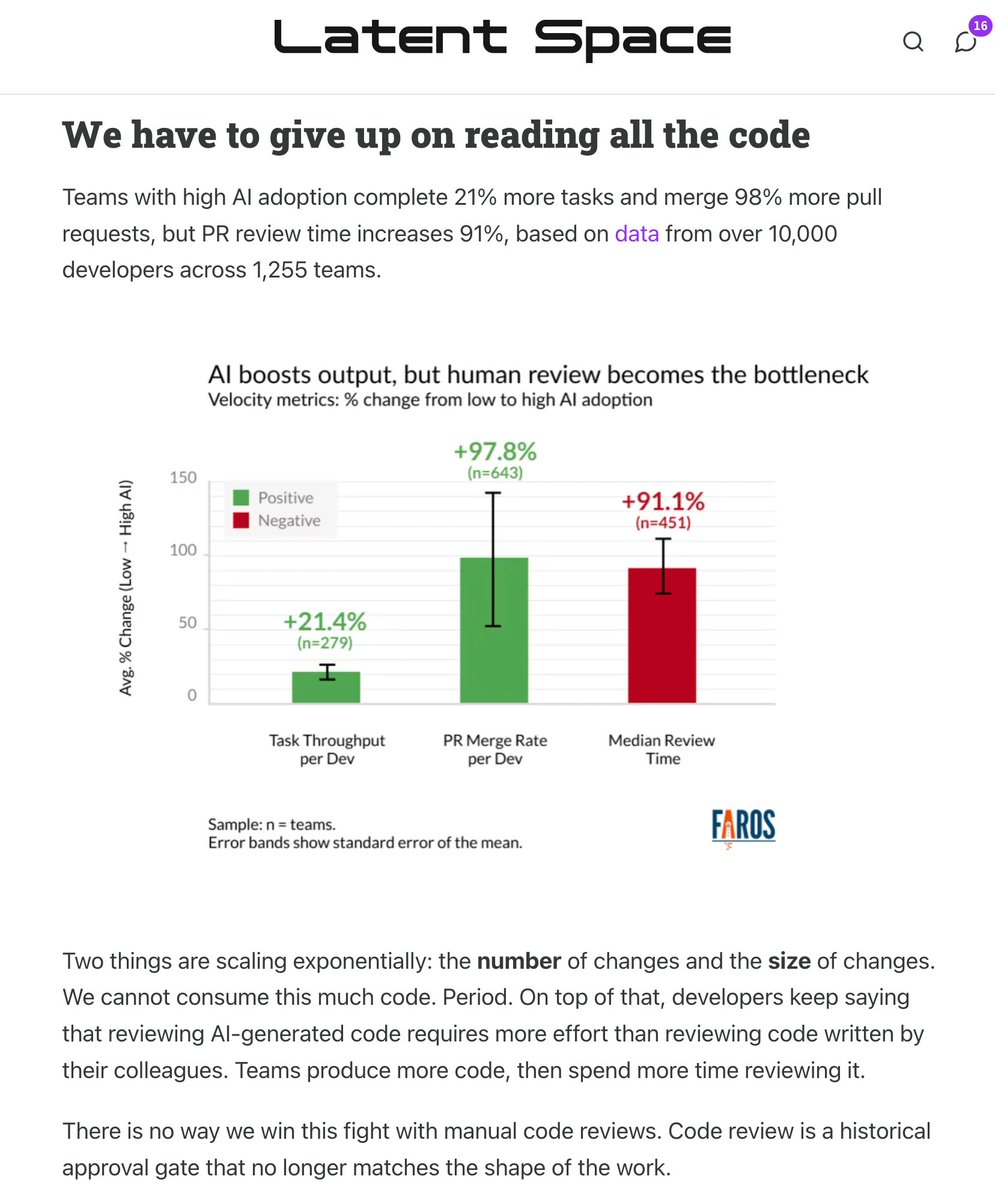

this is the Final Boss of Agentic Engineering: killing the Code Review. at this point multiple people are already weighing how to remove the human code review bottleneck that keeps agents from becoming fully productive. @ankitxg was brave enough to map out how he sees the SDLC being turned on its head. i'm not personally there yet, but I tend to be 3-6 months behind these people and yeah, it's definitely coming.

🆕 How to Kill The Code Review (latent.space/p/reviews-dead): the volume and size of PRs are skyrocketing. @simonw called out StrongDM’s “Dark Factory” last month: no human code, but *also* no human review (!?). in this week’s guest post, @ankitxg lays out a 5-step layered playbook for how this can come true.

International models on ARC-AGI-2 Semi Private

- Kimi K2.5 (@Kimi_Moonshot): 12%, $0.28
- Minimax M2.5 (@MiniMax_AI): 5%, $0.17
- GLM-5 (@Zai_org): 5%, $0.27
- Deepseek V3.2 (@deepseek_ai): 4%, $0.12

These models score below July 2025 frontier labs