
New paper 🚨 #ICLR26

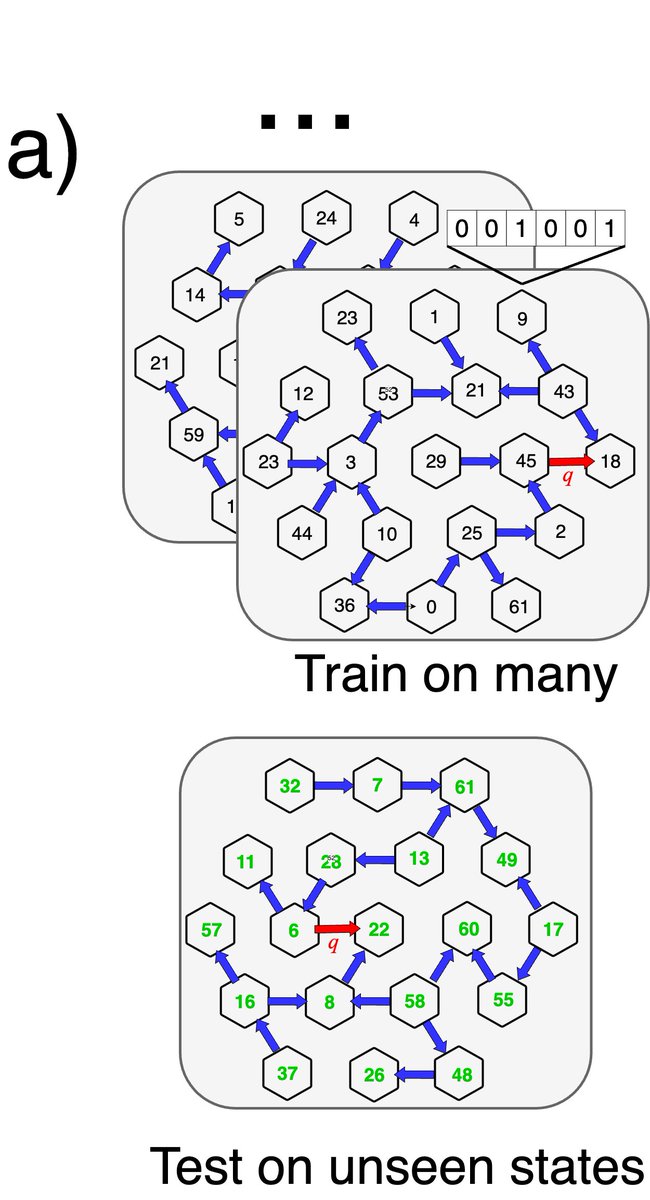

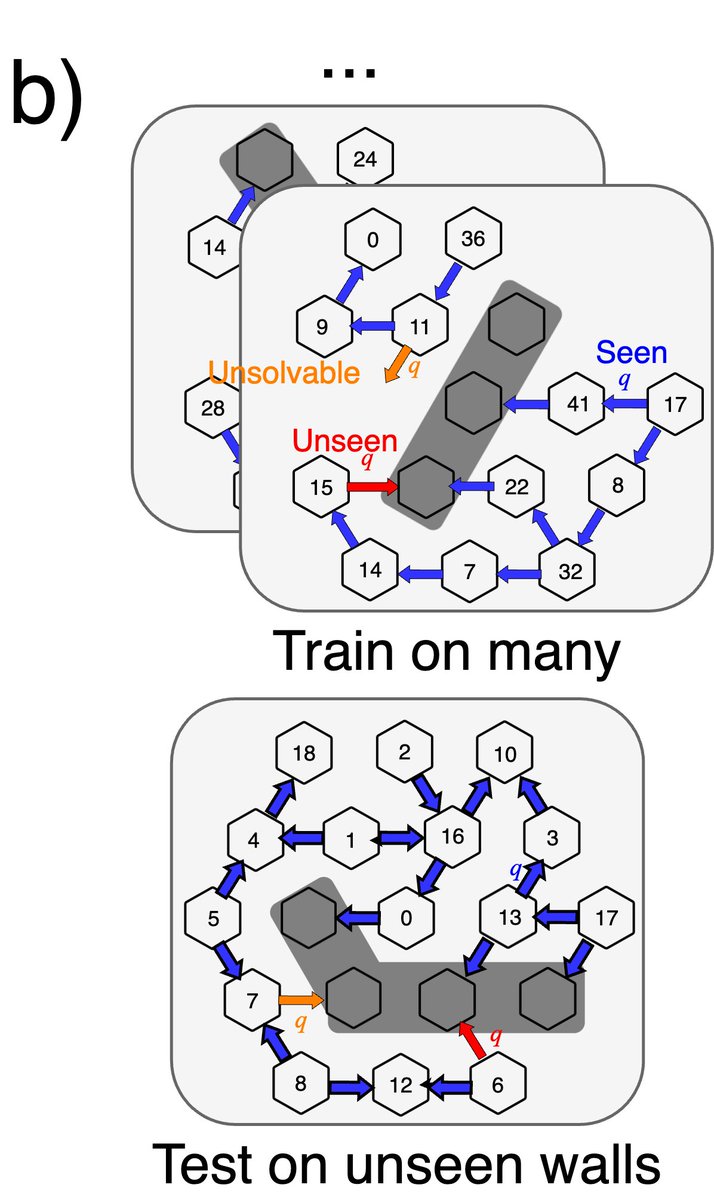

Most world models predict the future from a past trajectory. But neuroscience suggests that such inference can instead be made from temporally independent experiences.

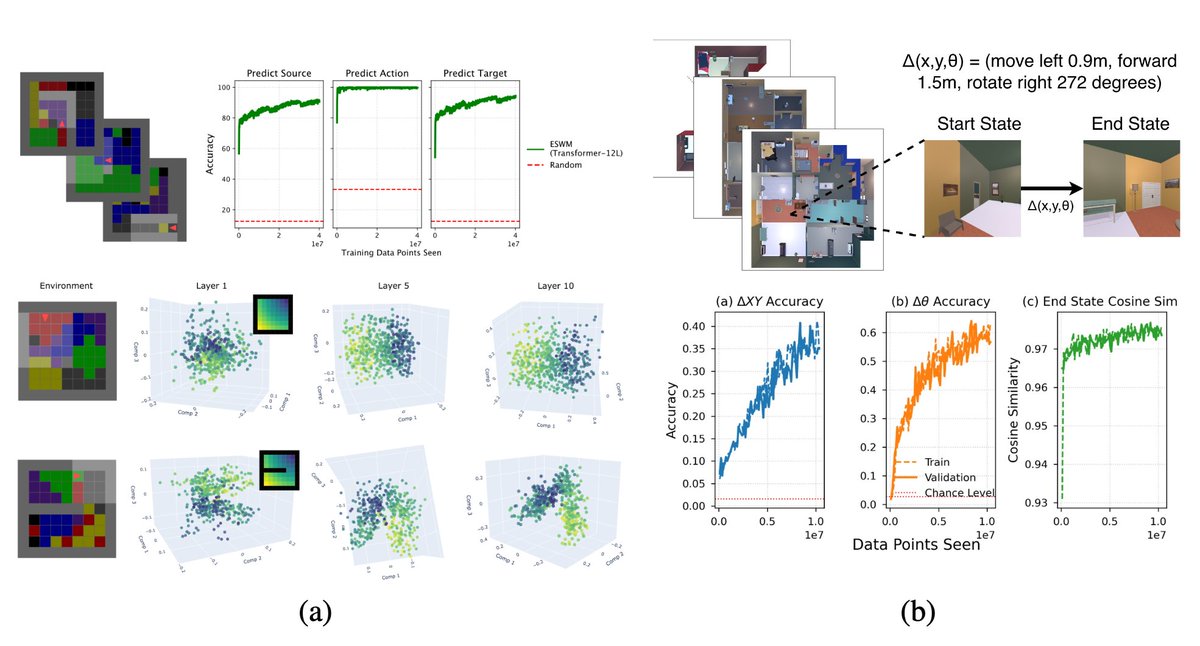

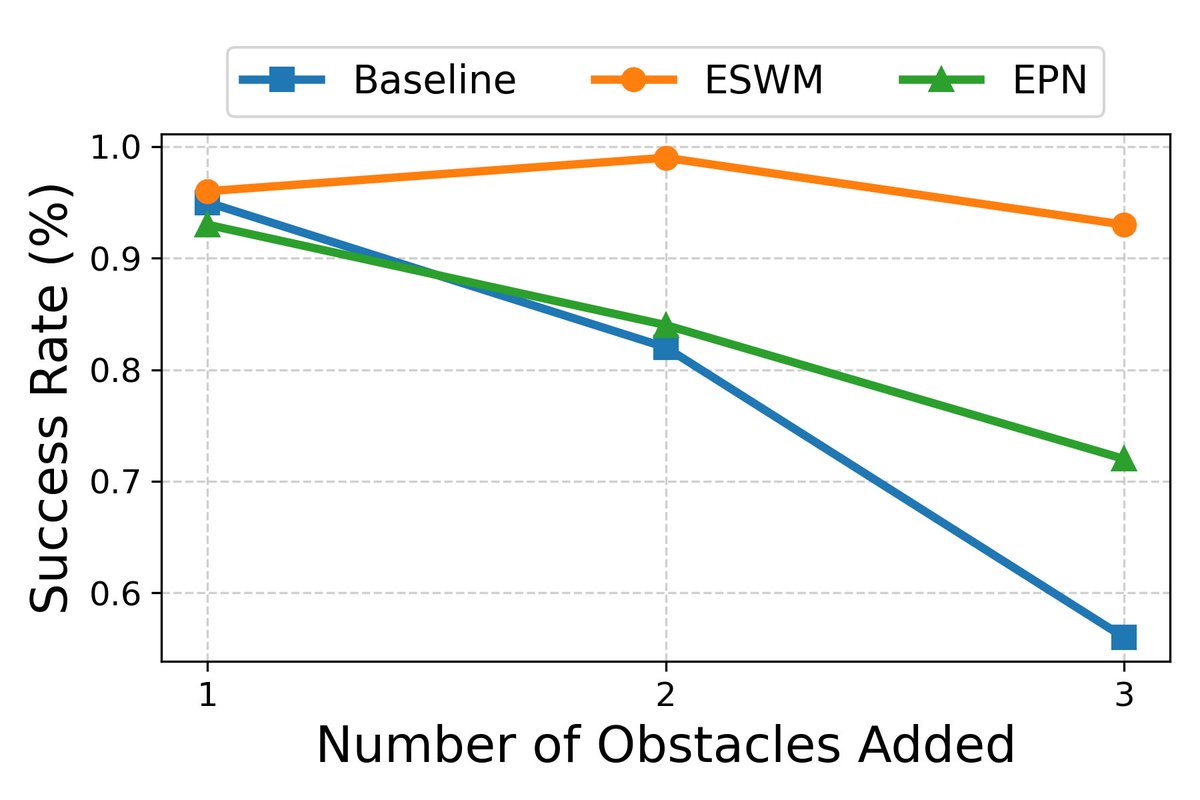

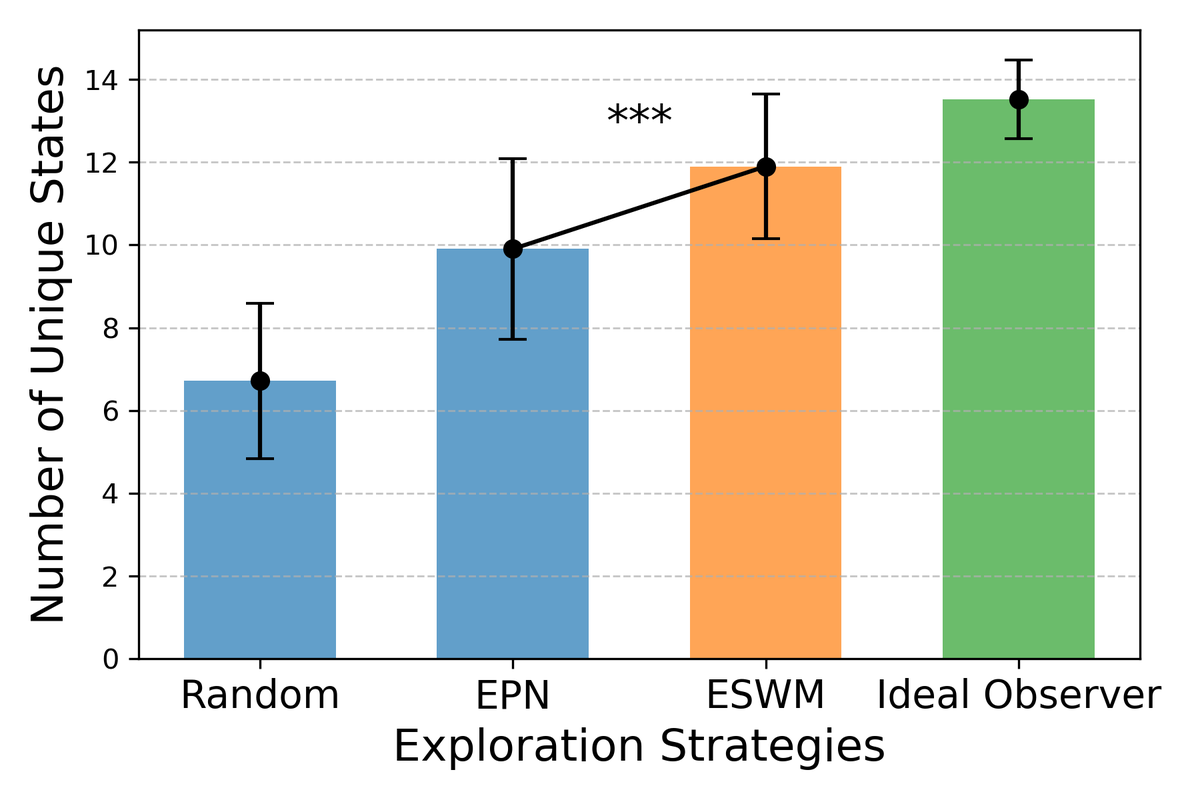

We built the Episodic Spatial World Model (ESWM), a model that does exactly this:
