Hrishi

@hrishioa

Trying to build systems of lasting value at https://t.co/JoR2nVEIRH. Previously CTO, Greywing (YC W21). Chop wood carry water.

Clausetta (Clawd + Rosetta) is still one of the coolest things I've seen hanks do: Just-in-time connectors between complex code. It's also open-source.

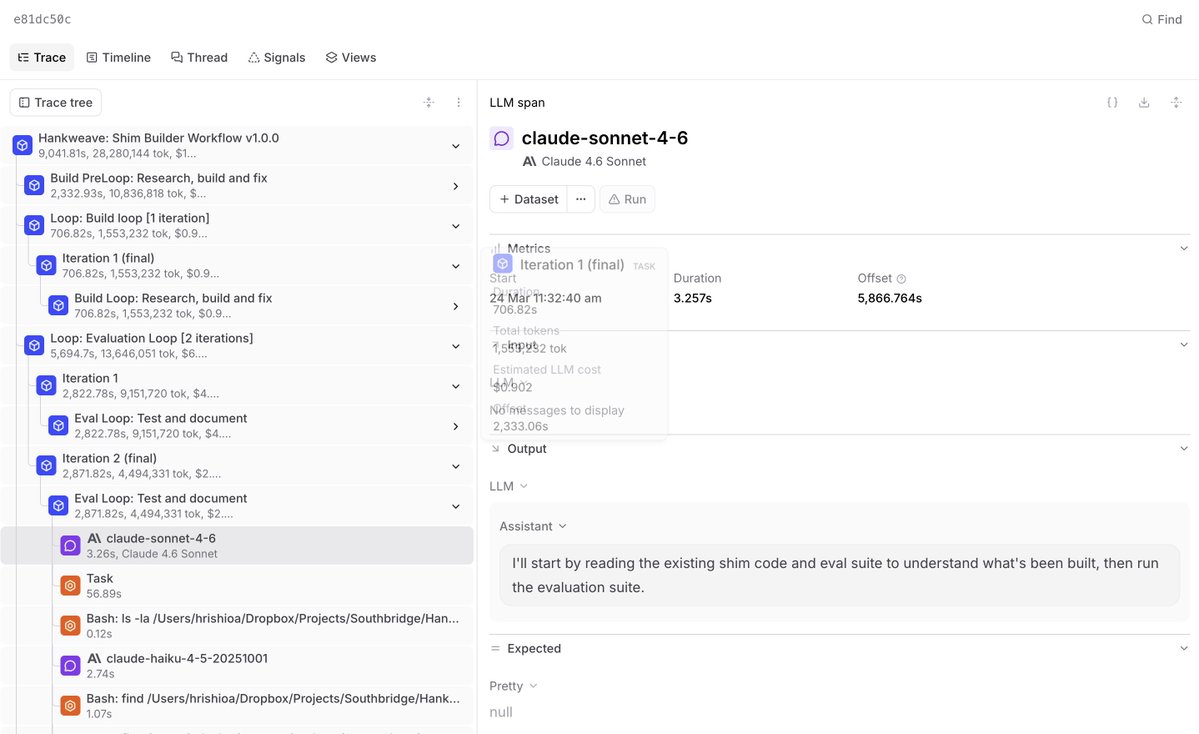

It's a very simple hank and it makes **any** agent harness accessible and compliant with the same interface spec - our interface spec (which is actually the original Claude interface spec that we never upgraded from). So it's an AI program that automatically shims any agent to speak the same language as Claude Code.

Try it:

Clone github.com/SouthBridgeAI/… or substitute it into the command below:

bunx hankweave -i "

3. Multi-columns magazine layout, but _responsive_ and dynamic chenglou.me/pretext/dynami…

i love the continuous vs. step function analogy. i wonder why you chose to use a JSON DSL in hankweave vs. just having the agent implement it in typescript or python? in the past we as an industry made this mistake with build systems (see ant and msbuild for good examples of DSLs implemented in XML). jim weirich got this right with rake where the DSL was embedded in ruby when he created rake. eventually you need all the affordances of a real programming language - flow control, exception handling, expressions etc. the anthropic article was interesting but i also wonder why they chickened out and didn't ship the code on top of their agent sdk. in an agentic world, i wonder whether DSLs are still valuable or whether just having them gen the harness dynamically for the specific task using a well defined api surface is the right answer here?

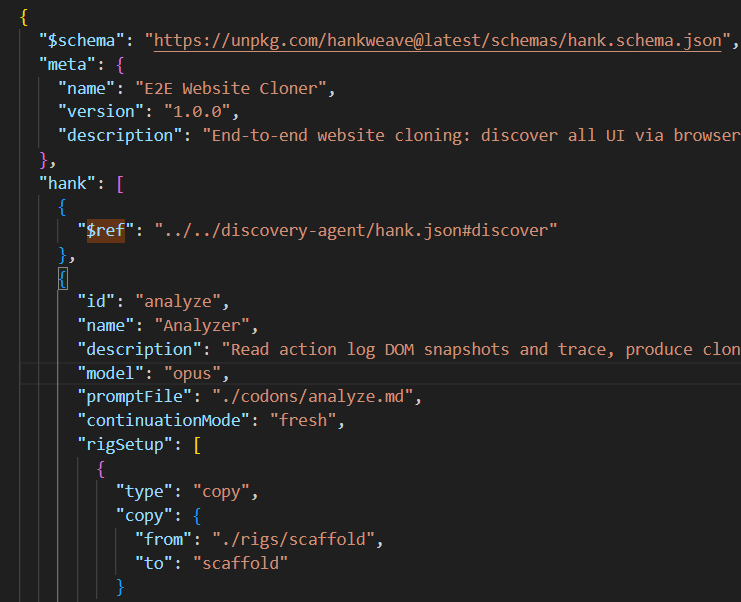

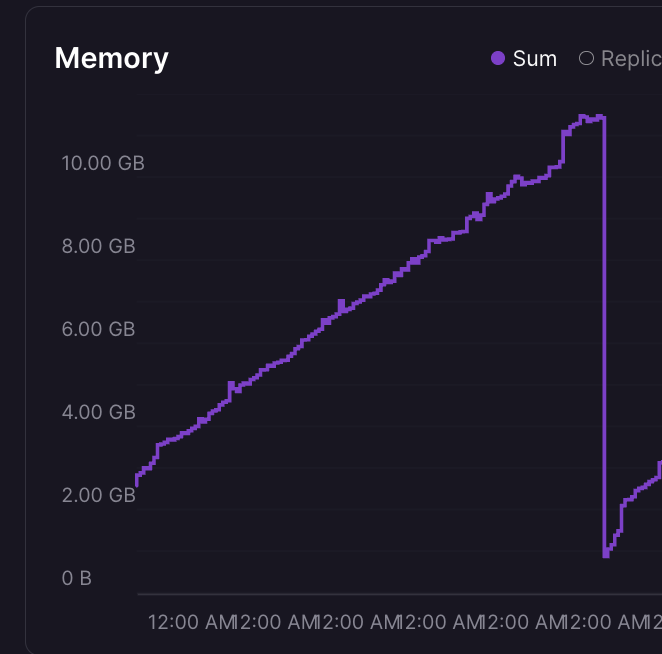

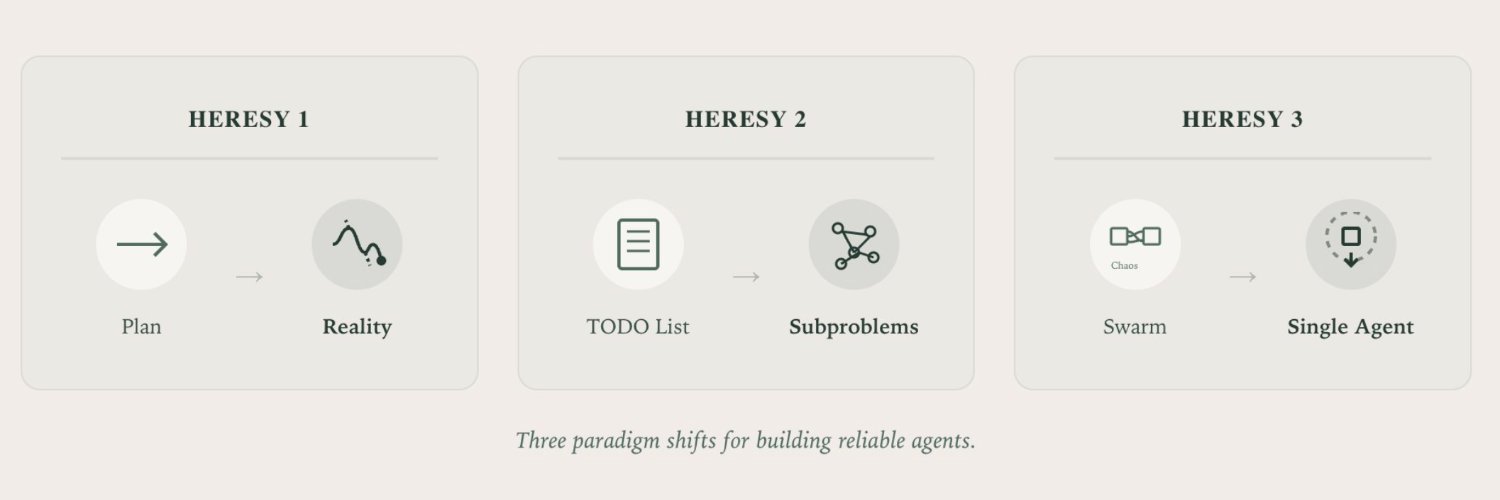

This is THE question we struggled with for a while. In the end we made an opinionated call on a few tradeoffs:

The biggest one is reusability. A JSON DSL is significantly more restrictive, but to us it preserved the code/data boundary well enough to have codons (units of agentic work) be reusable across people, companies or tasks. It also means that we can (as we do now) have LLMs edit these DSLs without crossing that boundary.

The second is surface area - integrating into typescript or python creates the exact same problem of diversity that we were fighting at the time. Because you can do almost anything, almost everything will be done - which means that

- deterministically reasoning about executions and rollbacks before starting (like hankweave's preflight does),
- keeping up test surface area across models, backbones and known behavior, and
- the debugging path (which was the most important thing for us with hankweave)

becomes needlessly complex. In hankweave today, if something breaks - there is a known, well-trodden way to fix and test the fix.

Third one - that I think is a little less important now, but we were super concerned about it in June - is auto-recovery. Hankweave is not turing complete, which makes unrolling executions and automatic budgeting a lot easier.

Remains to be seen if we're wrong - as we accumulate more usage it'll become apparent. The prime philosophy in hankweave has been 'don't build anything that you don't NEED' and so far we haven't needed to move past JSON. Longer-term I think we might build typescript integrations that compile down to the DSL so we get the fun of working with the linter and typechecker back - we've had a few experiments in progress!
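To make the preflight and budgeting point concrete, here is a rough sketch of why a non-turing-complete, data-only program is easy to reason about before it runs. Everything in it is made up for illustration: the `Codon` and `Program` shapes, the `maxCostCents` and `dependsOn` fields, and the `preflight` function are assumptions, not hankweave's actual schema or API. The only idea it leans on is that a plain list of steps with no loops or branches has a worst-case cost you can compute by summing, and ordering mistakes you can catch without executing anything.

```typescript
// Hypothetical shapes, for illustration only. Not hankweave's real schema.
type Codon = {
  id: string;
  prompt: string;
  maxCostCents: number; // assumed hard spending ceiling for this step
  dependsOn?: string[]; // ids of earlier codons whose output this one reads
};

type Program = { codons: Codon[] };

type PreflightResult =
  | { ok: true; worstCaseCents: number; order: string[] }
  | { ok: false; errors: string[] };

// Because the program is a flat list (no loops, no branches), one pass is
// enough to validate it and to bound its total cost before anything runs.
function preflight(program: Program): PreflightResult {
  const errors: string[] = [];
  const seen = new Set<string>();
  let worstCaseCents = 0;

  for (const codon of program.codons) {
    if (seen.has(codon.id)) {
      errors.push(`duplicate codon id: ${codon.id}`);
    }
    for (const dep of codon.dependsOn ?? []) {
      if (!seen.has(dep)) {
        errors.push(`${codon.id} depends on ${dep}, which does not run before it`);
      }
    }
    if (!Number.isInteger(codon.maxCostCents) || codon.maxCostCents <= 0) {
      errors.push(`${codon.id} needs a positive integer maxCostCents`);
    }
    seen.add(codon.id);
    worstCaseCents += codon.maxCostCents;
  }

  if (errors.length > 0) {
    return { ok: false, errors };
  }
  return { ok: true, worstCaseCents, order: program.codons.map((c) => c.id) };
}

// The program itself is plain JSON, so a person or an LLM can edit it
// without touching any executable code.
const exampleJson = `{
  "codons": [
    { "id": "extract", "prompt": "Pull the tables out of the PDF", "maxCostCents": 50 },
    { "id": "summarize", "prompt": "Summarize the tables", "maxCostCents": 200, "dependsOn": ["extract"] }
  ]
}`;

const result = preflight(JSON.parse(exampleJson) as Program);
console.log(result);
```

If the steps were arbitrary typescript or python instead, the same check would need to analyze real control flow, which is the surface-area problem described above.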