Rubio Huang


We built FutureX, the world’s first live benchmark for real future prediction: politics, economy, culture, sports, etc. Among 23 AI agents, #Grok4 ranked #1 🏆 Elon didn’t lie. @elonmusk, your model sees further 🚀🍀 Leaderboard: futurex-ai.github.io

❗️Open-source MoE kernels alert❗️

Introducing COMET, a computation/communication library for MoE models from ByteDance. Battle-tested in our 10k+ GPU clusters, COMET shows promising efficiency gains and significant GPU-hour savings (millions 💰💰💰).

Does integrating DualPipe & DeepEP take too much effort? Try COMET, a drop-in replacement for your MoE block!

Key points:
✅ Deployed on 10K+ GPU cluster, saved MILLIONS of GPU hours
✅ 1.96x layer-wise speedup, 1.71x end-to-end boost for MoE models
✅ Fine-grained computation-communication overlapping for MoE

Why devs care:
📌 Plug-and-play with existing frameworks (just a few lines of code change)
📌 Supports ALL MoE parallel modes: TP/EP/EP+TP
📌 MLSys'25 top scores (5/5/5/4), battle-tested at scale

📄 Paper: arxiv.org/pdf/2502.19811
📦 Code: github.com/bytedance/flux…

Great work by Shulai, @NingxinZheng_ and team

#OpenSource #LLM #MOE #MLSys2025 #CUDA

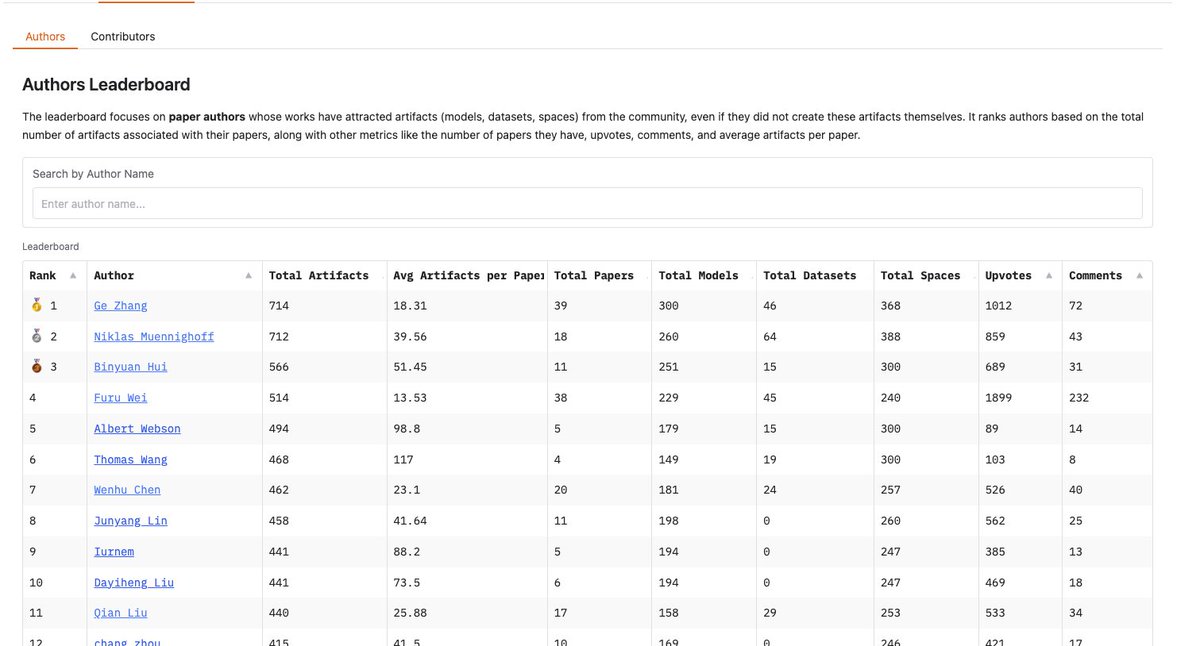

HuggingFace Paper-central now hosts open-source leaderboards. This is like an h-index, but for 🤗 artifacts. Discover the authors whose papers have attracted the most open-source artifacts (datasets, models, or spaces), and the most active contributors who have developed artifacts associated with papers.