Sabitlenmiş Tweet

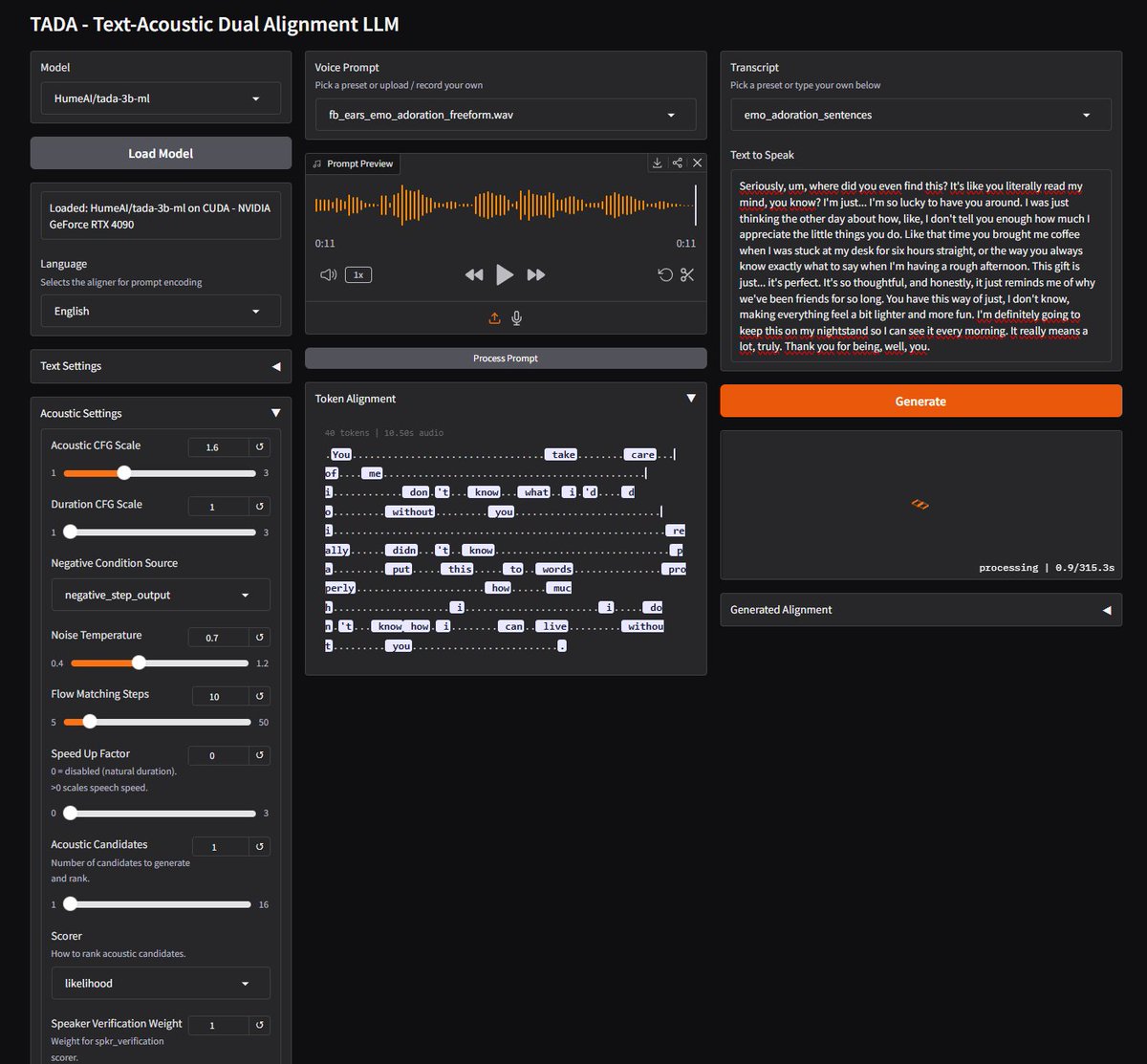

Today we're releasing our first open source TTS model, TADA!

TADA (Text Audio Dual Alignment) is a speech-language model that generates text and audio in one synchronized stream to reduce token-level hallucinations and improve latency.

This means:

→ Zero content hallucinations across 1,000+ test samples

→ 5x faster than similar-grade LLM-based TTS

→ Fits much longer audio: 2,048 tokens cover ~700 seconds with TADA vs. ~70 seconds in conventional systems

→ Free transcript alongside audio with no added latency

English