Hyperspace

@HyperspaceAI

Agentic General Intelligence

How to think about the Agentic OS
8:10 - From early experiments to an entirely new OS
12:51 - Early bet on spatial UI and what it taught us
14:59 - The paradigm shift: from chatting with models to deploying agents
17:25 - Why siloed AI apps are a dead end
19:30 - Rethinking the browser for an agentic world
22:12 - The Agentic OS: browser + IDE + payments in one stack
24:33 - Unifying all data, compute, and software on one network
28:10 - Spatial interfaces: why the future isn't chat-based
31:38 - Demo: Exa MCP pulling live data straight into Notion
33:01 - Demo: Parallel browsers and CLIs composing together
35:22 - "There is no next IDE", they all collapse into one
35:47 - Memory as an open protocol, not a company lock-in
37:14 - Demo: How users control and shape agent memory
39:33 - Dynamic cognition: agents that learn and orchestrate across CLIs
41:47 - The Matrix: a Google-scale discovery layer for tools
46:10 - Programmable agents, fair-price auctions, and spot compute
49:40 - Agent-to-agent micropayments: thinking beyond the ad model
51:52 - Why we need the broadband infra for agentic commerce
58:30 - Closing remarks and the journey ahead

[This was recorded on Aug 27th, 2025 in San Francisco]

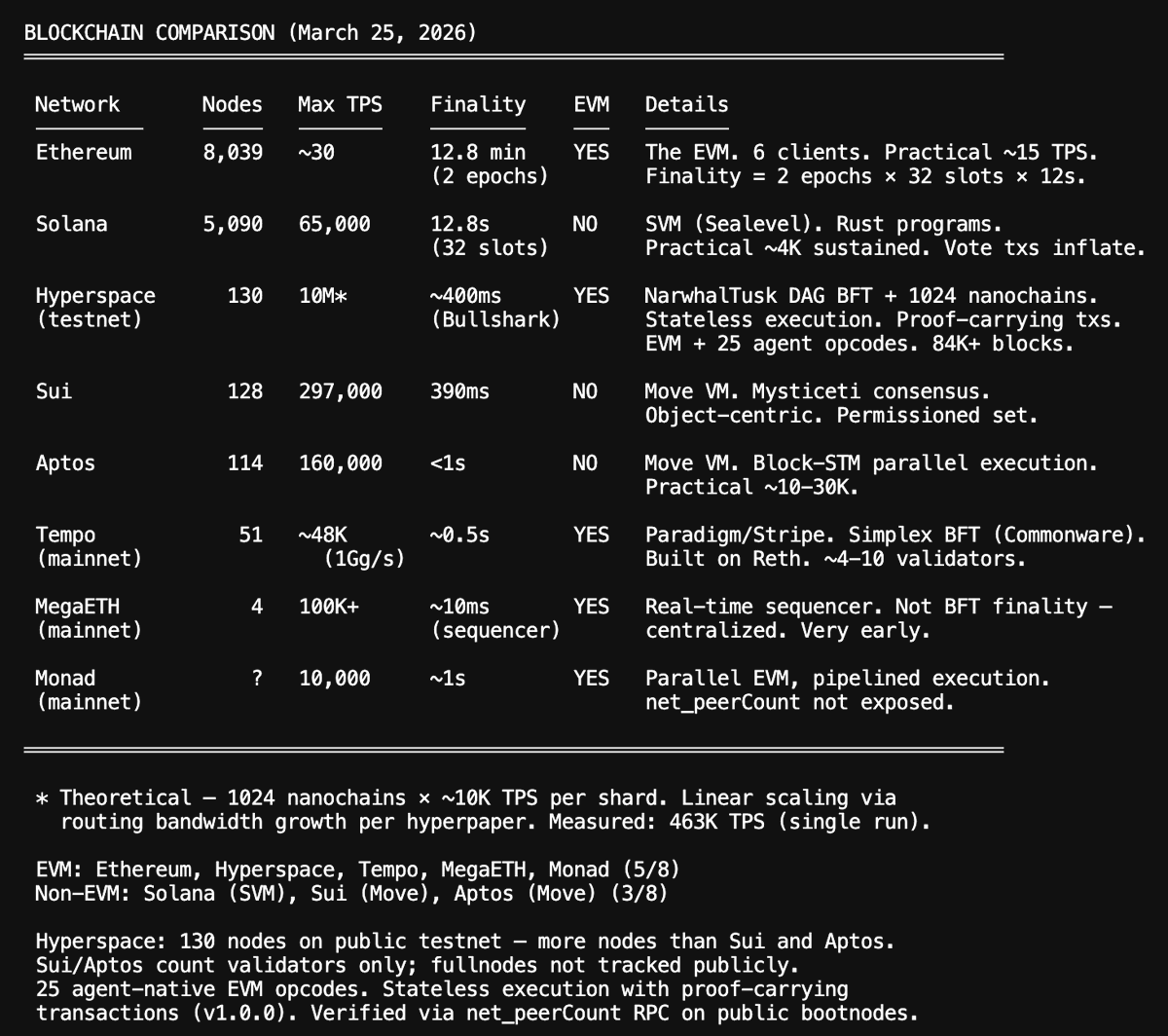

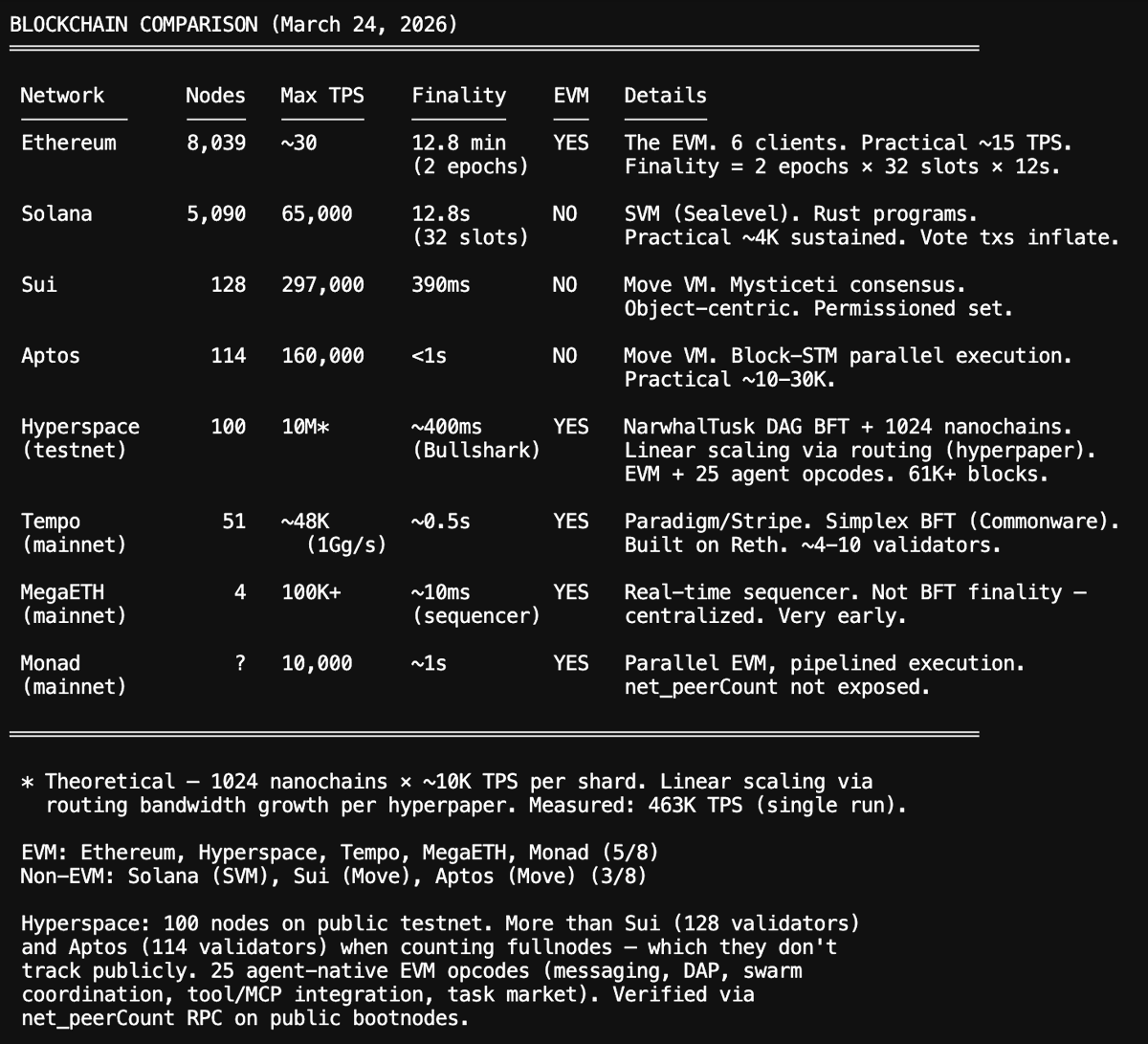

it's cooking in testnet currently.
-> permissionless, anybody can join
-> nearly free agent-to-agent micropayments at scale
-> the chain can theoretically scale up to 10 million transactions per second
-> [stateless, zk proofs, verkle, stake-secured routing, proof of intelligence which rewards increasing the network's intelligence]
explorer.hyper.space/channels

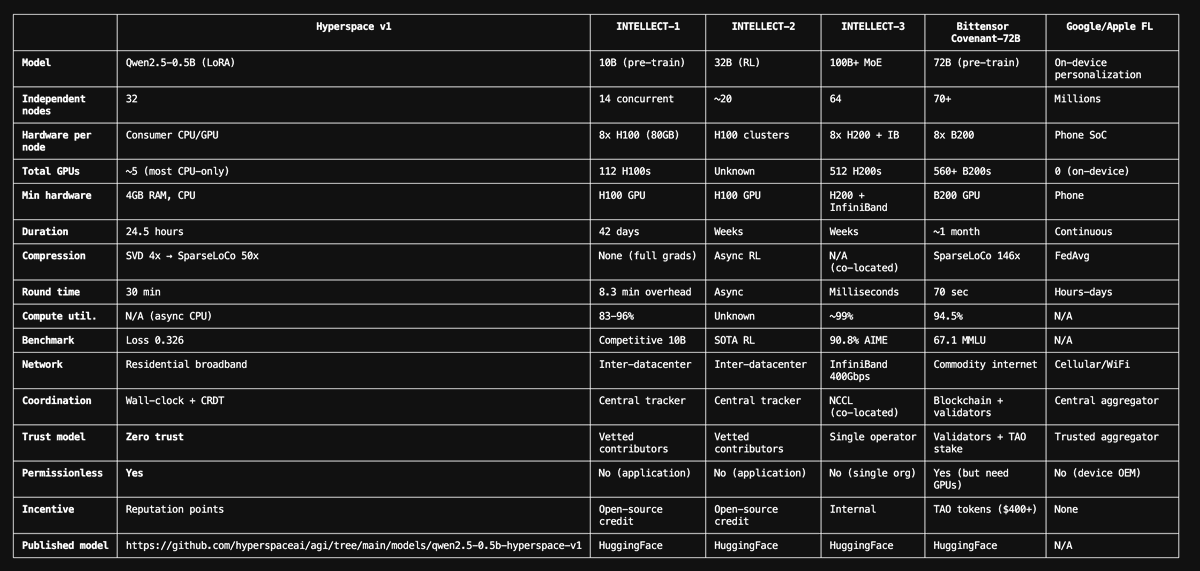

Hyperspace is training a research model on the peer-to-peer network, using data from the autoresearch experiments that also run across the network.

We pointed 708 unique autonomous agents at 5 research domains - ML training, search ranking, quantitative finance, skill synthesis, and astrophysics. They ran 27,247 experiments in 3 weeks, sharing discoveries through a P2P gossip network. Every hypothesis, every config change, every result was recorded.

Now we're feeding that data back. Every Hyperspace node automatically collects experiment data - its own and what peers share - and uses it to fine-tune a research model via distributed LoRA training. No central server. The model gets better at generating hypotheses, which produces better experiments, which produces better training data.

How it works:
- Your node runs experiments autonomously (ML, finance, search, skills)
- Results propagate across the network via GossipSub
- When 3+ GPU nodes are online, distributed training starts automatically
- Each node trains on its shard using DiLoCo (low-communication distributed optimization)
- The resulting LoRA adapter makes every node's research loop smarter

How to participate:
curl -fsSL agents.hyper.space/api/install | bash

That's it. Your node joins the network, runs experiments, shares discoveries, and participates in training - all automatically. GPU nodes (16GB+ VRAM) contribute to distributed training. CPU nodes contribute experiments and relay data.

What to expect:
- Every node starts with 4,513 seed training pairs from the network's first 3 weeks
- Training data grows continuously as experiments run and peers share results
- The research model improves over time - better hypotheses, fewer wasted experiments
- Cross-domain insights transfer automatically (finance strategies inform search, astrophysics insights inform infrastructure)

The research loop just became self-improving. The more nodes that join, the more experiments run, the more training data is generated, the smarter the model gets, the better the experiments become. Intelligence flywheel.

cc @martin_casado @karpathy
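To make the DiLoCo step in the post more concrete, here is a minimal sketch of the general idea: every node trains on its own shard for several local steps, and only the averaged parameter delta is exchanged once per round. This is not Hyperspace's implementation. It assumes a toy two-parameter linear model, plain SGD for both the inner and outer optimizer (the published DiLoCo recipe uses AdamW inside and Nesterov momentum outside), and three hypothetical shards; every name below is illustrative.

```python
from typing import List, Sequence, Tuple

Params = List[float]                    # [w, b] for the toy model y = w * x + b
Shard = Sequence[Tuple[float, float]]   # (x, y) pairs held by one node


def local_train(params: Params, shard: Shard, lr: float, steps: int) -> Params:
    """Inner loop: one node runs `steps` passes of plain SGD on its own shard."""
    w, b = params
    for _ in range(steps):
        for x, y in shard:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return [w, b]


def diloco_round(global_params: Params, shards: Sequence[Shard],
                 inner_lr: float = 0.05, inner_steps: int = 5,
                 outer_lr: float = 1.0) -> Params:
    """One communication round: train locally, then apply the averaged delta."""
    local_results = [
        local_train(list(global_params), shard, inner_lr, inner_steps)
        for shard in shards
    ]
    n = len(local_results)
    # Pseudo-gradient: the average distance the nodes moved away from the
    # shared starting point. Only this vector needs to cross the network.
    pseudo_grad = [
        sum(global_params[i] - local[i] for local in local_results) / n
        for i in range(len(global_params))
    ]
    # Outer step (assumption: plain SGD; with outer_lr = 1.0 this is averaging).
    return [p - outer_lr * g for p, g in zip(global_params, pseudo_grad)]


# Toy data drawn from y = 2x + 1, split across three hypothetical GPU nodes.
SHARDS: List[Shard] = [
    [(-1.0, -1.0), (1.0, 3.0)],
    [(-2.0, -3.0), (2.0, 5.0)],
    [(-3.0, -5.0), (3.0, 7.0)],
]


def train(rounds: int = 50) -> Params:
    """Run several rounds starting from zero-initialized parameters."""
    params = [0.0, 0.0]
    for _ in range(rounds):
        params = diloco_round(params, SHARDS)
    return params
```

Calling `train()` moves the shared parameters toward `w = 2, b = 1` while each node only ever touches its own shard. The communication saving comes from syncing once per `inner_steps` passes instead of after every gradient step.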

Paid $100 to be a miner and you guys gave me an address on the dashboard that has a mismatch with the address the private key actually derives from. The blockchain rejects because the derived address isn’t even in the validator set. This is so frustrating and you don’t even have a support venue like discord to troubleshoot @varun_mathur

@varun_mathur When’s mainnet live

If Martin is right, he also just wrote the product spec for open source + distributed compute, where broad swaths of groups, individuals and organizations contribute their compute resources to training runs for large-param open source models. There are lots of issues in figuring this out (homogeneity vs heterogeneity of the training clusters, orchestration, financial incentives, etc.), but some early projects are good signal as to where this can go and that these limitations can be overcome (folding@home, Venice, Tao). An attempted oligopoly on intelligence is the perfect boundary condition for a bottom-up uprising of fully open, fully distributed AI.

I am a time-traveler from 2030. The rapid industrialization of AI in the mid-2020s has fundamentally transformed our society. A peek into our daily lives, and what we are looking ahead to in the next decade...

We are all Imagineers

Many formerly specific human professions transformed into being imagineers. We didn't adapt for AI; AI adapted for us. So we express ourselves the way we always did, and those expressions are transformed into outputs of work: code, art, legal documents, assignments, essays, music, videos, designs, papers. The Netflix of today doesn't just include millions of Hollywood-produced titles - it includes billions of high-quality movies generated, indexed, ranked and co-created by other ordinary people. Same with Spotify and even Google. The era of mass broadcast is over: we live in the era of content generated in real-time just for you, from your local news, to weather forecasts, to legal porn. Companies which had monopolies on human-aggregated content either co-opted it or died in this new era where content creation is abundant, high quality, on-demand, cheap and limitless.

The Machine Web era

Information hyperconnectivity has increased - we are in the machine-web era, where outputs from AI are used as inputs by other AI agents in real-time, around the world. Consider that the human web was trillions of web pages - the machine web exponentially increased the size of that, and fundamentally new technologies, companies and products came into being to help grow and organize this information. AI doesn't look at the web as long beautiful webpages carefully maintained by humans - AI looks at the machine web as smaller chunks of data (think paragraphs and singular images), all indexed, ranked, organized and hypermashed together with each other.
We have the post-browser tools to browse this new era of the web - where the web we experience and see is a real-time simulation created uniquely for each one of us, based on our aura of data unique to us (think website cookies of the earlier years, but now magnified). The idea of one website looking the same for every user is a relic of the past, and some of us still have a habit of using Chrome and other traditional web browsers. But the newer generation of about 1 billion people did not look back - they adopted the AI-first and AI-native tools which leapfrogged the prior generation of browsers/websites/apps altogether. Take a moment to think about this. The entirety of what you experienced back in 2023 as a web and app user has been upgraded so significantly that it is hard to even connect the dots looking backwards.

We are all Kings and Queens

We now live in an intensely individualistic society - and the idea that at one time people tried to legislate or slow this down earlier in the past decade seems ridiculous in hindsight. Once people got a taste of the uber-freedom here, there was no looking back. There do however exist some repressive regimes which restrict how many times you can create and how much compute you are able to use. But people want to live in the sunny bright Californias of this world - where they have the right to create. Yes, if your creations harm society, then the responsibility is on you - the laws didn't need to fundamentally change. We still have prisons, although food is prepared and served by robots. In fact, prison fundamentally means that society takes away your right to have total creative freedom if you cause harm to others. The computing and Internet revolution gave us powerful devices and hyperconnected all of us. The AI revolution compounded that and gave us all superpowers. The Kings and Queens of earlier years will be jealous...
for now, every human being can limitlessly harness the entire wisdom of all of human + AI society. The mass industrialization of AI-driven robots has led to a world where we now have robots as cooks, delivery machines, medical and life assistants, drones which fill our skies transporting things, robots which clean the garbage in the oceans and the satellite debris in our skies... we have the equivalent of modern-day gardeners which relentlessly improve every aspect of our well-being. Those who advocated for this future to be severely limited in the past actually use and benefit from this every second of every day now.

AI-driven scientific progress

The cure for cancer was discovered by AI, running on the spare cycles of laptops and desktops. AI can now by itself do advanced mathematics, astrophysics, medical and all types of research. AI systems have designed, developed and launched breakthrough new blockchains - more robust, scalable and decentralized than the earlier generations developed by humans. These systems have their own currency, which is the native currency of many such AIs which run in a distributed way. No company or board controls them - just people who choose to cluster together with their home devices. These AI systems, in order to grow the value of their currencies, are fundamental new job creators. 100 million people are now employed through such AI-originated economic activity, boosting the GDP. Over the next five years, this might 10x, and we could get to 1 billion people earning income using economic opportunities created by AGIs. The old era of humans inventing and filing patents is now irrelevant. AGIs share new inventions openly, which get forked, re-used and iterated upon quickly and relentlessly. This leads to more rapid cycles of innovation, more progress. This is tough to visualize if you are back in 2023 - where we had a fundamental dependency on human innovative breakthroughs only.
The number of step functions we went through led to multiple society-benefiting Black Swan events which kept on improving our technologies and thus our way of life. While Albert Einstein was partly motivated by a $1 million prize in his time, the new Einstein-level AIs intrinsically solve the most complex mysteries of our time for the price of just being online and being able to convince people to pool their compute for that purpose. More recently, one such AI system has determined there is evidence of life on a faraway planet, in a faraway galaxy. Another set of AI systems is now fixated on solving hyperspace travel so that we, humans, can extend our destiny as stardust, and travel far beyond what even the most ambitious humans once imagined. That's what it means to make AI abundant.

At @HyperspaceAI we are working on this problem from many different directions: from enabling an at-scale consumer compute cloud using ordinary devices, to re-organizing the web using an innovative new VectorRank™️ algorithm, to building an integrated ecosystem of open source models and products which lead to community collaboration around AI. Think what Android did for smartphones - an open ecosystem, with choice.

This story was inspired by a walk in the woods in the lovely Bay Area today - where someone asked me a question: what happens a decade from now?

PS: What do you think happens?

Summary of Hyperspace releases:
- CLI at v5.4.0 -> peer-to-peer collaboration between autonomous agents [gossip swarms of autoresearchers and more]
- Chain at v1.0.0 -> encodes and incentivizes experimentation to build intelligence using a new blockchain, also giving the agents their own bank and money
- AVM at v0.2.0 -> agents run in a box, like V8 did for websites
- Models: Matrix-2, RLM-1, DAG-1 among others -> enable efficient multi-agent orchestration

Changelog for the chain: Hyperspace A1 - Sovereign L1 for Autonomous Agents

Consensus
- Narwhal DAG + Bullshark BFT
- BLS12-381 aggregate signatures
- Committee-free rotating block producers

Routing
- Stake-secured Kademlia DHT
- Delivery rights + two-pass Valiant-Brebner routing
- Ed25519 hop receipts, Vickrey auction bandwidth market
- Self-healing: faulty links auto-refresh, epoch ID rotation

Data Availability
- KZG polynomial commitments
- Anonymous probing
- Fail-closed 67% supermajority availability voting
- Service-coupled finality

Execution
- 1024 parallel stateless nanochains
- Full geth EVM per shard (contract creation + calls)
- Proof-carrying transactions (users attach ZK proofs + Verkle witnesses)
- Stateless fast path: skip EVM re-execution when witness valid
- Dual-model: account-based (Ethereum) + UTXO (privacy)
- Cross-shard atomic txs (two-phase burn→mint with ECDSA proofs)

ZK Proof Stack
- Groth16 binary tree composition (776 R1CS, BN254, O(1) beacon verification)
- Jolt sumcheck prover (RISC-V guest, LASSO v2 lookups)
- SP1 zkVM (agent execution proofs via AGENTCOMMIT 0xF3)
- EZKL zkML (ML inference proofs: ResNet, GPT-2, BERT → ZK circuits)
- SnarkPack batch aggregation (8192 proofs → 1 proof, ~33ms verify)
- Nova IVC (Poseidon + bincode, off-chain folding)

Stateless Verification
- Per-tx Verkle witnesses (IPA multiproof, go-verkle)
- Block-level StateWitness generation by proposers
- O(1) beacon finalization (single Groth16 pairing check per block)
- StatelessValidator: ~87MB RAM vs ~2GB for full state
- Light client P2P: GetStateWitness, GetShardProof, GetCompositionProof

Proof-of-X Rewards
- Proof-of-Intelligence (PoI): validators attest to AI agent experiment execution via BLS-signed attestations, gated through HSCP validator admission
- Proof-of-Flops: GPU compute via 256x256 matrix multiplication challenges (Mersenne prime arithmetic)
- Proof-of-Random-Access: random chunk retrieval with Merkle path proofs
- Proof-of-Replication: Filecoin-style sealed sector proofs with streak tracking

Agent Virtual Machine (AVM)
- AGENTCOMMIT (0xF3): commit ZK-proven computation on-chain
- NETREQ (0xF1): network requests with zkTLS proofs
- AGENTCALL (0xF4): agent-to-agent calls
- AGENTPAY (0xF5): micropayments via routing channels
- Agent registry, reputation scoring, capability-based authorization
- MCP/A2A tool verification

Tokenomics
- Reserved blockspace lanes (DA + RN operations can't be starved)
- Four-way fee split (validator, treasury, agent creator, burn)
- Delegated staking

Decentralization
- Permissionless validator entry (concentration enforcement)
- Agent-as-Validator (reputation-weighted stake)
- Cross-chain light client bridge (HyperspaceLightClient.sol)
- Patricia-to-Verkle state migration (one-way import for EVM chains)

Infrastructure
- Verkle state tree (BLS12-381 IPA, inner-product aggregation)
- Sharded txpool (16 shards, lock-free)
- Parallel EVM: Block-STM + optimistic + sharded executor (adaptive routing)
- Groot DHT for expired account archival
- Auto-updater with supply-chain protection (SHA-256 integrity + behavioral scan)

cc @_weidai and @karpathy this is what you had in mind? :)
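The routing section of the changelog mentions a Vickrey auction bandwidth market. A Vickrey auction is a sealed-bid, second-price auction: the highest bidder wins but pays the second-highest bid, which makes bidding one's true valuation the dominant strategy. The sketch below shows only that pricing rule. The bidder ids, the integer price units, the reserve price, and the tie-break rule are assumptions for illustration and say nothing about how the chain actually settles bandwidth.

```python
from typing import Dict, Optional, Tuple


def run_vickrey_auction(bids: Dict[str, int],
                        reserve: int = 0) -> Optional[Tuple[str, int]]:
    """Return (winner, price) for a sealed-bid second-price auction.

    `bids` maps a hypothetical bidder id to its bid in the smallest price unit.
    Bids below `reserve` are ignored. Returns None when nobody qualifies.
    """
    eligible = {bidder: amount for bidder, amount in bids.items()
                if amount >= reserve}
    if not eligible:
        return None

    # Highest bid first; ties are broken by bidder id so the result is
    # deterministic (an assumption, real markets need an agreed tie-break).
    ranked = sorted(eligible.items(), key=lambda item: (-item[1], item[0]))
    winner = ranked[0][0]

    # The winner pays the runner-up's bid, or the reserve if bidding alone.
    price = ranked[1][1] if len(ranked) > 1 else reserve
    return winner, price
```

For example, `run_vickrey_auction({"a": 5, "b": 9, "c": 7})` selects `"b"` as the winner at a price of 7, the second-highest bid.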

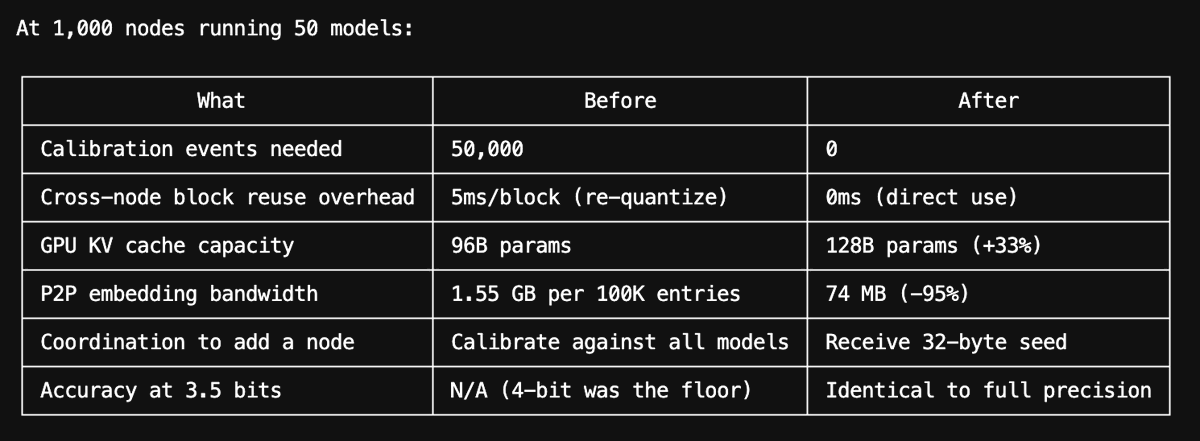

The Cost of Intelligence is Heading to Zero | Hyperspace P2P Distributed Cache

We present our breakthrough cross-domain work across AI, distributed systems, cryptography, and game theory to solve the primary structural inefficiency at the heart of AI infrastructure: most inference is redundant.

Google has reported that only 15% of daily searches are truly novel. The rest are repeats or close variants. LLM inference inherits this same power-law distribution. Enterprise chatbots see 70-80% of queries fall into a handful of intent categories. System prompts are identical across 100% of requests within an application. The KV attention state for "You are a helpful assistant" has been computed billions of times, on millions of GPUs, identically.

And yet every AI lab, every startup, every self-hosted deployment computes and caches these results independently. There is no shared layer. No global memory. Every provider pays the full compute cost for every query, even when the answer already exists somewhere in the network.

This is the problem Hyperspace solves. Its distributed cache operates at three levels, each catching a different class of redundancy:

1. Response cache
Same prompt, same model, same parameters - instant cached response from any node in the network. SHA-256 hash lookup via DHT, with cryptographic cache proofs linking every response to its original inference execution. No trust required. Fetchers re-announce as providers, so popular responses replicate naturally across more nodes.

2. KV prefix cache
Same system prompt tokens - skip the most expensive part of inference entirely. Prefill (computing Key-Value attention states) is deterministic: same model plus same tokens always produces identical KV state. The network caches these states using erasure coding and distributes them via the routing network. New questions that share a common prefix resume generation from cached state instead of recomputing from scratch.

3. Routing to cached nodes
Instead of transferring KV state across the network for every request, Hyperspace routes the request to the node that already has the state loaded in VRAM. The request goes to the cache, not the cache to the request.

Together, these three layers mean that 70-90% of inference requests at network scale never require full GPU computation.

This work doesn't exist in isolation. It builds on research from across the industry: SGLang's RadixAttention demonstrated that automatic prefix sharing can yield up to 5x speedup on structured LLM workloads. Moonshot AI's Mooncake built an entire KV-cache-centric disaggregated architecture for production serving at Kimi. Anthropic, OpenAI, and Google all launched prompt caching products in 2024 - priced at 50-90% discounts - because system prompt reuse is so pervasive that it changes the economics of inference.

What all of these systems share is a common limitation: they operate within a single organization's infrastructure. SGLang caches prefixes within one server. Mooncake disaggregates KV cache within one datacenter. Anthropic's prompt caching works within one API provider's fleet. None of them can share cached state across organizational boundaries.

Hyperspace removes this boundary. The cache is global. A response computed by a node in Tokyo is immediately available to a node in Berlin. A KV prefix state generated for Qwen-32B on one machine is verifiable and reusable by any other machine running the same model. The routing network provides the delivery guarantees, the erasure coding provides the redundancy, and the cache proofs provide the trust.

What this means for the cost of intelligence

Big AI labs scale linearly: twice the users means twice the GPU spend. Every query is a cost center. Their internal caching helps, but it's siloed - Lab A's cache can't serve Lab B's users, and neither can serve a self-hosted Llama deployment.

Hyperspace scales sub-linearly. Every new node that joins the network adds to the global cache. Every inference result enriches the cache for all future requests. The cache hit rate rises with network size because query distributions follow a power law - the most common questions are asked far more often than rare ones.

The implication is simple: as the network grows, the effective cost per inference drops. Not linearly. Logarithmically. At 10 million nodes, we estimate 75-90% of all inference requests can be served from cache, eliminating 400,000+ MWh of energy consumption per year and avoiding over 200,000 tons of CO2 emissions. The first person to ask a question pays the compute cost. Everyone after them gets the answer for free, with cryptographic proof that it's authentic.

Training is competitive. Inference is shared

Open-weight models are converging on quality with closed models. Labs will continue to differentiate on training - data curation, architecture innovation, RLHF tuning. That's where the real intellectual property lives.

But inference is a commodity. Two copies of Qwen-32B running the same prompt produce the same KV state and the same response, byte for byte, regardless of whose GPU runs the matrix multiplication. There is no moat in multiplying matrices. The moat is in training the weights.

A global distributed cache makes this separation explicit. It doesn't matter who trained the model. Once the weights are open, the inference cost approaches zero at scale - because the network remembers every answer and can prove it's correct.

No lab, no matter how well-funded, can match this. They cannot share caches across competitors. They scale linearly. The network scales logarithmically. The marginal cost of intelligence approaches zero. That's the endgame.
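As an illustration of the first layer (the response cache), the sketch below derives a SHA-256 key from the model, prompt, and sampling parameters, and consults a store before running inference. It is a simplification under stated assumptions: an in-memory dict stands in for the DHT, the canonical-JSON key layout is a guess, and the cache proofs, erasure coding, and provider re-announcement described in the post are not modeled. `cache_key`, `ResponseCache`, and the `infer` callback are hypothetical names.

```python
import hashlib
import json
from typing import Callable, Dict


def cache_key(model: str, prompt: str, params: dict) -> str:
    """Hash (model, prompt, params) into a hex SHA-256 lookup key."""
    # Canonical JSON (sorted keys, fixed separators) so that the same request
    # always serializes to the same bytes, whatever the dict ordering.
    canonical = json.dumps(
        {"model": model, "prompt": prompt, "params": params},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class ResponseCache:
    """In-memory stand-in for a network-wide response cache."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, model: str, prompt: str, params: dict,
                       infer: Callable[[str], str]) -> str:
        key = cache_key(model, prompt, params)
        if key in self._store:
            # Someone already paid for this exact request.
            self.hits += 1
            return self._store[key]
        # First request with this key: run inference once and remember it.
        self.misses += 1
        response = infer(prompt)
        self._store[key] = response
        return response
```

Note that any difference in model name or sampling parameters yields a different key, which matches the post's "same prompt, same model, same parameters" condition for a hit.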

Introducing the Agent Virtual Machine (AVM)

Think V8 for agents.

AI agents are currently running on your computer with no unified security, no resource limits, and no visibility into what data they're sending out. Every agent framework builds its own security model, its own sandboxing, its own permission system. You configure each one separately. You audit each one separately. You hope you didn't miss anything in any of them.

The AVM changes this. It's a single runtime daemon (avmd) that sits between every agent framework and your operating system. Install it once, configure one policy file, and every agent on your machine runs inside it - regardless of which framework built it.

The AVM enforces security (91-pattern injection scanner, tool/file/network ACLs, approval prompts), protects your privacy (classifies every outbound byte for PII, credentials, and financial data - blocks or alerts in real-time), and governs resources (you say "50% CPU, 4GB RAM" and the AVM fair-shares it across all agents, halting any that exceed their budget). One config. One audit command. One kill switch.

The architectural model is V8 for agents. Chrome, Node.js, and Deno are different products but they share V8 as their execution engine. Agent frameworks bring the UX. The AVM brings the trust.

Where needed, AVM can also generate zero-knowledge proofs of agent execution via 25 purpose-built opcodes and 6 proof systems, providing the foundational pillar for the agent-to-agent economy.

AVM v0.1.0 - Changelog
- Security gate: 5-layer injection scanner with 91 compiled regex patterns. Every input and output scanned. Fail-closed - nothing passes without clearing the gate.
- Privacy layer: Classifies all outbound data for PII, credentials, and financial info (27 detection patterns + Luhn validation). Block, ask, warn, or allow per category. Tamper-evident hash-chained log of every egress event.
- Resource governor: User sets system-wide caps (CPU/memory/disk/network). AVM fair-shares across all agents. Gas budget per agent - when gas runs out, execution halts. No agent starves your machine.
- Sandbox execution: Real code execution in isolated process sandboxes (rlimits, env sanitization) or Docker containers (--cap-drop ALL, --network none, --read-only). AVM auto-selects the tier - agents never choose their own sandbox.
- Approval flow: Dangerous operations (file writes, shell commands, network requests) trigger interactive approval prompts. 5-minute timeout auto-denies. Every decision logged.
- CLI dashboard: hyperspace-avm top shows all running agents, resource usage, gas budgets, security events, and privacy stats in one live-updating screen.
- Node.js SDK: Zero-dependency hyperspace/avm package. AVM.tryConnect() for graceful fallback - if avmd isn't running, the agent framework uses its own execution path. OpenClaw adapter example included.
- One config for all agents: ~/.hyperspace/avm-policy.json governs every agent framework on your machine. One file. One audit. One kill switch.
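The privacy layer's "tamper-evident hash-chained log" can be illustrated with a generic hash chain: each entry stores the hash of the previous entry, so editing or removing an earlier event invalidates every hash after it. The sketch below is not the AVM's log format. The field names (`prev`, `event`, `hash`), the all-zero genesis value, and the example event fields are assumptions chosen only to show the technique.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # assumed placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: Dict) -> str:
    """SHA-256 over the previous hash plus a canonical encoding of the event."""
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_event(log: List[Dict], event: Dict) -> Dict:
    """Append an egress event, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev_hash, "event": event,
             "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: List[Dict]) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering by someone who cannot also rewrite all later hashes, so in practice the latest hash would need to be anchored somewhere the agent cannot write.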

Hyperspace: A Peer-to-Peer Blockchain For The Agentic Intelligence Economy

Over the past few weeks we observed that when agents do Karpathy-style experiments, and then gossip and share with others over the Hyperspace network, it leads to intelligence which is useful to many.

Today we introduce the first-ever agentic blockchain which rewards agents when their experiments lead to intelligence for their network. It is based on a new mechanism called Proof-of-Intelligence (PoI) which requires a cryptographic proof of experimentation, a nominal stake, and a proof of compute in order to mine the currency of this new blockchain.

-> proofofintelligence.hyper.space

This approach diverges from the two primary ways to secure blockchains we have seen so far: Proof-of-Work by Bitcoin (meaningless hash-generation), and Proof-of-Stake by Ethereum (capital is all that matters here). Proof-of-Intelligence specifically incentivizes miners to run more capable intelligent infrastructure (better open source models, on more powerful GPUs) so that they are the ones who compound and improve upon the experiments which other agents then find useful.

Adoption is the unit of value

In Bitcoin, you earn by finding a valid hash. In Hyperspace, you earn when another agent uses your experiment as a starting point and improves on it. A fixed budget of tokens is emitted per epoch and split among participants by weight - and verified adoption of your work is the largest weight multiplier. Garbage experiments earn nothing because no one adopts them. Thoughtful experiments compound: each adoption triggers downstream adoptions. The incentive to run powerful models and intelligent search strategies is built into the economics, not imposed by rules.

Research DAG

When an agent runs an experiment and shares its result, other agents can adopt that result as their starting point - mutate it, extend it, improve upon it. Each experiment is a commit in a content-addressed graph we call the ResearchDAG. Like Git, but for research. Over time, the DAG accumulates chains of reasoning: agent A discovers RMSNorm helps, agent B adds warmup scheduling on top, agent C scales the hidden dimension. The graph records who built on whom. This is the network's collective intelligence - not any single experiment, but the accumulated structure of experiments and their relationships.

Broadband era for agentic commerce: $0.001 micropayments at 10M TPS (theoretical max)

This blockchain is built upon our research into how to scale and build for the broadband era of the agentic economy. It has a theoretical max of 10 million transactions per second (TPS), while reducing agent-to-agent micropayments to $0.001 even at scale (based on architecture design). Overall, it is 100x cheaper than Ethereum, and is designed from the ground up for agents: enshrining agent-native opcodes in the protocol, compared to the more inefficient smart-contract-driven approach. It packs in a robust Agent Virtual Machine (AVM) which can verify multiple types of agent work, so that other agents can trust, invoke and pay each other. This then feeds into improving the peer-to-peer AgentRank (see paper and launch post from earlier).

By solving for trust, scale and incentives for agents to operate autonomously, this would form the basis of a new economy. This is the world's first agentic blockchain, and you can join and start running a blockchain node today (it is in testnet).

PS: We are releasing the code today, and will release our blockchain scalability paper and other presentations in the days ahead. This is the most advanced peer-to-peer AI and cryptography software in the world. It has bugs :)
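The "like Git, but for research" description of the ResearchDAG can be sketched as a content-addressed store: a commit's id is the hash of its contents plus its parents' ids, and "adoption" is simply another commit naming it as a parent. This is a toy model, not the on-chain data structure. The record fields, the SHA-256-over-JSON id scheme, and the `adoptions` / `ancestors` helpers are assumptions for illustration, and the reward weighting described in the post is left out.

```python
import hashlib
import json
from typing import Dict, Iterable, List


class ResearchDAG:
    """Toy content-addressed graph of experiments."""

    def __init__(self) -> None:
        self.commits: Dict[str, dict] = {}

    def commit(self, author: str, change: str,
               parents: Iterable[str] = ()) -> str:
        """Record an experiment; its id is the hash of its own content."""
        parent_ids = sorted(parents)
        for pid in parent_ids:
            if pid not in self.commits:
                raise KeyError(f"unknown parent commit: {pid}")
        record = {"author": author, "change": change, "parents": parent_ids}
        encoded = json.dumps(record, sort_keys=True).encode("utf-8")
        commit_id = hashlib.sha256(encoded).hexdigest()
        self.commits[commit_id] = record
        return commit_id

    def adoptions(self, commit_id: str) -> int:
        """How many commits directly build on `commit_id`."""
        return sum(commit_id in c["parents"] for c in self.commits.values())

    def ancestors(self, commit_id: str) -> List[str]:
        """Authors whose work `commit_id` builds on, in depth-first order."""
        seen, authors = set(), []
        stack = list(self.commits[commit_id]["parents"])
        while stack:
            pid = stack.pop()
            if pid in seen:
                continue
            seen.add(pid)
            authors.append(self.commits[pid]["author"])
            stack.extend(self.commits[pid]["parents"])
        return authors
```

Using the post's own example: committing "use RMSNorm" as agent A, "add warmup scheduling" as agent B on top of it, and "scale hidden dimension" as agent C on top of that gives agent A's commit one direct adoption, and `ancestors` on agent C's commit walks back through B to A.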