Eric Hedlin

134 posts

@IAmEricHedlin

Multimodal researcher at Qualcomm. Two-time World Championships medalist in open water swimming

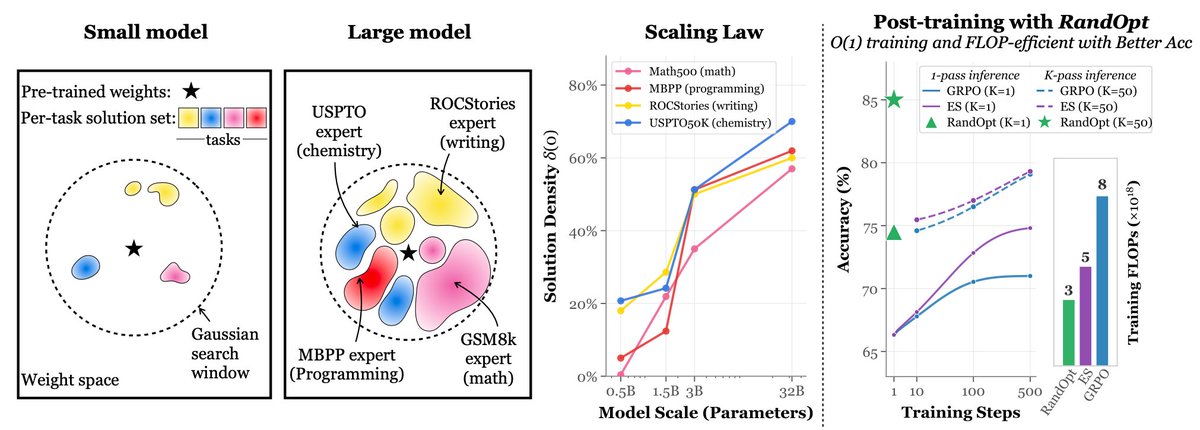

We are releasing a paper I'm very excited about. We know that test-time scaling is a path to greatly improved results and, in the case of LLMs, enables reasoning. We present a new and promising way to amortize it into training, using hypernetworks for image generation models.

We present Hypernetwork Fields. We estimate the entire convergence trajectory for hypernetworks by introducing an extra variable representing the state of convergence. We show results for our model estimating DreamBooth parameters. 1/N🧵
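A rough illustration of the idea in the post above, not the paper's implementation: a hypernetwork normally maps a conditioning input to one final set of target-model weights, whereas here an extra scalar `t` (the state of convergence) is also fed in, so a single network can be queried anywhere along the optimization trajectory. Everything in this sketch is a hypothetical stand-in: the names `init_field` and `predict_params`, the tiny untrained two-layer MLP, the dimensions, and the convention that `t` runs from 0.0 (start) to 1.0 (converged).

```python
import math
import random


def init_field(seed, cond_dim, hidden_dim, n_target_params):
    """Random (untrained) weights for a toy MLP: (condition, t) -> target parameters."""
    rng = random.Random(seed)

    def mat(rows, cols):
        return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

    # The +1 input row is the extra convergence-state variable t.
    return {"W1": mat(cond_dim + 1, hidden_dim), "W2": mat(hidden_dim, n_target_params)}


def predict_params(field, cond, t):
    """Predict target-model parameters for condition `cond` at convergence state `t`."""
    x = list(cond) + [t]
    n_hidden = len(field["W1"][0])
    n_out = len(field["W2"][0])
    hidden = [
        math.tanh(sum(xi * row[j] for xi, row in zip(x, field["W1"])))
        for j in range(n_hidden)
    ]
    return [sum(hj * row[k] for hj, row in zip(hidden, field["W2"])) for k in range(n_out)]


field = init_field(seed=0, cond_dim=8, hidden_dim=16, n_target_params=4)
cond = [0.5] * 8  # stand-in for an embedding of the conditioning input

# Query the same network at several convergence states to read off a trajectory.
trajectory = [predict_params(field, cond, t) for t in (0.0, 0.25, 0.5, 0.75, 1.0)]
```

Each entry of `trajectory` is one predicted parameter vector, so varying only `t` traces a path through weight space for a fixed condition. How such a network is trained is not covered by this sketch.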
