ICPSquad


@ICPSquad

The #1 Source Of Internet Computer Intel, News & Resources That Will Take You From $ICP Newbie To Kingpin So You Can Start Stacking $ICP Like Pancakes

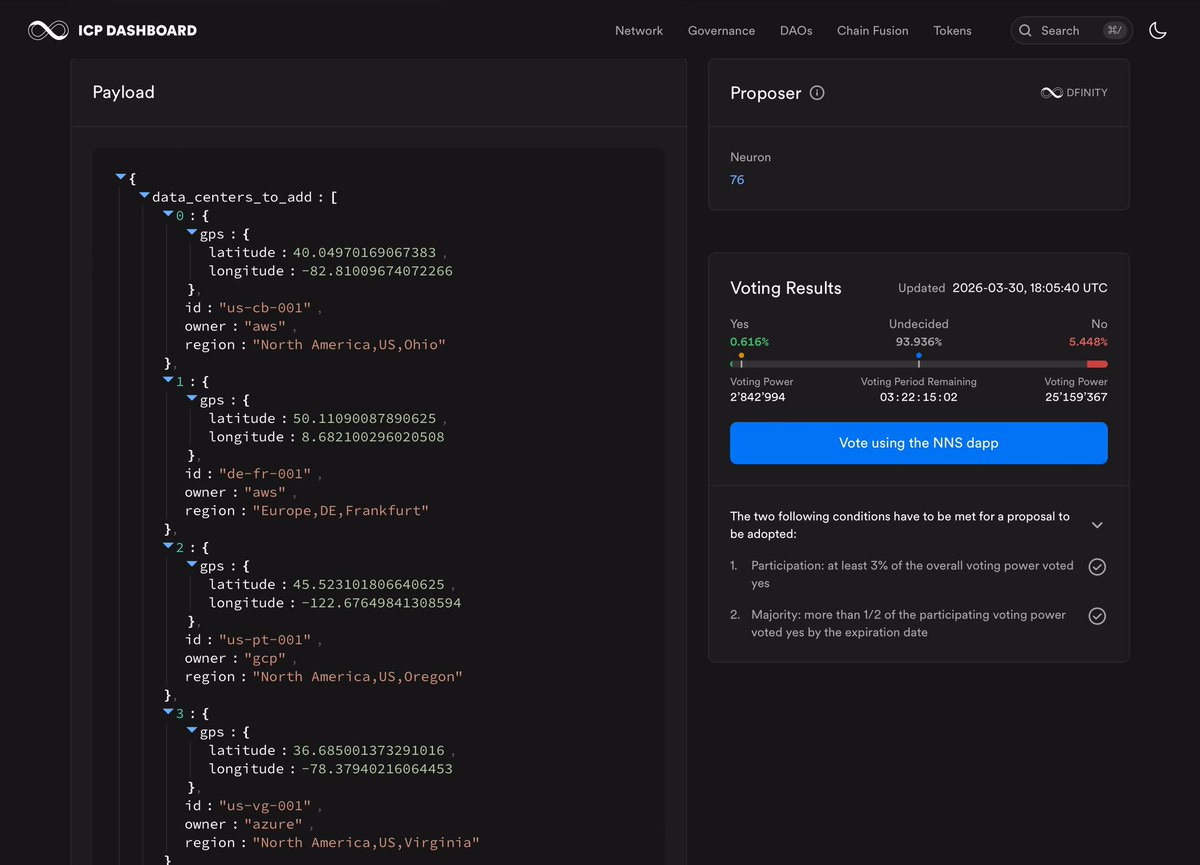

Proposal 140924 has been rejected, and now we’re dealing with the consequences. I’ve mentioned this multiple times: we need a way to protect the NNS from spam and advertising abuse. If the argument is “this is democracy and they lose 25 $ICP,” just keep in mind that 25 ICP (~$60) is nothing for someone holding millions. It’s basically a cheap way to advertise to thousands of people. I’m not saying we should go against democratic processes. I’m saying we should make it harder for bad actors. If someone wants to use the NNS to push their narrative, fine, but at least make them pay a meaningful amount, not pocket change.

Urm, this is very misleading: "Zero... provides a credible alternative to centralized cloud providers like AWS"
At a high level, all you need to know is that Zero works by proving hosted computation is correct. The proving overhead makes computation 100,000X more expensive.
Zero uses the Jolt zkVM to run computations and generate proofs that computation has been performed correctly. The 100,000X cost multiplier number comes from the Jolt project itself, as per this linked content from the fall of 2025: a16zcrypto.com/posts/article/…
Factually-detached as marketing in our industry typically is, I feel duty bound to share the truth because harmful market confusion has now been caused by a succession of networks marketing themselves as "world computers" capable of providing onchain cloud, when they can't remotely do anything of the sort.
Hosting computation and apps fully onchain on the Internet Computer network, by comparison, doesn't involve an insane overhead, which is why the network is actually being used for sovereign cloud, and as a self-writing cloud backend by Caffeine. We don't want people to get confused.
LayerZero naturally fails to mention the 100,000X expense overhead and instead bamboozles readers with descriptions of its "QMDB" verifiable database, and lofty claims that Zero could potentially process 2 million TPS (transactions per second). Team posts generally also include some idealist blockchain polemic for good measure, to emphasize they are the real deal.
But just focus on the 100,000X cost multiplier. The claim that Zero can provide onchain cloud that rivals AWS doesn't pass the smell test!
That doesn't mean the Jolt zkVM ("zero knowledge virtual machine"), developed by a16z Research, is anything less than very impressive. It delivers major advances in the field and can be accurately described as an incredible piece of work. I can even imagine the Internet Computer network using it for much more specific purposes in the future.
But, "The Network is The Cloud" paradigm cannot remotely be delivered by Zero so long as it relies on Jolt to prove the correctness of compute, which adds this insane overhead (Jolt runs the compute at near native speed, and it's the generation of the proof that creates the massive overhead)...
Example 1: If a database command takes 1 second to run on some machine, then if that machine runs the computation using Jolt, it will take longer than a whole day for the computation to complete!
Example 2: If a single server machine is running at full utilization, then to run that workload on Jolt, we must offload its proving work to other server machines. Since dividing proving work amongst different servers introduces additional overhead, around 125,000X as many additional server machines will be required to prove the computation taking place. You read that correctly!
LayerZero claims the Zero L1 can process 2 million TPS of general-purpose cloud logic. If we assume a standard server/node handles 2,000 TPS of complex SQLite logic (for example, SQLite can be embedded inside a Wasm canister smart contract on the Internet Computer), LayerZero would need 1,000 servers just for execution. But to provide the Jolt proofs they promise, they would need an additional 125,000,000 servers (125 million servers) running at full capacity just to keep up. This would require an unimaginably large data center to be available for proving (one orders of magnitude larger than has ever been created before) and somehow the network would have to pay for that.
LayerZero is aware of these issues, and so let's look at how they hope to get around them, and the sacrifices Zero makes, which shed light on the validity of its decentralization claims.
LayerZero hopes to leverage two technical angles to make this scheme practical. Firstly, zkVMs like Jolt allow computation and proving to be separated.
Essentially, the computation runs on Jolt first at near native speed, producing an "execution trace" as a side effect, then that trace is used to generate the proof in a separate process that can run afterwards (it is this proof creation that adds the 100,000X computational cost overhead). Secondly, the job of creating the proof from the trace can be divided amongst different machines ("sharded"), to speed up proof generation. For example, dividing the work of proof generation among 10 machines might produce a 9X speedup (rather than a 10X speedup, owing to the overhead of sharding). Note that although a speedup is achieved, the overall cost multiplier increases beyond 100,000X owing to the overhead involved with sharding/parallelization.
Zero is composed of multiple "atomicity zones," which scale Zero's capacity horizontally. An atomicity zone is roughly analogous to an Internet Computer subnet. Each atomicity zone reaches "soft finality" first, which occurs when the computation completes. Then "hard finality" is achieved later, when the generation of the proof of correctness is complete.
Let's assume that proving is offloaded to some other capacity within the network, so computation can proceed at near native speed, delivering soft finality fast, while the proving catches up in the background, providing hard finality later.
Firstly, we can see that while computation can proceed ahead of proving in bursts, proving must generally keep pace with computation, otherwise it will fall further and further behind. Ultimately, that means that computation is like a high-speed car that can only drive as fast as a person in the back can draw a map of where it's going.
Secondly, we see that Zero is probably relying on the idea that people will be happy with soft finality. This is why their SVID (Scalable Verifiable Information Dispersal) module essentially shares *claims* about the state of atomicity zones across the network, rather than proven state created by hard finality.
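As an aside, the sharding and catch-up arithmetic described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function names are hypothetical, the 10-machine / 9X figures are the post's own example, and the 90% proving rate is an invented number used purely to show how a backlog grows.

```python
def sharded_cost_multiplier(base_overhead: float, machines: int, speedup: float) -> float:
    """Total proving cost relative to native execution when proving is sharded.

    Each of `machines` provers is busy for base_overhead / speedup units of
    time, so the total machine-time is base_overhead * machines / speedup.
    """
    return base_overhead * machines / speedup


def proving_backlog(seconds: float, compute_rate: float, proving_rate: float) -> float:
    """Native compute-seconds executed but not yet proven after `seconds`.

    Rates are in native compute-seconds handled per wall-clock second.
    """
    return max(0.0, (compute_rate - proving_rate) * seconds)


if __name__ == "__main__":
    # The post's example: 10 machines give a 9X speedup on a 100,000X base overhead.
    print(sharded_cost_multiplier(100_000, machines=10, speedup=9))
    # Assumed rates (not from the post): provers keep up with 90% of execution.
    print(proving_backlog(3600, compute_rate=1.0, proving_rate=0.9))
```

With the post's numbers the multiplier rises from 100,000X to roughly 111,000X, and under the assumed rates the unproven backlog grows without bound for as long as proving runs slower than execution.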
Thirdly, we can see that Zero's idea about the network relying on sharing data for which only soft finality has been achieved is flawed. Why? Because different atomicity zones will wish to rely on the state/data of others in their own computations. This results in an easy-to-understand phenomenon called "fan out."
Let's say Zone A shares soft finality data with Zone B, which performs some actions, updating its own data, which is shared with Zone C. If Zone A cannot later produce a proof to show that its data was correct at the time it was shared with B, and it reached hard finality, then Zone A must roll back to the previous valid hard finality state, which in turn means that Zone B must roll back, and Zone C must roll back. In a global onchain cloud environment, one app can call another app, that can call another app, ad infinitum. This "fan out" makes reverting state near impossible, especially if proving falls well behind computation.
According to the "Zero: Technical Positioning Paper," if a proof fails, the system will use on-chain governance (directed by voting by ZRO holders/validators) to manually "adjust protocol parameters" or "upgrade validator software" to fix the problem. Obviously, if this ever became necessary, implementing the fix will not be so easy, and will take the network down for a very long time indeed.
So what is LayerZero thinking? My guess is that they assume by partnering with trusted centralized parties like Google and Citadel, and getting them to run atomicity zones, their soft finality will be reliable enough that state reversions won't ever be necessary.
We saw something similar in Optimism, the so-called Ethereum L2, which was run on a tightly-controlled network of machines. It was possible to submit a fraud proof to the network showing that something had gone wrong, but there was no way to revert the state in such a case. It really was truly optimistic!
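The "fan out" problem can be sketched as a graph traversal. This is a toy model and not Zero's actual protocol: the `consumers` map and the function name are hypothetical, and it assumes we know exactly which zones consumed which other zones' soft-final state.

```python
from collections import deque


def zones_to_roll_back(consumers: dict[str, list[str]], failed: str) -> list[str]:
    """Return every zone that must revert if `failed` cannot prove its state.

    `consumers[z]` lists the zones that consumed z's soft-final data.
    The traversal is breadth-first, starting from the failed zone.
    """
    seen = {failed}
    order = [failed]
    queue = deque([failed])
    while queue:
        zone = queue.popleft()
        for dependent in consumers.get(zone, []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order


if __name__ == "__main__":
    # The post's example: A shares with B, and B shares with C.
    consumers = {"A": ["B"], "B": ["C"], "C": []}
    print(zones_to_roll_back(consumers, "A"))  # ['A', 'B', 'C']
```

The longer proving lags behind execution, the more edges accumulate in this graph before a failure is detected, so the set of zones to revert keeps growing.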
If this understanding is correct, it means Zero is really a network depending on trust in institutions, rather than the math of zkVM proving per se. Since the network will be relying on soft finality and trust in institutions, rather than the hard finality provided by proving, it's not clear what exactly the benefit of using Jolt is. If the real purpose is to attach Zero to the buzz around zero knowledge proofs, then potentially this will become one of the most expensive marketing exercises in history.
But, you say, this SURELY cannot be true! Well, this is where I landed looking at how they claim Zero works, although my time is limited, and I could have made mistakes. I hope they let me know if I have.
My guess is that LayerZero justifies the current design of Zero on the basis that hardware cavalry is coming to make their architecture work better in the future. Specialized zk hardware, such as ZK-ASIC or FPGA devices that can be installed into servers, much like the Bitcoin mining cards that do hashing, are in development by companies like Ingonyama, and might reduce the proving overhead to 1,000X to 5,000X if their claims are accurate.
Obviously, that kind of overhead will still be far too high for Zero to provide onchain cloud that can rival AWS, but, if it enables them to ditch soft finality, removing the impossible-to-satisfy requirement that the network can run global state reversions across atomicity zones, Zero will be interesting as a solution for hosting DeFi. I wish them well.
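For anyone who wants to check the numbers in this post, here is a rough back-of-envelope sketch. The function names are hypothetical; the inputs (2 million TPS, 2,000 TPS per server, 100,000X and 125,000X overheads, and the 1,000X to 5,000X hardware estimates) are the figures quoted above, and applying the hardware estimates directly as a server multiplier ignores any sharding overhead, which is a simplifying assumption.

```python
import math


def execution_servers(target_tps: int, tps_per_server: int) -> int:
    """Servers needed just to execute the workload at native speed."""
    return math.ceil(target_tps / tps_per_server)


def proving_servers(exec_servers: int, overhead: int) -> int:
    """Extra servers needed so proving keeps pace with execution."""
    return exec_servers * overhead


def proving_hours(native_seconds: float, overhead: float) -> float:
    """Wall-clock hours to prove a job on one machine, given the overhead."""
    return native_seconds * overhead / 3600


if __name__ == "__main__":
    # Example 1: a 1-second command at 100,000X overhead takes over a day.
    print(f"{proving_hours(1, 100_000):.1f} hours")

    # Example 2 at scale, then the same workload with the hoped-for hardware.
    servers = execution_servers(2_000_000, 2_000)
    for overhead in (125_000, 5_000, 1_000):
        print(overhead, proving_servers(servers, overhead))
```

Even at the optimistic 1,000X end, the sketch gives a million proving servers for the claimed 2 million TPS.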

Have been following reactions to what I said about L2s about 1.5 days ago.
One important thing that I believe is: "make yet another EVM chain and add an optimistic bridge to Ethereum with a 1 week delay" is to infra what forking Compound is to governance - something we've done far too much for far too long, because we got comfortable, and which has sapped our imagination and put us in a dead end.
If you make an EVM chain *without* an optimistic bridge to Ethereum (aka an alt L1), that's even worse. We don't friggin need more copypasta EVM chains, and we definitely don't need even more L1s. L1 is scaling and is going to bring lots of EVM blockspace - not infinite (AIs in particular will need both more blockspace and lower latency than even a greatly scaled L1 can offer), but lots. Build something that brings something new to the table. I gave a few examples: privacy, app-specific efficiency, ultra-low latency, but my list is surely very incomplete.
A second important thing that I believe is: regarding "connection to Ethereum", vibes need to match substance.
I personally am a fan of many of the things that can be called "app chains". For example I think there's a large chance that the optimal architecture for prediction markets is something like: the market gets issued and resolved on L1, user accounts are on L1, but trading happens on some based rollup or other L2-like system, where the execution reads the L1 to verify signatures and markets. I like architectures where deep connection to L1 is first-class, and not an afterthought ("we're pretty much a separate chain, but oh yeah, we have a bridge, and ok fine let's put 1-2 devs to get it to stage 1 so the l2beat people will put a green checkmark on it so vitalik likes us").
The other extreme of "app chain", e.g. the version where you convince some government registry, or social media platform, or gaming thing, to start putting merkle roots of its database, with STARKs that prove every update was authorized and signed and executed according to a pre-committed algorithm, onchain, is also reasonable - this is what makes the most sense to me in terms of "institutional L2s". It's obviously not Ethereum, not credibly neutral and not trustless - the operator can always just choose to say "we're switching to a different version with different rules now". But it would enable verifiable algorithmic transparency, a property that many of us would love to see in government, social media algorithms or wherever else, and it may enable economic activity that would otherwise not be possible.
I think if you're the first thing, it's valid and great to call yourself an Ethereum application - it can't survive without Ethereum even technologically, it maximizes interoperability and composability with other Ethereum applications. If you're the second thing, then you're not Ethereum, but you are (i) bringing humanity more algorithmic transparency and trust minimization, so you're pursuing a similar vision, and (ii) depending on details probably synergistic with Ethereum. So you should just say those things directly!
Basically:
1. Do something that brings something actually new to the table.
2. Vibes should match substance - the degree of connection to Ethereum in your public image should reflect the degree of connection to Ethereum that your thing has in reality.
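To make "putting merkle roots of its database onchain" concrete, here is a minimal sketch of computing such a root over a list of records. It is only an illustration: the function names and sample records are hypothetical, the tree construction (SHA-256, leaf/node prefixes, duplicating the last node on odd levels) is one simple choice among many, and the STARK that would prove each update was authorized is not shown at all.

```python
import hashlib


def _sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(records: list[bytes]) -> bytes:
    """Return a 32-byte commitment to an ordered list of records.

    Leaves and internal nodes get different prefixes so a leaf can never be
    mistaken for an internal node. Odd levels duplicate their last node,
    which is a simplification a production design would likely avoid.
    """
    if not records:
        return _sha256(b"")
    level = [_sha256(b"\x00" + record) for record in records]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [
            _sha256(b"\x01" + level[i] + level[i + 1])
            for i in range(0, len(level), 2)
        ]
    return level[0]


if __name__ == "__main__":
    # Hypothetical registry rows; changing any row changes the published root.
    rows = [b"parcel-1:alice", b"parcel-2:bob", b"parcel-3:carol"]
    print(merkle_root(rows).hex())
```

The operator would publish only the 32-byte root after each update, and anyone holding the data can recompute it and compare.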