Pinned Tweet

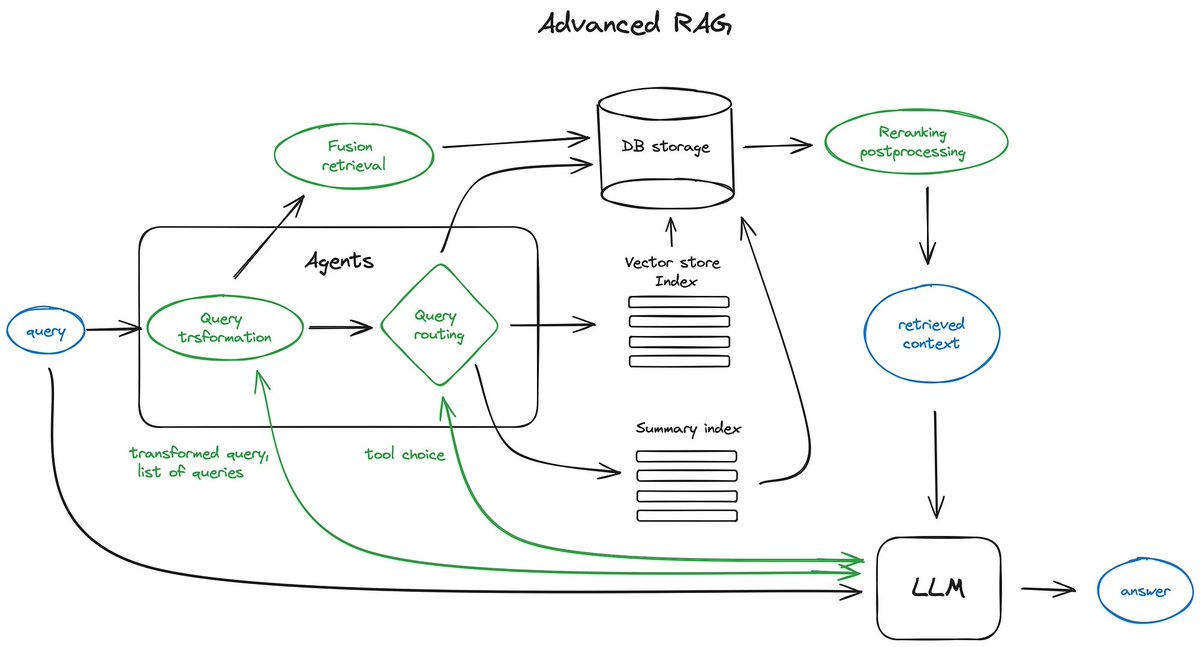

In this post, we show our AWS solution architecture, which features a network digital twin built with graphs and AI agents. #strandsagents #AI #AWS #agenticAI

linkedin.com/posts/imen-gri…

Imen Grida Ben Yahia

1K posts

@Imengby

passionate, curious, mission-driven | PhD | Principal AI/ML specialist @aws | ex-Orange | Public speaker | researcher | #networks #networkdata #ML #DL #MLOPS

What do you want to learn in 2024?