Pinned Tweet

What many people miss about @MarinadeFinance

It doesn’t chase validators.

It actively distributes stake to strengthen Solana’s decentralization.

That’s real protocol responsibility.

Stake with marinade.finance

Paul

12.6K posts

@Importerpaul

I talk money, mindset & real life. ■ Web3 content creator ■ Guardian @solflare ■ Building on @solana ■ Master chef @MarinadeFinance

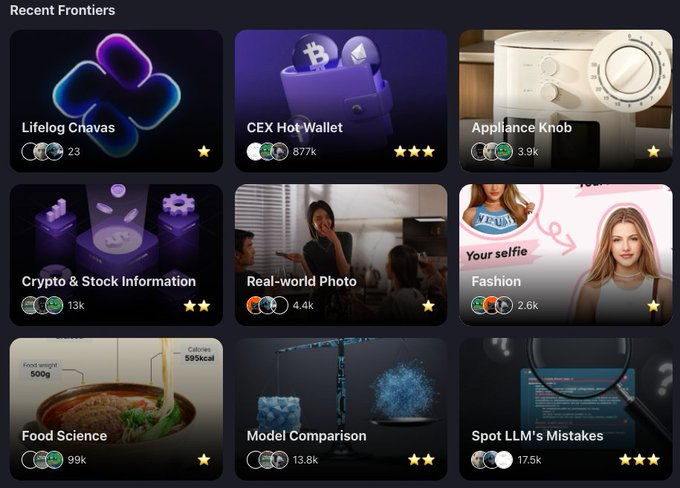

Robots are learning to work in the real world, and they need real human footage to do it. Help train the next generation of home-assist robots by recording first-person POV videos of yourself performing everyday household tasks.

3 scenes to choose from:
- Kitchen Cooking
- Household Tidying
- Home Cleaning

Every valid 10-minute clip earns 100 Points + 0.5 USDT.