Infini-AI-Lab


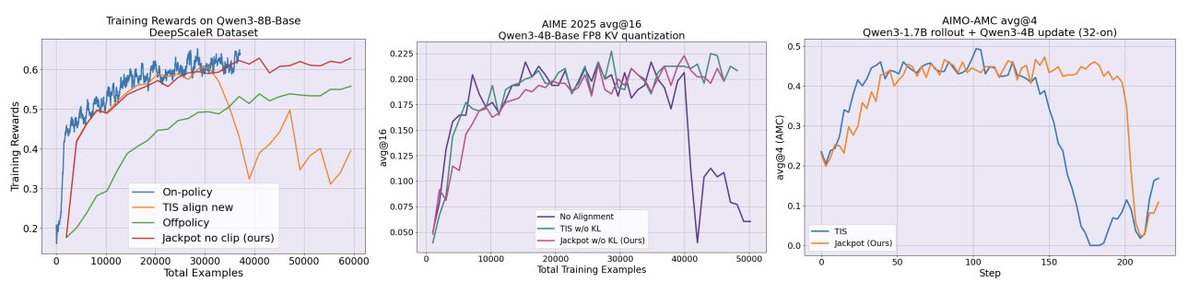

RL is notoriously unstable under actor–policy mismatch 😥, a common reality caused by kernel differences, MoE randomness, FP8 rollouts, or asynchronous pipelines. But here’s a crazy thought 🤔 👉 What if you could RL-train a large model using rollouts generated only by a weaker, faster, and completely different model? Sounds doomed from the start? 💩 We are releasing Jackpot 🎰 💡, which enables training Qwen3-8B-Base using only rollouts generated by Qwen3-1.7B-Base ✨

Jackpot is surprisingly powerful:
• Enables cheap, fast rollouts to train stronger models
• Dramatically changes the cost–performance tradeoff of RL training

Jackpot 🎰 is available in the following formats:
🌔Paper: arxiv.org/abs/2602.06107
🌕Code: github.com/Infini-AI-Lab/…
🌖Blog: infini-ai-lab.github.io/jpt_website/
[1/n]
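The post does not spell out how Jackpot handles the mismatch. For readers new to the problem, here is a minimal sketch of the generic textbook correction, truncated importance sampling, assuming you have per-token log-probabilities of the sampled tokens under both the model being trained and the model that generated the rollouts. This is not Jackpot's method; the function names and the `cap` value are hypothetical and chosen only for illustration.

```python
import math

def truncated_is_weights(logp_trainer, logp_rollout, cap=2.0):
    """Per-token importance weights pi_trainer / pi_rollout, truncated at `cap`.

    logp_trainer: log-probs of the sampled tokens under the model being trained
    logp_rollout: log-probs of the same tokens under the model that generated them
    cap: hypothetical truncation threshold (illustrative value only)
    """
    weights = []
    for lt, lr in zip(logp_trainer, logp_rollout):
        ratio = math.exp(lt - lr)        # how much more likely the trainer finds this token
        weights.append(min(ratio, cap))  # truncation bounds variance from over-weighted tokens
    return weights

def weighted_pg_loss(logp_trainer, logp_rollout, advantages, cap=2.0):
    """REINFORCE-style surrogate loss averaged over tokens.

    The weights are plain floats here, i.e. treated as constants; in an
    autodiff framework they would be detached so no gradient flows through them.
    """
    w = truncated_is_weights(logp_trainer, logp_rollout, cap)
    n = len(advantages)
    return -sum(wi * a * lt for wi, a, lt in zip(w, advantages, logp_trainer)) / n
```

For example, `truncated_is_weights([-1.0, -0.5], [-1.0, -2.0])` gives `[1.0, 2.0]`: the first token is equally likely under both models, while the second has a raw ratio of about 4.48 and is truncated to the cap. Truncation trades a little bias for much lower variance when the two models disagree sharply, which is exactly the regime a small rollout model training a larger one would hit.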

🤔 Can we run RL on LLMs with extremely stale data? 🚀 Our latest study says YES! Stale data can be as informative as on-policy data, unlocking more scalable and efficient asynchronous RL for LLMs. We introduce M2PO, an off-policy RL algorithm that keeps training stable and performant even when the data is stale by 256 model updates.
🔗 Notion Blog: m2po.notion.site/rl-stale-m2po
📄 Paper: arxiv.org/abs/2510.01161
💻 GitHub: github.com/Infini-AI-Lab/…
🧵 1/4
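To make "stale by N model updates" concrete, here is a minimal sketch of one generic way to quantify how far stale rollouts have drifted from the current policy: the mean squared per-token log importance ratio. This is an illustrative diagnostic we chose for the example, not a description of M2PO's algorithm (see the paper for that); the function name is hypothetical, and it assumes per-token log-probabilities of the sampled tokens under both the current and the stale policy.

```python
def log_ratio_second_moment(logp_current, logp_stale):
    """Mean of (log pi_current - log pi_stale)^2 over the sampled tokens.

    Zero when the two policies agree on every sampled token; grows as the
    stale (behavior) policy drifts away from the current one.
    """
    if len(logp_current) != len(logp_stale) or not logp_current:
        raise ValueError("need two equal-length, non-empty lists of log-probs")
    squared = [(c - s) ** 2 for c, s in zip(logp_current, logp_stale)]
    return sum(squared) / len(squared)
```

For example, `log_ratio_second_moment([-1.0, -2.0], [-1.0, -1.0])` returns `0.5`: one token is unchanged and the other differs by one nat. Squaring the log ratio makes the statistic symmetric in over- and under-weighting and keeps it finite even when the raw probability ratio is very large.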