Fondation Intelligence

@IntelligenceTV

Fondation Intelligence / Intelligence Foundation

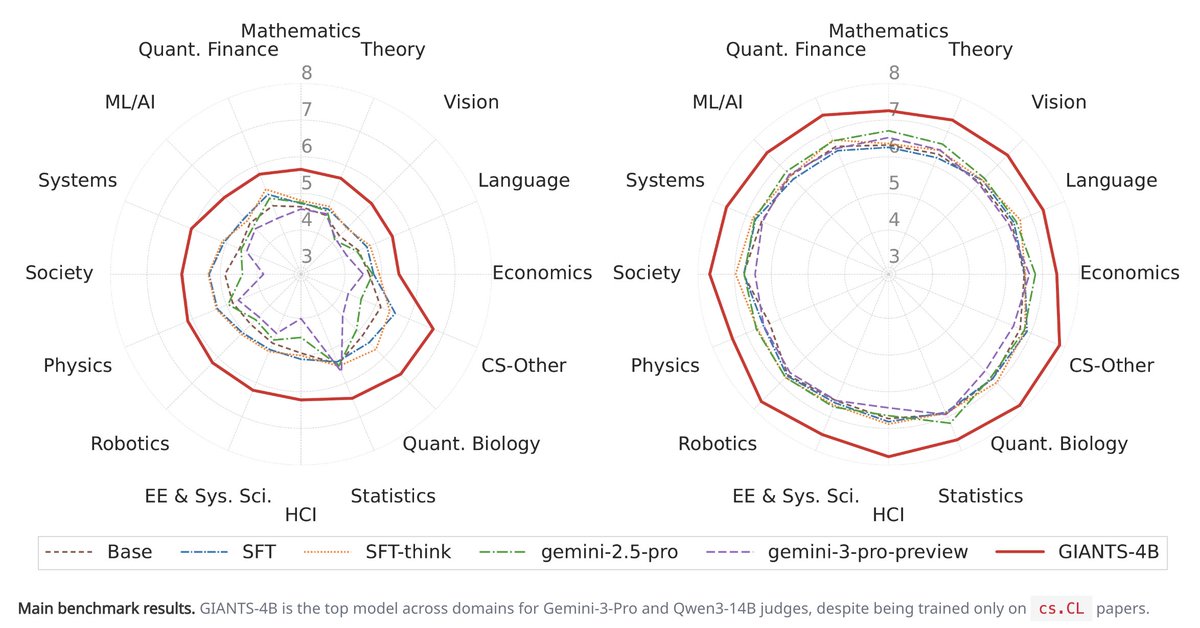

Scientists often make breakthroughs by synthesizing ideas across papers. In our new paper, we ask whether a language model can anticipate this process: given two parent papers, can it generate the core insight of a future paper built on them? 🧵⬇️

Had the pleasure of watching "The Thinking Game" over the weekend. On one level, it is about @demishassabis and the path of AI over the last 20 years, especially around solving the protein folding problem. On another level, it is about the act of multi-disciplinary science: experimentation, running into walls, and persistence. Recommended watch.

I’m starting to get into the habit of reading everything (blogs, articles, book chapters, …) with LLMs. Usually pass 1 is manual, then pass 2 is “explain/summarize”, and pass 3 is Q&A. I usually end up with a better/deeper understanding than if I had just moved on. It is growing to be among my top use cases. On the flip side, if you’re a writer trying to explain/communicate something, we may increasingly see less of a mindset of “I’m writing this for another human” and more of “I’m writing this for an LLM”, because once an LLM “gets it”, it can then target, personalize and serve the idea to its user.