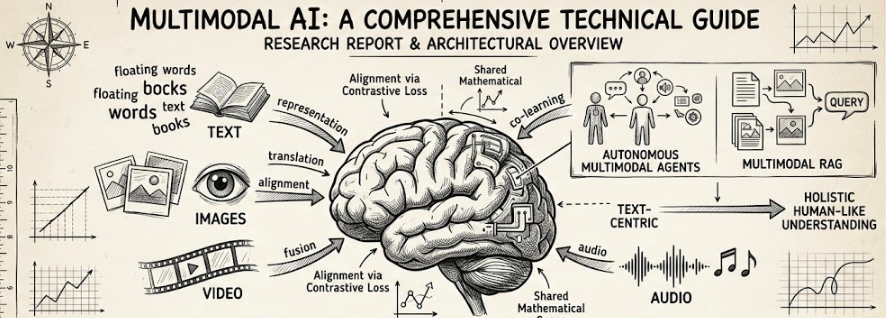

AI Engineering Cohort registrations are open. The cohort is a 16-week program for professionals who want to build production-grade AI applications. The cohort starts on 28th February 2026.

------------

Main topics:

- Week 1. Setup + Core Math
- Week 2. Terminology + MNIST
- Week 3. Basics of LLMs: Tokenization, Vectorization, Attention
- Week 4. Deep dive into LLMs: QKV matrices, Cross-Attention, Self-Attention, Multi-Head Attention
- Week 5. LLM Coding: Causal Masking + Code GPT
- Week 6. Think like an engineer: How massive models go from training to production
- Week 7. Optimization Hacks: KV Caching, Quantization, LoRA
- Week 8. The RAG Problem: What is RAG, Chunking, Reranking, Vector DBs
- Week 9. The RAG Code: Safety + Guardrails, Code RAG
- Week 10. AI Agents: ReAct Pattern, Tool Calling, LangChain, LangGraph
- Week 11. Context Engineering: Memory Systems, MCP, Multi-Agents
- Week 12. AI Engineering: Evals, Tradeoffs, Fine-tuning vs. RAG vs. Prompting
- Week 13. Thinking Models: Reasoning, Chain of Thought
- Week 14. Multi-modal Models: Images + Video, CLIP, Diffusion Models
- Week 15. Capstone Project: Build your own AI Project
- Week 16. Career Goals: How to move to AI Engineering

----------------

- Primary Audience: Software / AI Engineers
- Number of Sessions: 32 Live Classes + 15 General Sessions
- Involves Coding: Yes
- Classes: Live
- Pricing: ₹1,20,000 / $1400

----------------

This cohort is for working professionals looking to gain job-relevant AI capabilities. You can find the detailed syllabus on the website.

Register here: aiengg.dev

Wishing you a great 2026!