Ismail Elezi

@Ismail_Elezi

Principal Research Scientist at @Huawei Noah Ark in London (before that @TUM @Nvidia). Views are my own.

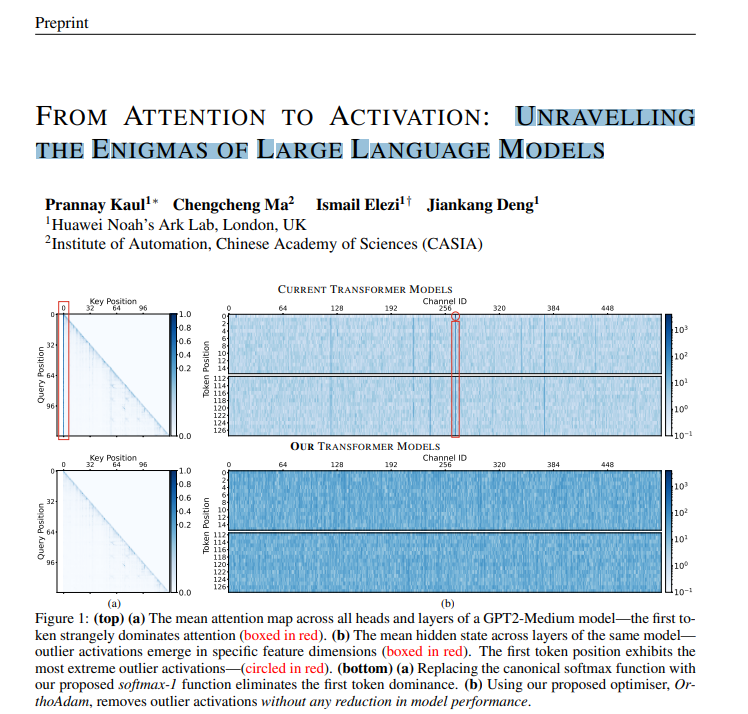

A new paper is out! CASteer: Steering Diffusion Models for Controllable Generation

arXiv link: arxiv.org/abs/2503.09630
Code: github.com/Atmyre/CASteer

Diffusion models are powerful, but their generation process can be difficult to control, which poses safety risks (e.g., generating images with nudity/violence). There are many ideas on how to address this, but most existing approaches are limited in the issues they can handle and often require additional training. CASteer, on the other hand, can handle a broad range of tasks while being completely training-free!

CASteer works by constructing a dedicated steering vector for each cross-attention layer in a diffusion model, using prompt pairs that capture specific concepts. By adding these vectors to, or subtracting them from, the outputs of the cross-attention layers during inference, we gain fine-grained control over the entire generation process.

We can build steering vectors for any kind of concept, which allows for a broad range of manipulations of the generated images. We can add/remove objects (e.g., apples), alter abstract attributes (e.g., nudity), and do style transfer, identity manipulation (switching Leonardo DiCaprio to Keanu Reeves), concept interpolation (going from cat to giraffe), and more (see picture). The simplicity of CASteer makes it easy to incorporate into most modern DMs.

I would like to thank ChengCheng Ma and @Ismail_Elezi, who provided invaluable assistance in this project, as well as my university supervisors: Ziquan Liu, Martin Benning, Gregory Slabaugh and Jiankang Deng. Hope to have further great collaborations!
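
For readers who want a feel for the steering idea described in the post, here is a toy sketch in plain Python. It is not the CASteer code (see the linked repository for that). It assumes a layer's cross-attention output can be treated as a flat vector, that the steering vector is the normalized difference of mean outputs over prompt pairs with and without the concept, and that steering is a scaled addition. The names `build_steering_vector`, `steer`, and `strength` are hypothetical.

```python
# Toy sketch of activation steering; NOT the CASteer implementation.
# Assumption: each cross-attention output is a flat list of floats.
from typing import List

Vector = List[float]


def mean_vector(vectors: List[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def build_steering_vector(with_concept: List[Vector],
                          without_concept: List[Vector]) -> Vector:
    """Difference of mean outputs for prompts with / without the concept.

    Normalizing to unit length is an assumption made for this sketch.
    """
    pos = mean_vector(with_concept)
    neg = mean_vector(without_concept)
    diff = [p - q for p, q in zip(pos, neg)]
    norm = sum(d * d for d in diff) ** 0.5
    return [d / norm for d in diff] if norm > 0 else diff


def steer(output: Vector, steering: Vector, strength: float) -> Vector:
    """Add (strength > 0) or subtract (strength < 0) the concept direction."""
    return [o + strength * s for o, s in zip(output, steering)]


# Hypothetical layer outputs collected from one layer for a few prompt pairs.
with_concept = [[2.0, 0.0], [4.0, 0.0]]
without_concept = [[0.0, 0.0], [0.0, 0.0]]

direction = build_steering_vector(with_concept, without_concept)
removed = steer([1.0, 1.0], direction, strength=-1.0)  # push away from concept
added = steer([1.0, 1.0], direction, strength=2.0)     # push toward concept
```

In a real diffusion model one such vector would be built per cross-attention layer and applied at every denoising step; how the strength is chosen is left open here.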