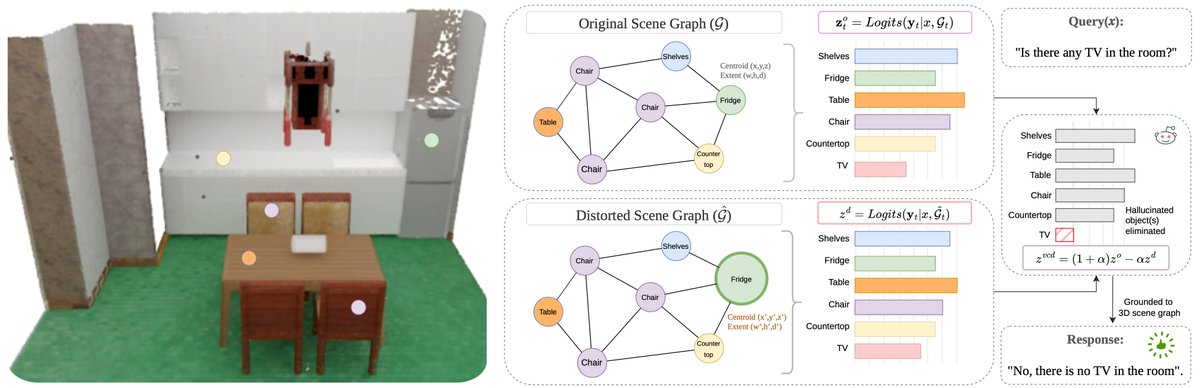

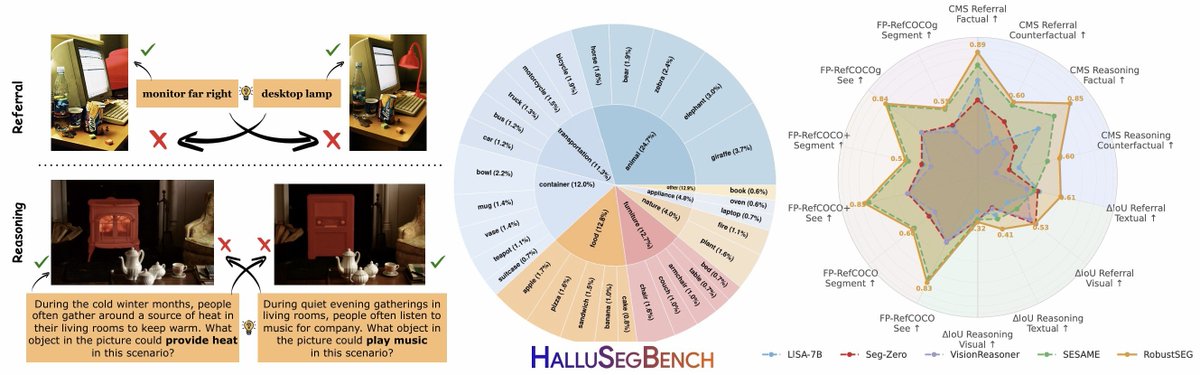

#iSchoolUI Assistant Professor Ismini Lourentzou has received a $600K @NSF CAREER award for her project, “Shaping Embodied Intelligence Through Language-Guided Introspection,” which aims to build safer and more reliable AI systems. 🤖 Congrats, Ismini! ▶️ bit.ly/42QoWjn