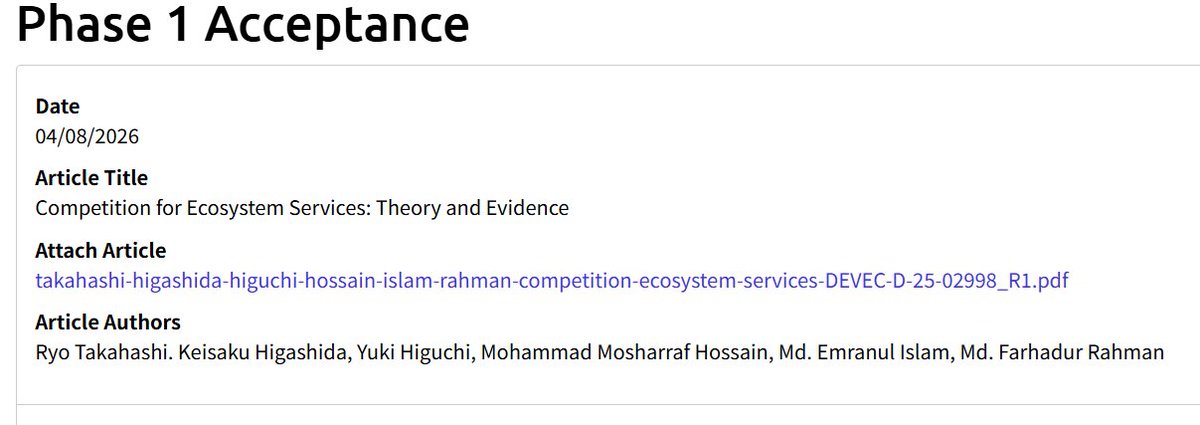

Andy Hall (@ahall_research)

Last weekend I posted that Claude Code created a full empirical polisci study in an hour. A lot of people asked: but how accurate was the study?

The answer: quite accurate, with some interesting mistakes and important limitations.

To get the answer, Graham Straus kindly offered to do an independent, manual audit—collecting the same data and extending the paper like Claude did, but without using any AI. Here’s what he found:

Claude replicated the original paper exactly, coded 29/30 CA counties correctly on treatment timing, and collected election data that correlated at above 0.999 with the manually collected data.
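
For anyone who wants to run a similar check on their own project, here is a minimal sketch of the two comparisons above: an exact-match agreement rate for coded values and a Pearson correlation for numeric data. This is not Graham's actual audit code. The county names, years, turnout figures, and function names are all made up for illustration.

```python
from math import sqrt


def agreement_rate(auto, manual):
    """Share of keys in `manual` whose value in `auto` matches exactly."""
    matches = sum(1 for key, value in manual.items() if auto.get(key) == value)
    return matches / len(manual)


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical treatment-year codings (not the real audit data).
auto_years = {"County A": 2018, "County B": 2020, "County C": 2022}
manual_years = {"County A": 2018, "County B": 2020, "County C": 2020}

# Hypothetical turnout shares for the same four units, in the same order.
auto_turnout = [0.41, 0.55, 0.62, 0.48]
manual_turnout = [0.41, 0.56, 0.62, 0.47]

print(f"coding agreement: {agreement_rate(auto_years, manual_years):.2f}")
print(f"turnout correlation: {pearson(auto_turnout, manual_turnout):.4f}")
```

A high correlation alone can hide a constant offset between the two sources, so it is worth pairing it with a direct look at the rows that differ.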

The three main errors Graham found—mis-coding one county’s treatment year, omitting data collection for several potentially relevant races in always-treated states, and not using non-presidential elections to compute turnout—are similar to the kinds of mistakes a human might make on a first pass at writing this paper, and had only small effects on the subsequent estimates.

On the other hand, when Claude tried to create new analyses that weren’t straightforward extensions of the original paper, it did worse. No hallucinations or crazy errors, per se, but it drifted from the prompt and produced results we found to be poorly conceived.

My read:

– AI today is already an extremely powerful way to rapidly update and extend well-contained, simple empirical papers.

– To do empirical social science research well, it absolutely needs guidance and oversight from human experts.

We’ll be sharing broader thoughts on this work, what we learned by doing it, and where we go from here next week on my blog. Thank you to the many, many people who reached out, asked questions, and offered feedback on this project.