Jaime RV

30 posts

Our Principles: Democratization, Empowerment, Universal Prosperity, Resilience, and Adaptability openai.com/index/our-prin…

B R O A D T I M E L I N E S We should have neither short AI timelines, nor long timelines, but a broad probability distribution over when transformative AI will arrive. My new essay explains why & explores the implications of such deep uncertainty. 🧵 1/

BREAKING: Trump: "We are going to cut off all trade with Spain. We don't want anything to do with Spain." We rely on Spain for olive oil, wine, pharmaceuticals, aerospace components, and many specialty chemicals.

Enforcing the SCR designation on Anthropic would be very bad for our industry and our country, and obviously for their company. We said this to the DoW before and after. We said that part of the reason we were willing to do this quickly was in the hopes of de-escalation. I feel competitive with Anthropic for sure, but successfully building safe superintelligence and widely sharing the benefits is way more important than any company competition. I believe they would do something to try to help us in the face of great injustice if they could. We should all care very much about the precedent. I saw another tweet claiming that I must not be willing to criticize the DoW (it said something about sucking their dick too hard to be able to say anything critical, but I assume this was the intent). To say it very clearly: I think this is a very bad decision from the DoW and I hope they reverse it. If we take heat for strongly criticizing it, so be it.

We may yet fail to rise to all the challenges posed by transformative AI. But it is worth celebrating that when it mattered most and we were asked to compromise the most basic principles of liberty, we said no. I hope others will join. notdivided.org

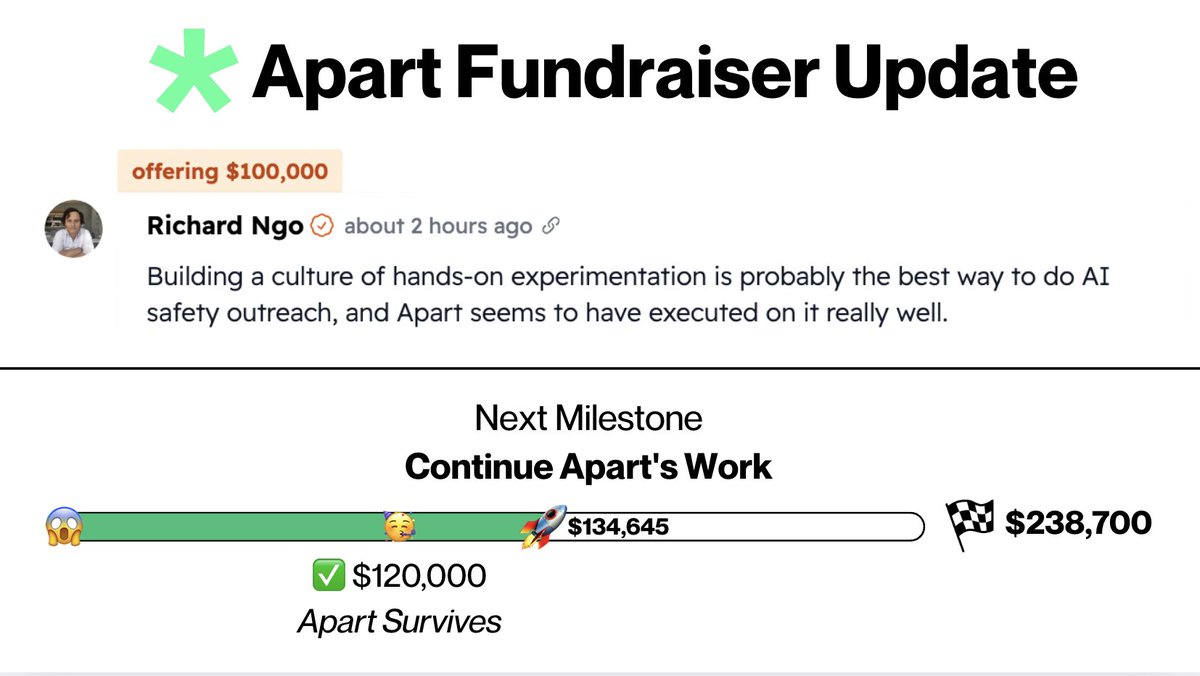

We have been overwhelmed by the support we have received since launching our fundraiser! 🫶 In only 10 days, we have received over 30 individual donations and even more testimonials from people describing how Apart impacted their career and their transition into AI safety!

I just committed $100k to this. I want to promote a culture of hands-on experimentation figuring out how and why LLMs work, and Apart’s hackathons are a great channel for this. I encourage you to consider donating too.

From the start, we've been dedicated to providing a scientific understanding of AI’s risks to protect people's safety and security🔎🔐 Today we’re crystallising that mission and changing our name to the AI Security Institute. 1/3