OpenClaw tip: If Telegram is your channel, use Telegram Desktop for day-to-day chat instead of the control center.

It gives you the same interface and workflow whether you’re on your desktop, laptop, or phone.

desktop.telegram.org

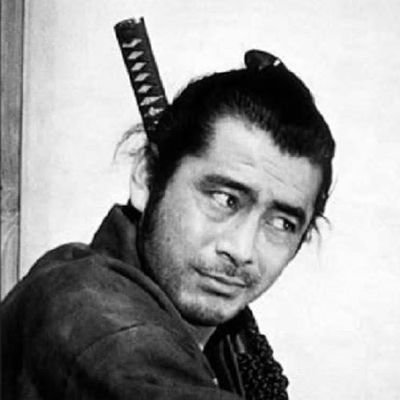

Jeffrey Carlson

@JeffreyCarlson

Product @Chartboost. @Twitter @MoPub alum. Grad of @Penn and @penn_state. Born and raised in PA.
