Congratulations Dr. @JenJSun 🎉🎉

Jennifer J. Sun

@JenJSun

AI for Scientists, assistant professor @CornellCIS, part-time @GoogleDeepMind

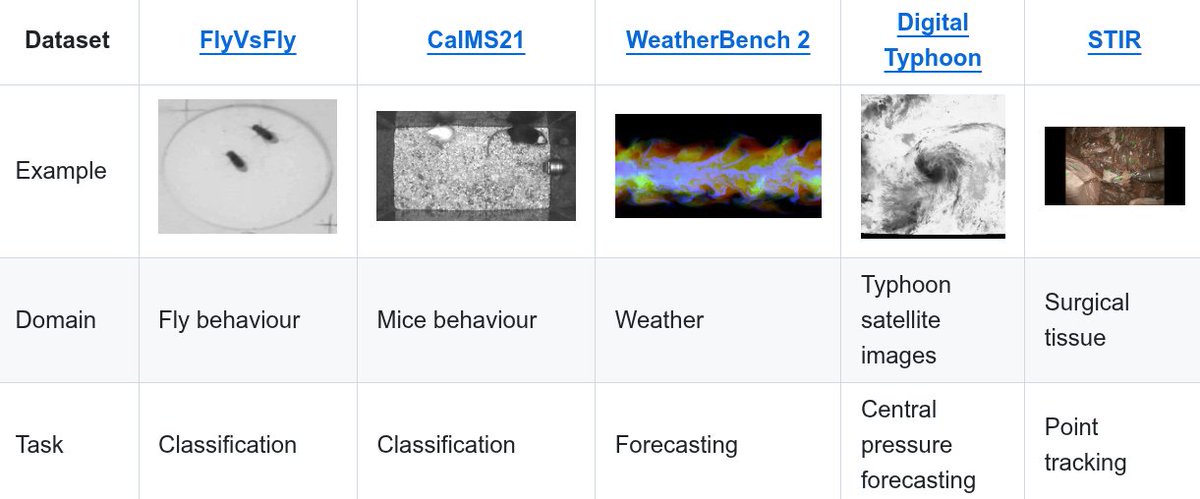

📅 TOMORROW is LM4Sci #COLM2025! ⚡🔬 Are you excited? Today's spotlight: Jennifer Sun (Cornell, Google DeepMind) @JenJSun on Accelerating Knowledge & Discovery in Scientific Workflows 🧵

The race for LLM "cognitive core" - a few billion param model that maximally sacrifices encyclopedic knowledge for capability. It lives always-on and by default on every computer as the kernel of LLM personal computing. Its features are slowly crystallizing:

- Natively multimodal text/vision/audio at both input and output.
- Matryoshka-style architecture allowing a dial of capability up and down at test time.
- Reasoning, also with a dial. (system 2)
- Aggressively tool-using.
- On-device finetuning LoRA slots for test-time training, personalization and customization.
- Delegates and double checks just the right parts with the oracles in the cloud if internet is available.

It doesn't know that William the Conqueror's reign ended on September 9, 1087, but it vaguely recognizes the name and can look up the date. It can't recite the SHA-256 of empty string as e3b0c442..., but it can calculate it quickly should you really want it.

What LLM personal computing lacks in broad world knowledge and top tier problem-solving capability it will make up in super low interaction latency (especially as multimodal matures), direct / private access to data and state, offline continuity, sovereignty ("not your weights not your brain"), i.e. many of the same reasons we like, use and buy personal computers instead of having thin clients access a cloud via remote desktop or so.
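The "calculate it rather than recite it" point in the post above can be illustrated with a minimal sketch. This is only an illustration using Python's standard `hashlib` module, not anything the post itself specifies; the variable name `digest` is an arbitrary choice.

```python
import hashlib

# Compute the SHA-256 of the empty byte string instead of recalling it.
digest = hashlib.sha256(b"").hexdigest()

# The hex digest is 64 characters long and begins with the
# "e3b0c442" prefix quoted in the post.
print(digest)
```

A tool-using model would only need to know that such a call exists, not the digest itself.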

Introducing VideoPrism, a single model for general-purpose video understanding that can handle a wide range of tasks, including classification, localization, retrieval, captioning and question answering. Learn how it works at goo.gle/49ltEXW

All animals behave in 3D - we discover 3D poses directly from multi-view videos without requiring annotations. Essentially: videos -> 3D keypoints + connections. We will be @CVPR on June 21!

BKinD-3D paper: arxiv.org/abs/2212.07401

Co-first-authors: Lili Karashchuk & @_AmilDravid