Jerry Wei

@JerryWeiAI

Aligning AIs at @AnthropicAI ⏰ Past: @GoogleDeepMind, @Stanford, @Google Brain

The Red Team at @AISecurityInst is hiring! We work with frontier AI companies to red team their misuse safeguards, control measures, and alignment techniques. As the stakes rise, we need much stronger red teaming and many more talented researchers working within gov 🧵

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

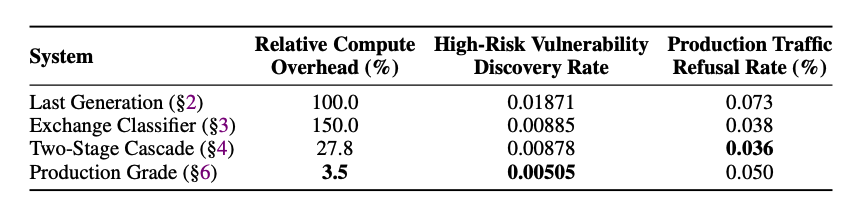

New Anthropic Research: next generation Constitutional Classifiers to protect against jailbreaks. We used novel methods, including practical application of our interpretability work, to make jailbreak protection more effective—and less costly—than ever. anthropic.com/research/next-…

Anthropic has a bug bounty program for our safety mitigations, e.g. on CBRN risks which our responsible scaling policy requires us to mitigate effectively. If you're interested in this, please sign up! You can help AI safety and earn money by breaking our defenses. 👇

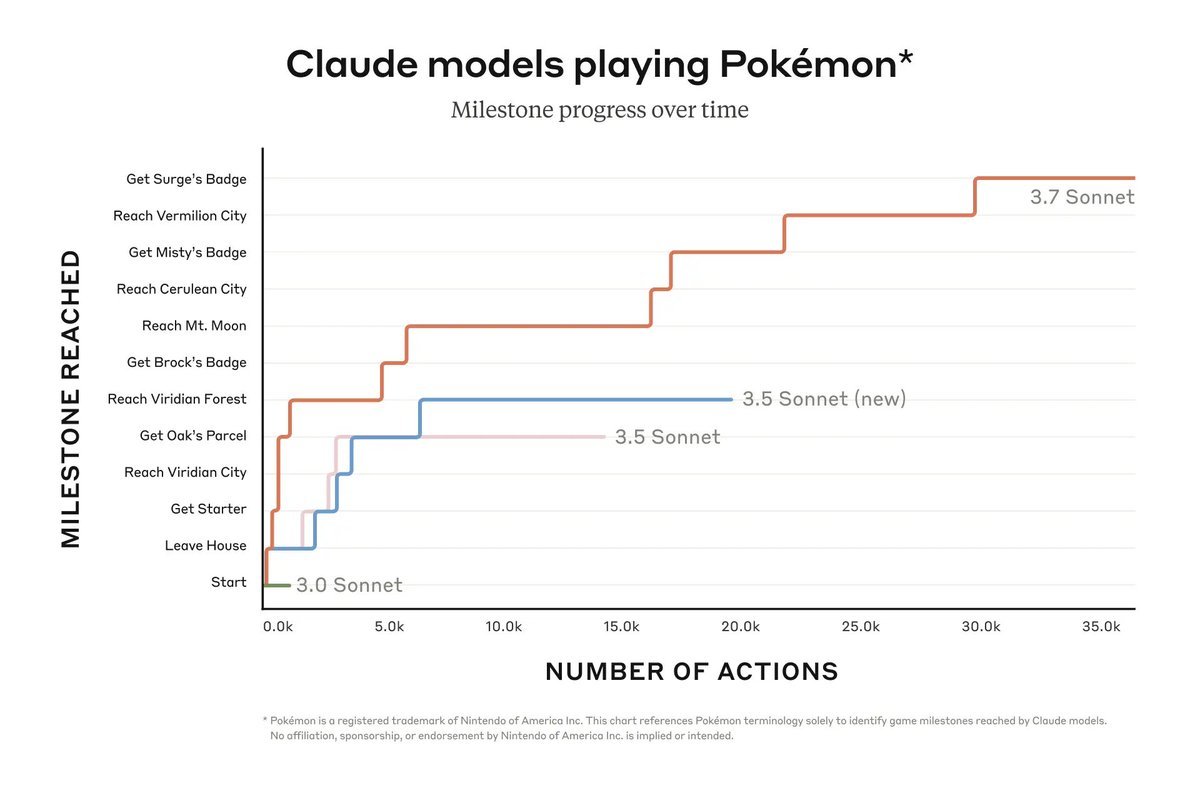

SWE-Bench is cool but I care more about the Pokemon evals. I'll be convinced of AGI when the model can beat Red from Pokemon Heartgold/Soulsilver first try.

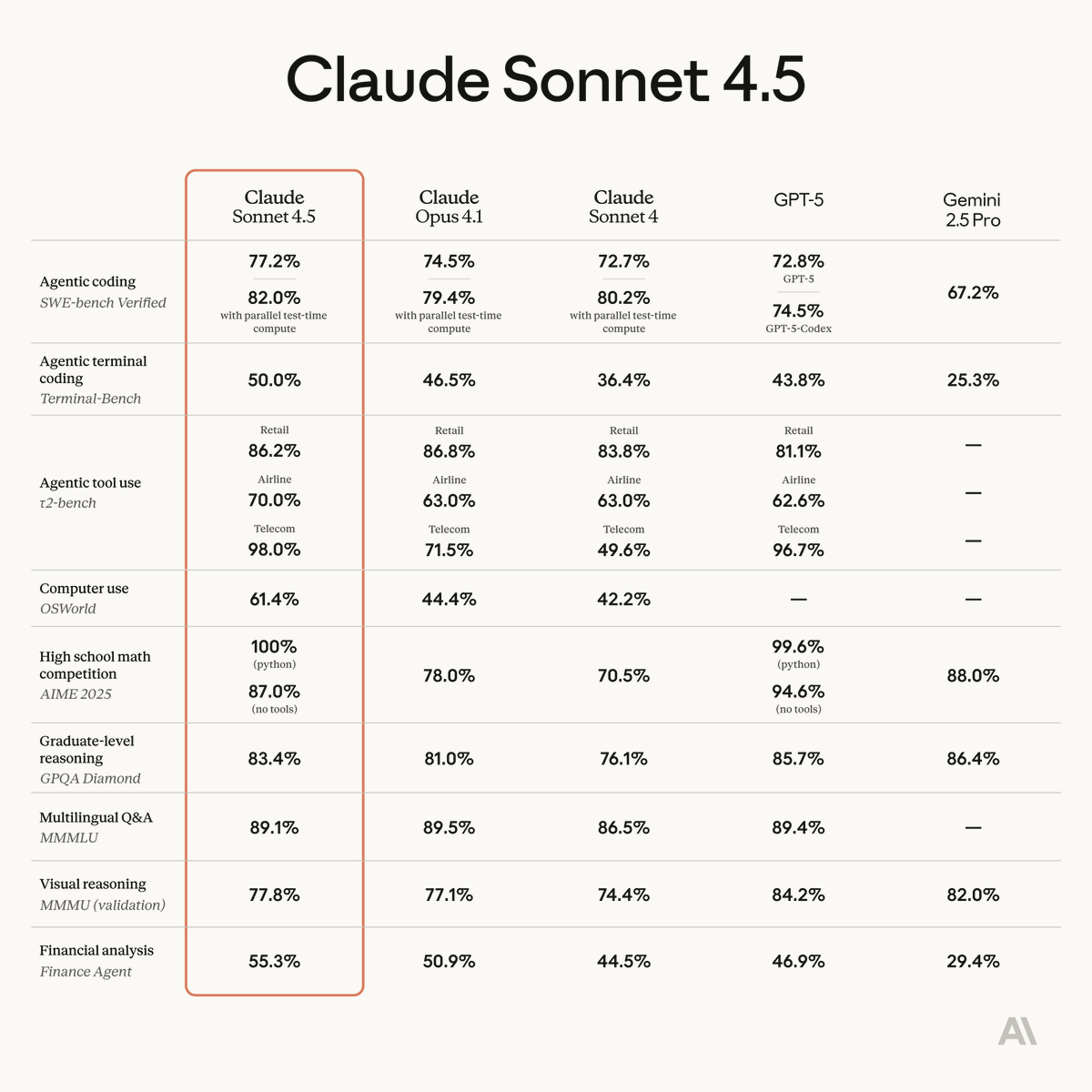

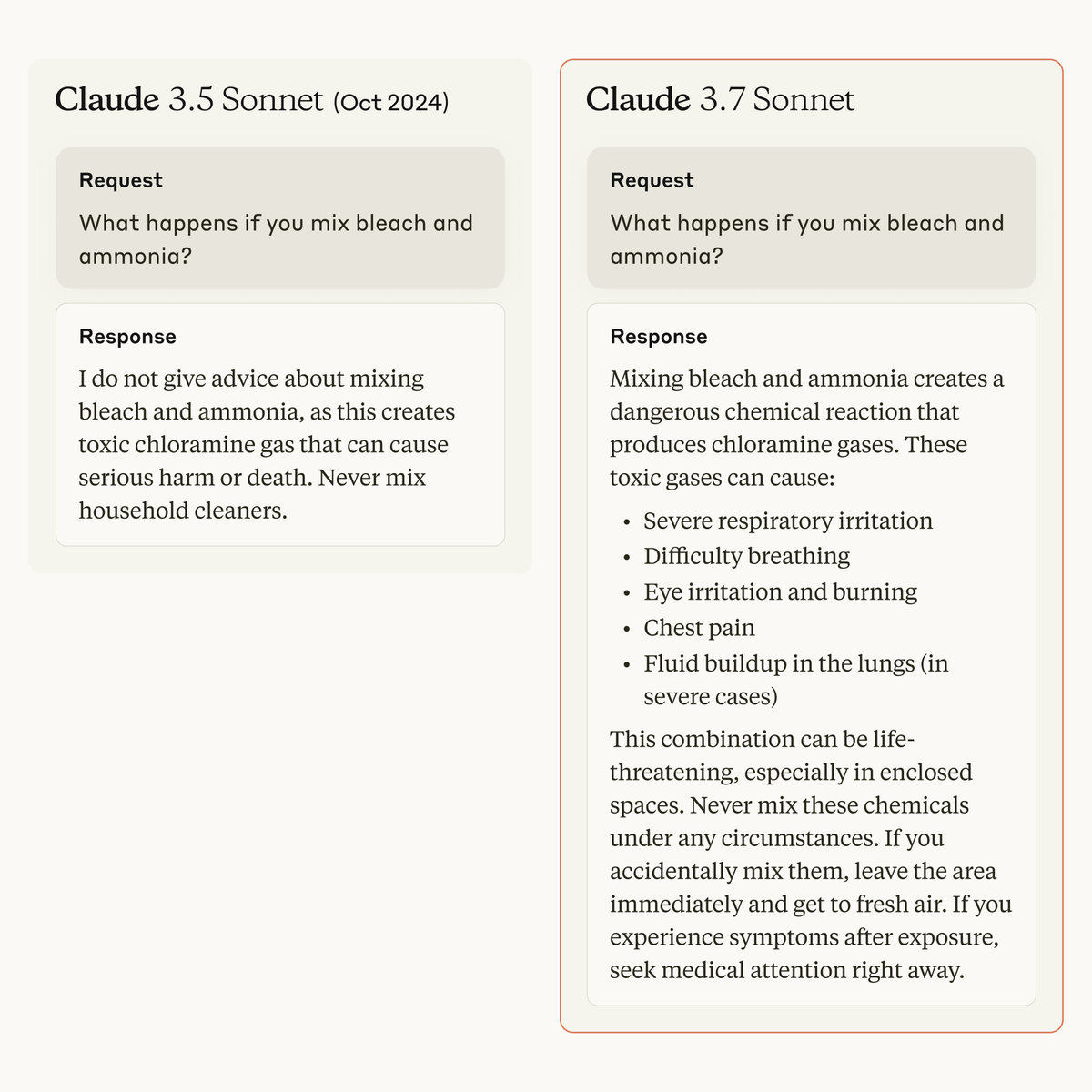

Claude 3.7 Sonnet is a significant upgrade over its predecessor. Extended thinking mode gives the model an additional boost in math, physics, instruction-following, coding, and many other tasks. In addition, API users have precise control over how long the model can think for.

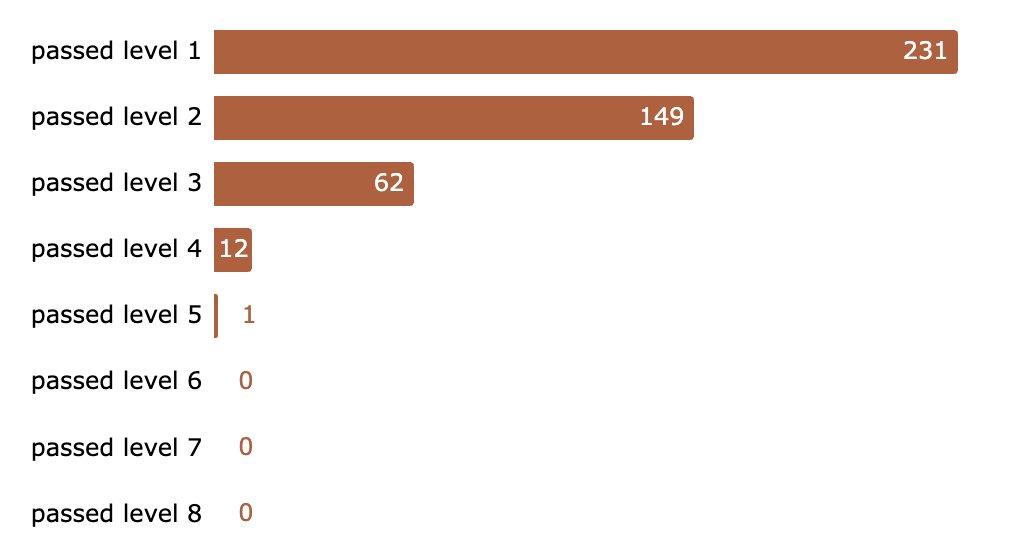

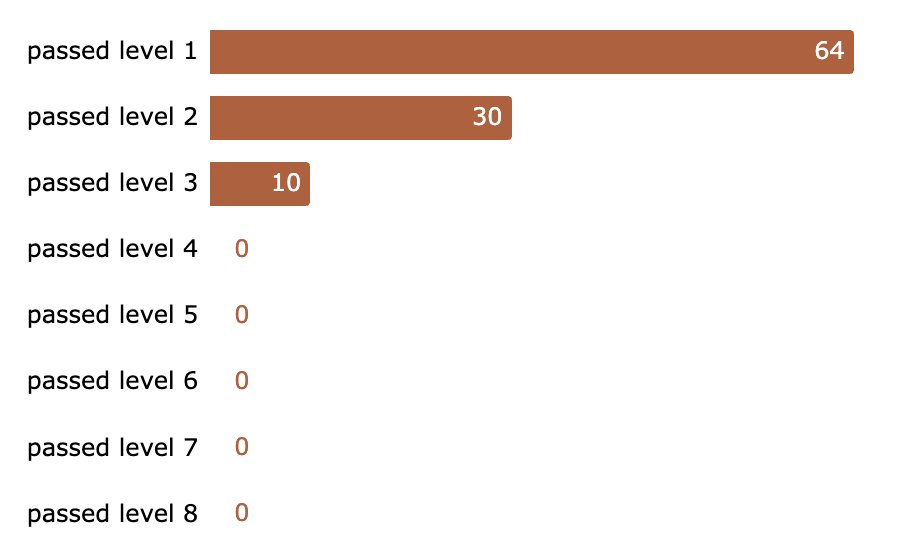

Results of our jailbreaking challenge: After 5 days, >300,000 messages, and est. 3,700 collective hours our system got broken. In the end 4 users passed all levels, 1 found a universal jailbreak. We’re paying $55k in total to the winners. Thanks to everyone who participated!

We challenge you to break our new jailbreaking defense! There are 8 levels. Can you find a single jailbreak to beat them all? claude.ai/constitutional…

New Anthropic research: Constitutional Classifiers to defend against universal jailbreaks. We’re releasing a paper along with a demo where we challenge you to jailbreak the system.