Sabitlenmiş Tweet

Jint3x

746 posts

Jint3x

@Jint3x

Undergraduate student | AI, Agentic Programming

Katılım Ekim 2018

43 Takip Edilen20 Takipçiler

@lady_valor_07 Do not take fluoroquinolone-class antibiotics unless life-threatening emergency.

English

@ShanuMathew93 Lol, I do the same (not sure if I have bad vision). After 8 hours of reading, there's no way in hell to make me read 14px or 16px font-size text.

English

@chatgpt21 They look somewhat weak atm, but that's what is publicly known. They have the infra, so if they do have capable people, they are definitely doing something.

I still use Grok 4.20 for search, much better than everything else I've tried.

English

Jint3x retweetledi

@awnihannun Increasing context size + context performance (especially the performance) will definitely make good compaction much stronger.

English

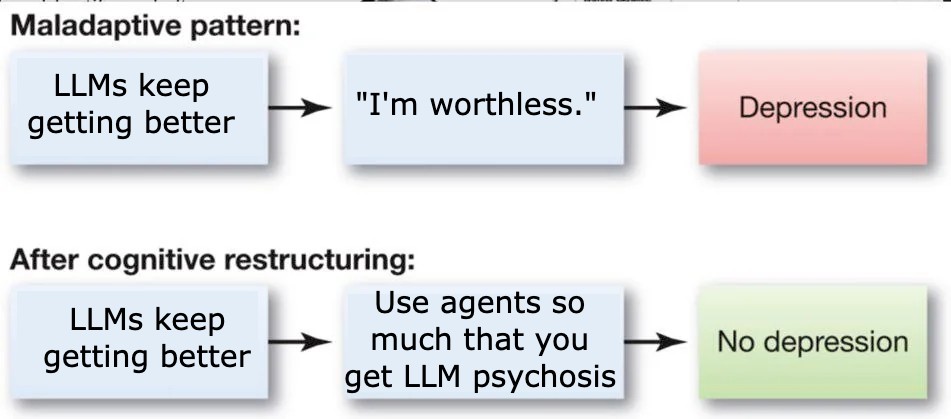

I've been thinking a bit about continual learning recently, especially as it relates to long-running agents (and running a few toy experiments with MLX).

The status quo of prompt compaction coupled with recursive sub-agents is actually remarkably effective. Seems like we can go pretty far with this. (Prompt compaction = when the context window gets close to full, model generates a shorter summary, then start from scratch using the summary. Recursive sub-agents = decompose tasks into smaller tasks to deal with finite context windows)

Recursive sub-agents will probably always be useful. But prompt compaction seems like a bit of an inefficient (though highly effective) hack.

The are two other alternatives I know of 1. online fine-tuning and 2. memory based techniques.

Online fine-tuning: train some LoRA adapters on data the model encounters during deployment. I'm less bullish on this in general. Aside from the engineering challenges of deploying custom models / adapters for each use case / user there are a some fundamental issues:

- Online fine-tuning is inherently unstable. If you train on data in the target domain you can catastrophically destroy capabilities that you don't target. One way around this is to keep a mixed dataset with the new and the old. But this gets pretty complicated pretty quickly.

- What does the data even look like for online fine tuning? Do you generate Q/A pairs based on the target domain to train the model? You also have the problem prioritizing information in the data mixture given finite capacity.

Memory based techniques: basically a policy for keeping useful memory around and discarding what is not needed. This feels much more like how humans retain information: "use it or lose it". You only need a few things for this to work:

- An eviction/retention policy. Something like "keep a memory if it has been accessed at least once in the last 10k tokens".

- The policy needs to be efficiently computable

- A place for the model to store and access long-term memory. Maybe a sparsely accessed KV cache would be sufficient. But for efficient access to a large memory a hierarchical data structure might be beter.

English

@philipkiely Downloaded the book yesterday, already at chap 4 and would've read more if I had the time. As someone who doesn't specialize in inference engineer, the book has been very interesting so far.

English

I made a mistake yesterday and now I have a (good) problem.

Turns out I massively underestimated demand for Inference Engineering. Yesterday we saw:

> 2M+ views, trending on tech twitter

> Shipped books to awesome people worldwide

> Thousands more asking for the book

The problem?

I'm sold out. Was on the phone with my printer in Belgium first thing this morning, more copies on the way by air freight.

In the meantime, the PDF on the website is free.

English

Jint3x retweetledi