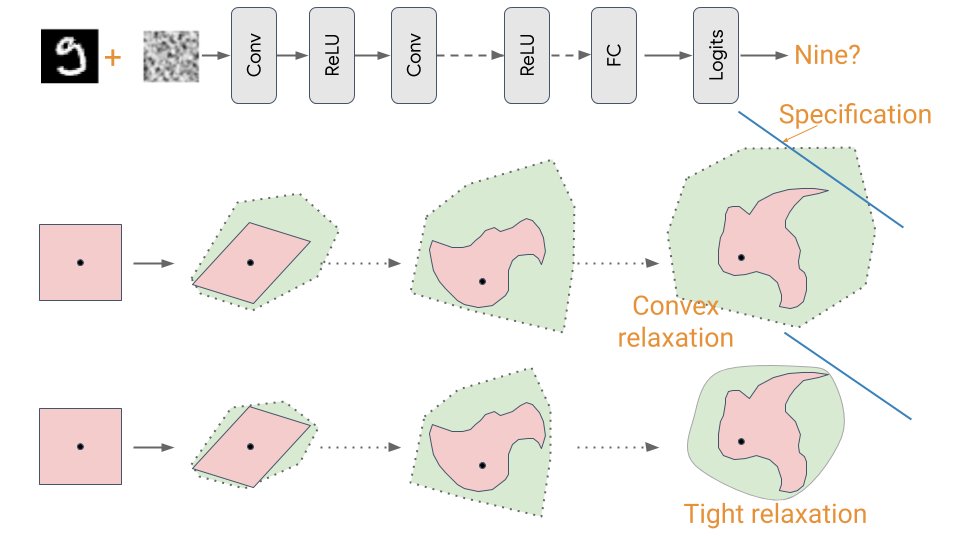

Over several decades, software engineers have developed a toolkit for debugging - from unit testing to formal verification. Our Robust & Verified AI team works on analogous approaches for ensuring that machine learning systems are robust at deployment: deepmind.com/blog/robust-an…