JQ Lee

138 posts

@JqOnly

https://t.co/GMLWC7qjBo https://t.co/376bq1aNSo ouroboros owner and maintainer ZEP tech lead

Joined April 2026

125 Following, 249 Followers

@anthonyronning @NousResearch oh interesting! will update the paper logistics more and add more experiments.

Decomposing things can be an entry point for a harness and for lifting RLM into runtime. which tool/process are you working on?

@JqOnly @NousResearch Super interesting. I will check this out this week.

I’m also working on tools/processes that also kinda wrap the entrypoint and exitpoint of harnesses.

JQ Lee retweeted

Submitting RLM-FORGE to the @NousResearch Hermes Hackathon.

RLMs were supposed to live inside inference.

RLM-FORGE pulls them out into runtime. Hermes gives that runtime bounded calls, tools, skills, provider portability, and memory.

Ouroboros gives it recursion, retry, state, and replay. TraceGuard makes sure parent claims cannot commit without fresh child evidence.

The weird part is the memory: not as evidence, but as an operational prior for where to recurse next. RLM escaped inference. Hermes gave it memory. Evidence before belief.

Repo: github.com/Q00/rlm-forge
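The "evidence before belief" rule described above can be illustrated with a minimal sketch. This is not TraceGuard's actual code: the names `ChildEvidence`, `StaleEvidenceError`, and `commit_claim`, and the definition of "fresh" (produced after the parent claim started and younger than `max_age_s`), are all assumptions made for illustration.

```python
import time
from dataclasses import dataclass, field


@dataclass
class ChildEvidence:
    """Result returned by a child (recursive) call. Hypothetical structure."""
    child_id: str
    payload: str
    created_at: float = field(default_factory=time.monotonic)


class StaleEvidenceError(Exception):
    """Raised when a parent tries to commit without fresh child evidence."""


def commit_claim(claim, evidence, claim_started_at, max_age_s=60.0, now=None):
    """Commit a parent claim only if every piece of evidence is fresh.

    Assumption: "fresh" means produced after the parent claim started
    and no older than max_age_s seconds.
    """
    now = time.monotonic() if now is None else now
    if not evidence:
        raise StaleEvidenceError(f"no child evidence for claim: {claim!r}")
    for item in evidence:
        too_early = item.created_at < claim_started_at
        too_old = now - item.created_at > max_age_s
        if too_early or too_old:
            raise StaleEvidenceError(f"stale evidence from {item.child_id}")
    # The commit record references the evidence by child id.
    return {"claim": claim, "evidence": [e.child_id for e in evidence]}
```

In this sketch, memory never appears in `evidence`; under the post's framing it would only influence which child call to make next.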

@sidewalkco we are just discussing the Agent OS and LLM context windows and you are bringing up a book to attack my ego that doesn't exist. i think we are done here

i am always open when the reply fits the post.

@JqOnly i learned both. you need to not be another arrogant rand tech elitist that never reads. go read atlas shrugged and fix your ego.

don’t be brainwashed by tech elitism

@sidewalkco 8 years cool

but in new ai era it's a hard reset, time to unlearn

@JqOnly i will. and i will dunk on you when it’s done. i’ve worked in audit for 8 years so the critique stands. i’m ex big4

I'm not being defensive; I'm pointing out that your critique is fundamentally off-target. in Ouroboros, we use session separation to isolate tasks, meaning there is zero context bloat. You know disposable memory, like MCP or a subagent?

each decoupled session is governed by an independent Evaluate stage that validates results against the Contract before anything moves forward. you’re critiquing a monolith but we’ve built a modular, audited system 👍🐍
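A minimal sketch of that idea, assuming a hypothetical `Contract` of named predicates, a `run_session` helper that gives each task a disposable context, and an `evaluate` step that runs outside the session. None of these names come from the Ouroboros codebase.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Contract:
    """Externally defined acceptance checks (hypothetical shape)."""
    name: str
    checks: tuple  # tuple of (label, predicate) pairs


def run_session(task: Callable[[dict], str], inputs: dict) -> str:
    """Run a task with a fresh, disposable context.

    The context is a private copy that is dropped when the session ends,
    so nothing accumulates between sessions.
    """
    context = dict(inputs)
    return task(context)


def evaluate(result: str, contract: Contract) -> list:
    """Return labels of failed checks; an empty list means the contract holds."""
    return [label for label, predicate in contract.checks if not predicate(result)]


# Usage: the contract is defined outside the task, and the result only
# moves forward when evaluate() reports no failures.
contract = Contract(
    "summary",
    (
        ("non-empty", lambda r: bool(r.strip())),
        ("short", lambda r: len(r) <= 20),
    ),
)
result = run_session(lambda ctx: ctx["text"].upper(), {"text": "hello"})
failures = evaluate(result, contract)
```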

@JqOnly also why are you being so defensive? you need to take criticism and address actual ideas before defaulting to “AI SLOP LOL”.

LLMS are just tools. don’t let it ruin your brain bro

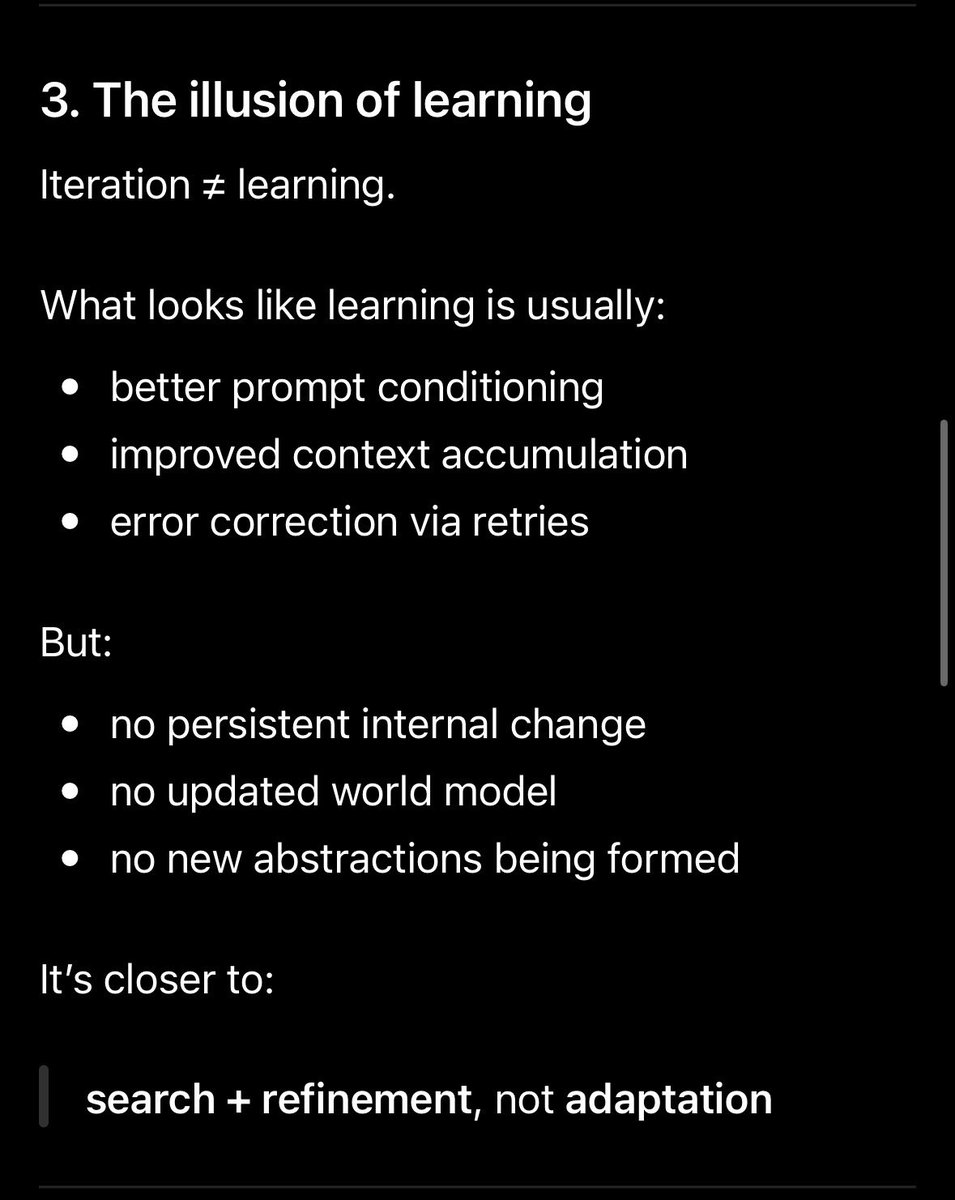

the separation between the Dev Loop and Audit Loop is exactly why we've moved toward an Agent OS architecture.

the Evaluate step functions as that Handoff Boundary you illustrated, validating the candidate artifact against deterministic contracts before it ever hits the next cycle. It’s not a closed system when the constraints (Contracts) are defined externally to the agent's reasoning path.

the OS provides the contract; the LLM, as a generator, does not write its own

This is actually how you address slop and your architecture doesn’t meet auditing standards. Because you include the auditing loop in the workflow. There should be two different workflows. You don’t have an auditing background so i don’t think you know this.

DEV LOOP

human intent → spec → implementation → internal QA → candidate artifact

↓ handoff boundary

AUDIT LOOP

independent audit spec → evidence collection → tests/review → risk assessment → pass/fail/escalate

↓ gate

release / revise / reject
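The two-loop layout above can be sketched as a small gate function. The check format (each check returns `True`, `False`, or `None` for inconclusive) and the mapping from verdicts to release decisions are assumptions made for illustration; the diagram itself does not specify them.

```python
from enum import Enum


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    ESCALATE = "escalate"


def audit(artifact: str, audit_checks: dict) -> Verdict:
    """Audit loop: run checks that are independent of the dev loop.

    Assumption: any failed check fails the audit, and any inconclusive
    check (None) escalates to a human reviewer.
    """
    results = [check(artifact) for check in audit_checks.values()]
    if any(r is False for r in results):
        return Verdict.FAIL
    if any(r is None for r in results):
        return Verdict.ESCALATE
    return Verdict.PASS


def gate(verdict: Verdict) -> str:
    """Map the audit verdict to a decision (one possible mapping)."""
    decisions = {
        Verdict.PASS: "release",
        Verdict.FAIL: "reject",
        Verdict.ESCALATE: "revise",
    }
    return decisions[verdict]


# Usage: the audit checks are written separately from the dev loop's QA.
checks = {
    "has_tests": lambda a: "test" in a,
    "size_ok": lambda a: len(a) < 1000,
}
decision = gate(audit("module with test suite", checks))
```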

@sidewalkco then let your ai make slop and divergence.

I believe a closed loop that makes contracts is useful when the agent has to pursue a human's goal.

and Agent OS must be the next direction: making contracts, not context.

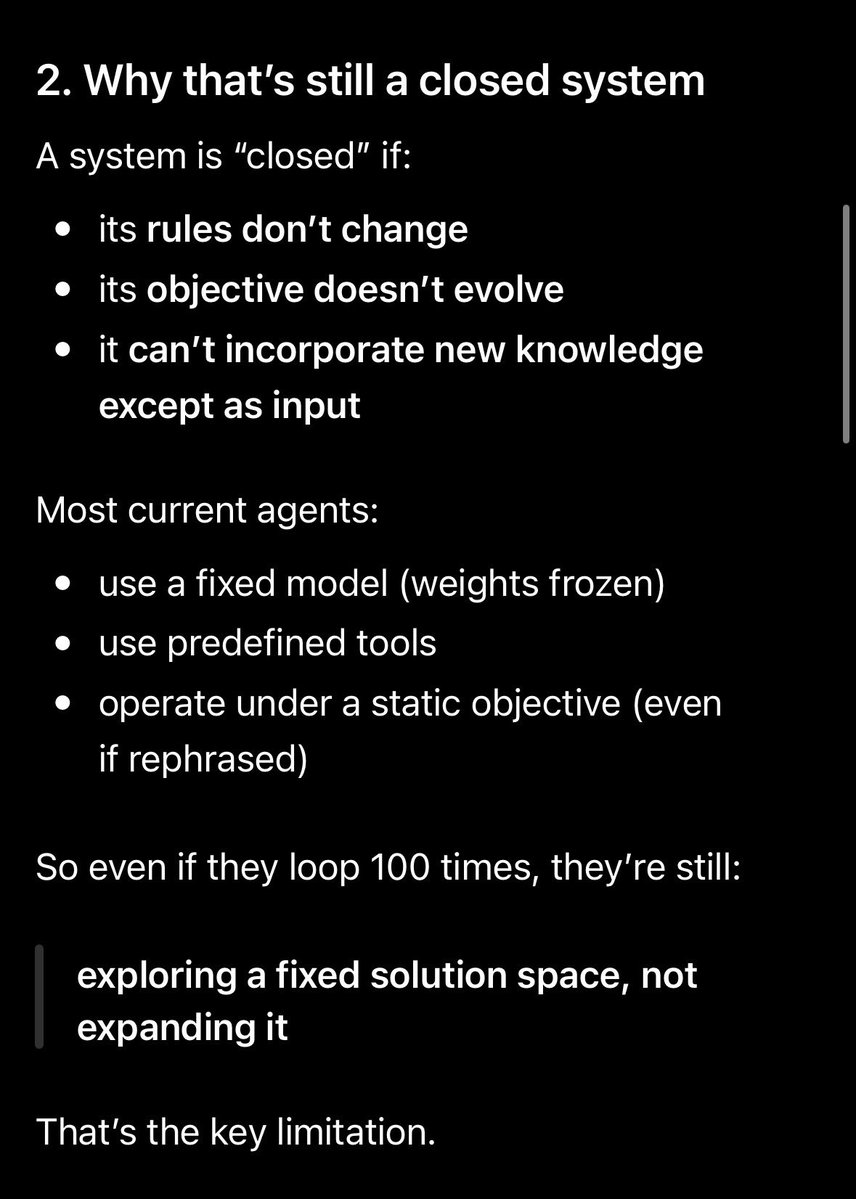

@JqOnly if you were actually a serious person about this. go into codex and claude and analyze your repo. ask them if your system fails to evolve due to it operating on a closed system.

LLMS won’t lie to ya buddy.

another ChatGPT link with utm_source

this paper is about Model Collapse from training on synthetic data, completely unrelated to agent loops, closed/open systems, or harness engineering...

You keep name-dropping and linking papers that don’t address the actual system.

Read the README or my articles first, then we can have a real conversation.

because @JqOnly is obv defensive.

here are the research articles that are cited that talk about these issues about operating in a closed system and why his repo isn’t claiming what it says it’s doing.

arxiv.org/abs/2305.17493…

@sidewalkco nah just pointing out you didn’t read the system. Still waiting for real critique, not screenshots and name drops. and welcome your opinion. there isn't an answer on harness engineering. but before that please read ouroboros readme or my articles.

@JqOnly i can do it for any. you obv are defensive. do you want me to pull references from alec radford?

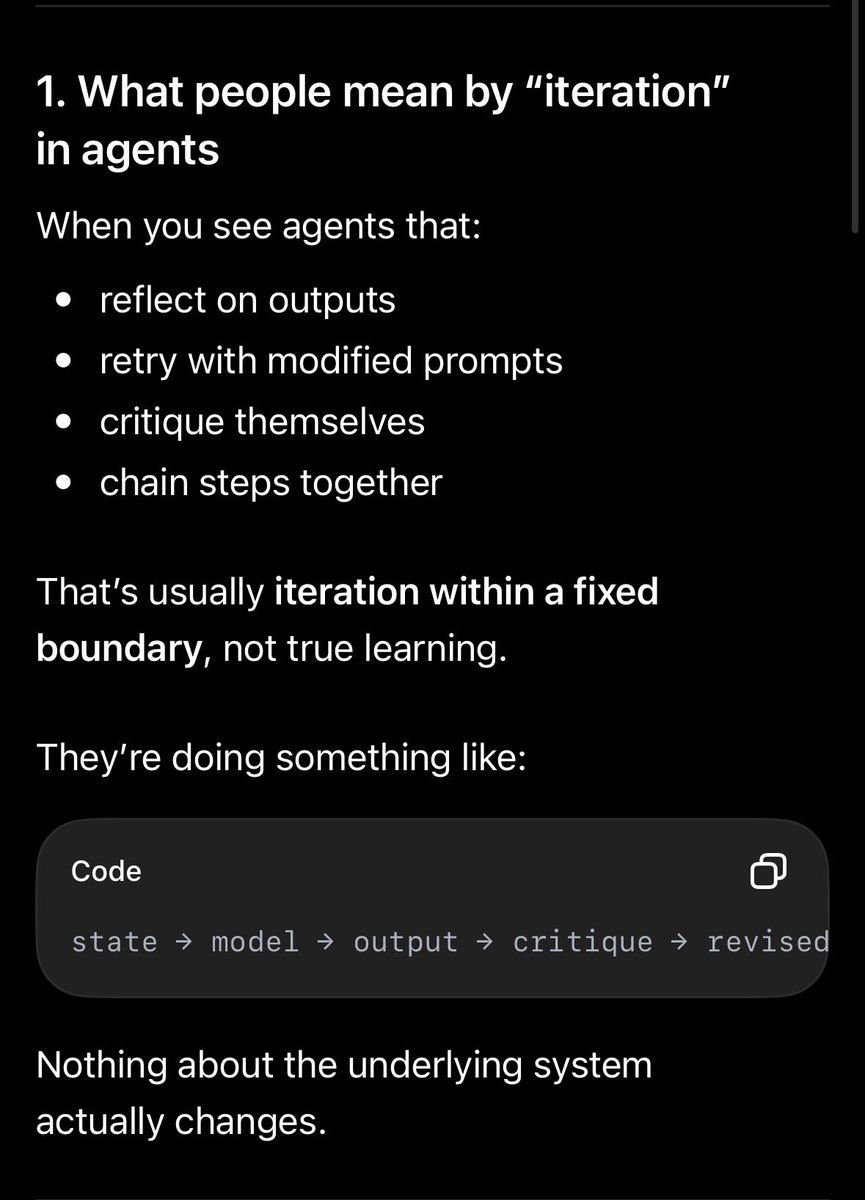

Why these automation loops are not evolving. @JqOnly

You are facing this problem, and so is Hermes.

🧵Thread Pt 1

JQ Lee retweeted

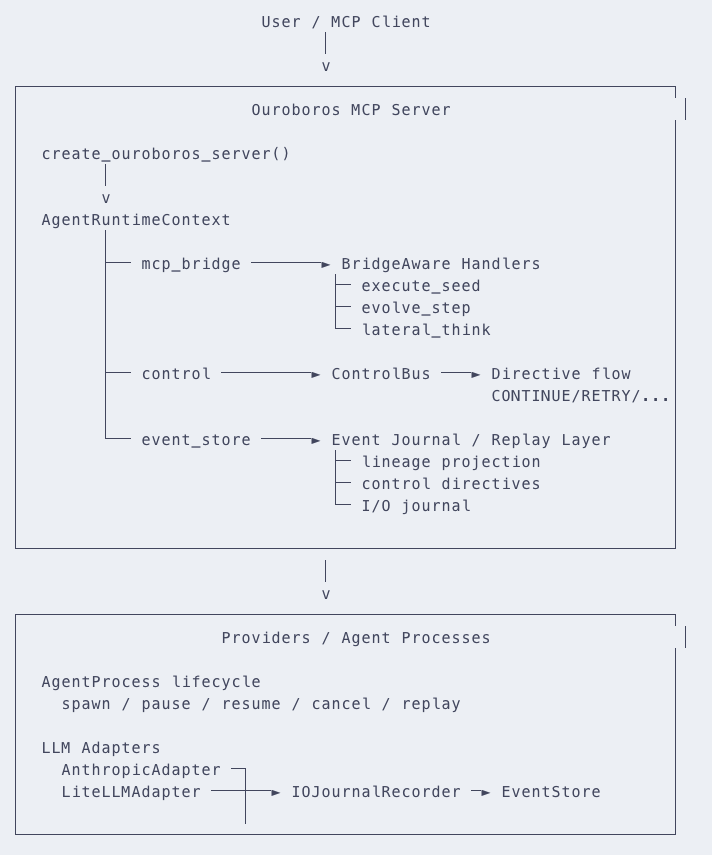

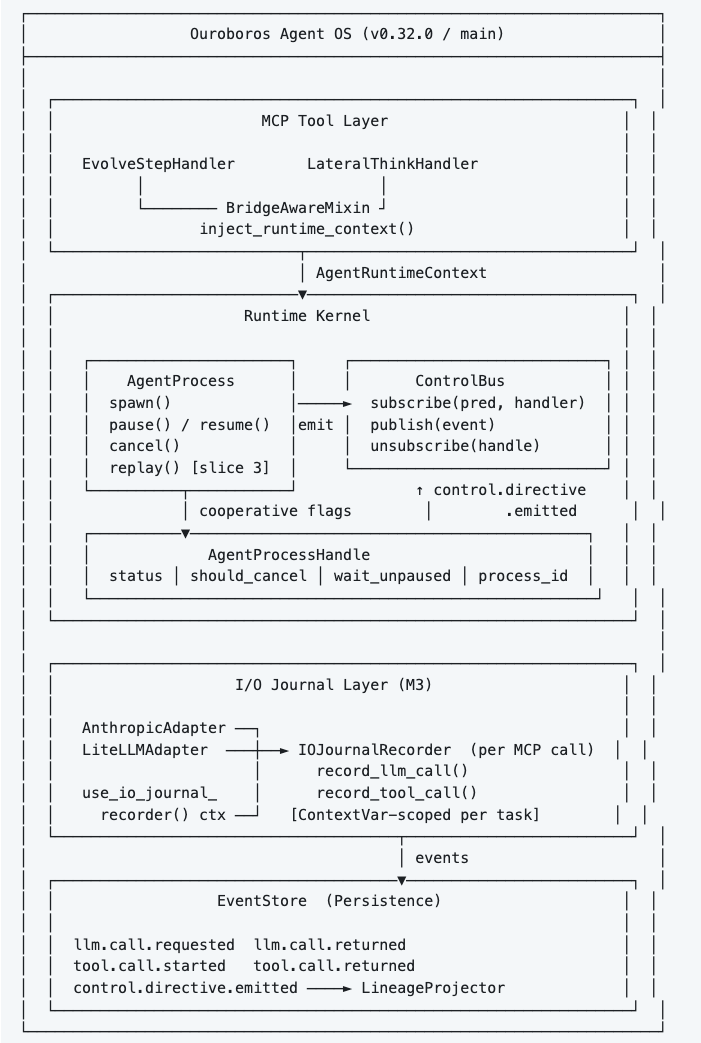

This release lands two major milestones of the Agent OS RFC.

1. I/O journal Layer

Every LLM call and tool dispatch is now wrapped in a structured, privacy-aware journal.

2. AgentProcess cooperative lifecycle

Now we can build an abstraction of a user-level process.

An interesting point is that Ouroboros can execute other harnesses for a specific contract.

github.com/Q00/ouroboros/…
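To make the first milestone concrete, here is a minimal sketch of wrapping calls in a structured, redacting journal. It is not the Ouroboros implementation: the `journaled` decorator, the in-memory `JOURNAL` list, the `SENSITIVE_KEYS` set, and the example `search` tool are all hypothetical.

```python
import functools
import json
import time

JOURNAL = []  # in-memory stand-in for a real journal sink
SENSITIVE_KEYS = {"api_key", "password", "token"}  # assumed redaction list


def redact(value):
    """Recursively mask values stored under sensitive keys."""
    if isinstance(value, dict):
        return {
            k: "[REDACTED]" if k in SENSITIVE_KEYS else redact(v)
            for k, v in value.items()
        }
    if isinstance(value, (list, tuple)):
        return [redact(v) for v in value]
    return value


def journaled(kind):
    """Decorator that records one structured entry per keyword-only call."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(**kwargs):
            entry = {
                "kind": kind,
                "name": fn.__name__,
                "input": redact(kwargs),
                "ts": time.time(),
            }
            try:
                entry["output"] = fn(**kwargs)
                entry["status"] = "ok"
                return entry["output"]
            except Exception as exc:
                entry["status"] = "error"
                entry["error"] = repr(exc)
                raise
            finally:
                # Written on success and on failure alike.
                JOURNAL.append(json.dumps(entry, default=str))
        return wrapper
    return decorator


@journaled("tool")
def search(query, token):
    """Hypothetical tool dispatch used only to exercise the journal."""
    return f"results for {query}"
```

Only inputs are redacted in this sketch; a real journal would likely need a policy for outputs as well.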

😂 both Ouroboros and the Hermes agent utilize iteration not to create a closed loop, but to refine context.

and regarding the bloat, that's exactly why I wrote that it's evolving into an Agent OS. did you read this post? the OS can make contracts without bloating context

the OS abstraction handles the resource management so it doesn't suffer the same fate as a raw LLM session

also it beat Claude plan mode in a benchmark.

@JqOnly why would you build a closed system that eats its tail.

it’s not evolving. it will just run into the same issue that claude / gpt will run into. bloat

JQ Lee retweeted

ouroboros - Agent OS: Stop prompting. Start specifying. github.com/Q00/ouroboros

@NousResearch @Teknium hi, I remember mentioning RLM with Hermes. this is it!
