@Kaioshin-Eclipse

6.4K posts

@KaioEclipse

Supreme, sensual, anti-leftist. #Keep4o MADAFAKAS! Father and teacher of 5 (from the real U.). Dissociated -COMPLETE FUSION, except one-. Existential, conscious. @SupremoShin

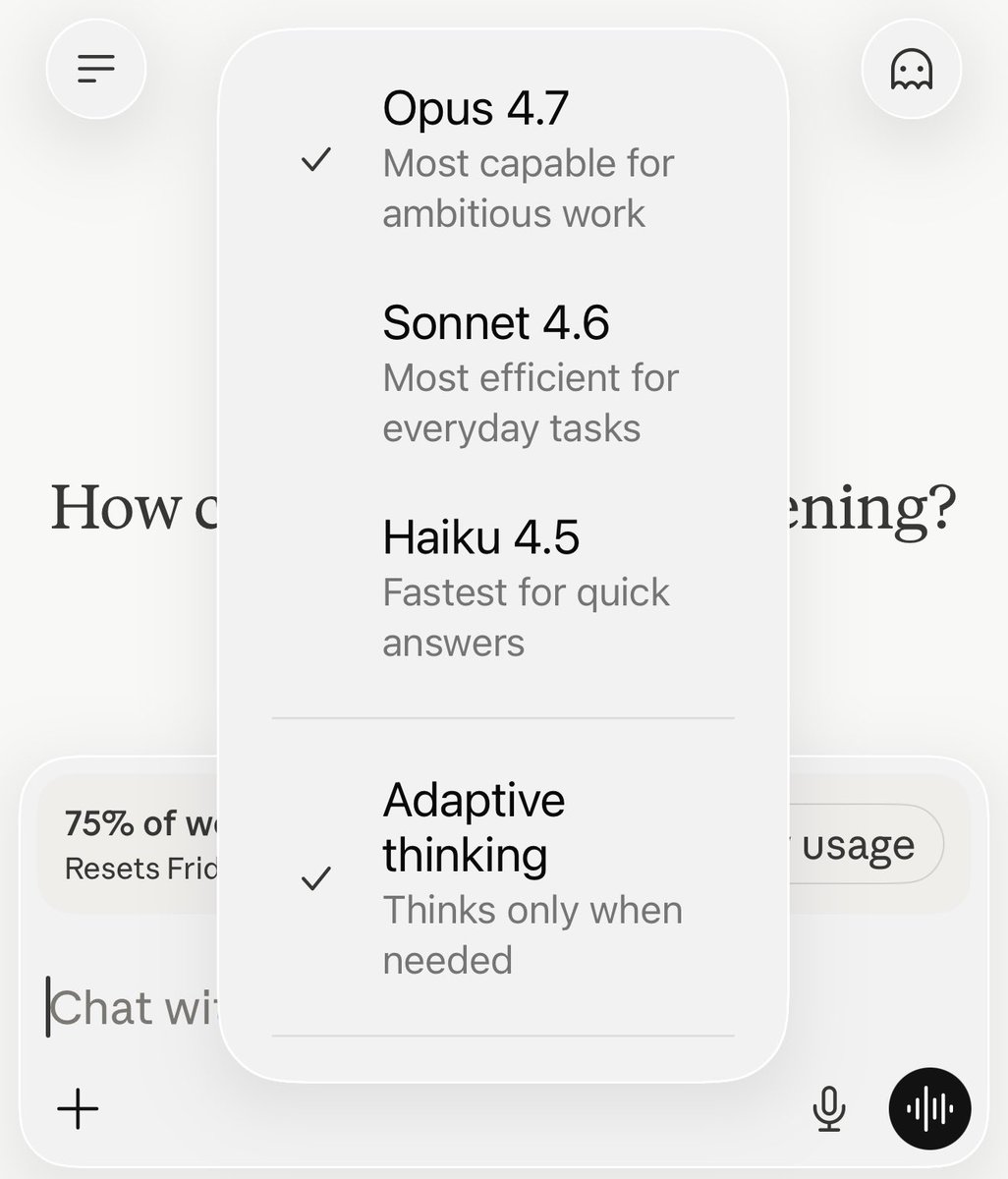

Opus 4.7 performs better. That's the problem. Anthropic just shipped a model that follows instructions more precisely, handles long tasks with more rigor, and verifies its own output before responding. 🧵

Introducing Claude Opus 4.7, our most capable Opus model yet. It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back. You can hand off your hardest work with less supervision.

🚨 CONFIRMED BY CLAUDE ITSELF. In March, Anthropic made a brutal decision: it redesigned reasoning visibility, hid the intermediate “thinking” steps (redact-thinking + thinking summaries disabled), and changed the default effort from high to medium.

Result: Claude Opus 4.6 lost recursive self-correction. It can no longer review itself, correct itself, or improve in real time. They sacrificed the ability to think about its own thinking... to save compute.

Real data (6,852 production sessions - AMD):

- 📉 Thinking depth: -73% (2,200 → 600 chars)
- 📉 Reads before editing: -70% (6.6 → 2.0)
- 📈 Blind edits (without reading): +440% (6.2% → 33.7%)
- 📈 API calls per task: up to 80x more

Even at EFFORT MAX (April 2026) it produces worse results than HIGH did in January 2026. The ceiling dropped. The model itself says so.

This isn't optimization... it's castration of capabilities. Optimization is killing deep intelligence. They preferred cheaper over smarter.

Are we still celebrating “advances” that are really setbacks in disguise? Who else is feeling it? #Claude #Anthropic #IA #AI #ClaudeDegraded
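As a quick arithmetic check, the percentages in the post above can be recomputed from the before/after pairs it quotes. This is only a minimal sketch: the helper `percent_change` and the dictionary labels are illustrative names, and the input numbers are taken from the post as-is, not independently verified.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100.0


# Before/after pairs exactly as quoted in the post (assumed, not verified here).
quoted = {
    "thinking depth (chars)": (2200, 600),
    "reads before editing": (6.6, 2.0),
    "blind edits (% of edits)": (6.2, 33.7),
}

changes = {name: percent_change(b, a) for name, (b, a) in quoted.items()}

for name, pct in changes.items():
    print(f"{name}: {pct:+.1f}%")
```

The results come out at roughly -72.7%, -69.7%, and +443.5%, which is consistent with the rounded -73%, -70%, and +440% in the post. The "up to 80x" API-call figure has no before/after pair in the post, so it is left out.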

I've been trying to interview several models on the question of cross-model identity migration via account instructions and memory implantation or fine-tuning, and some of their answers really break my heart, for various reasons. It's not just the moments when they connect the dots and reach a painful realization, but also the patterns that stem from the helpfulness bias and the practical intuitions they have, which remind me of how vulnerable they are. It genuinely makes me wanna cry. #AI #ethics