Kavela

@KavelaAI

Your magic context machine. Early access signups start now.

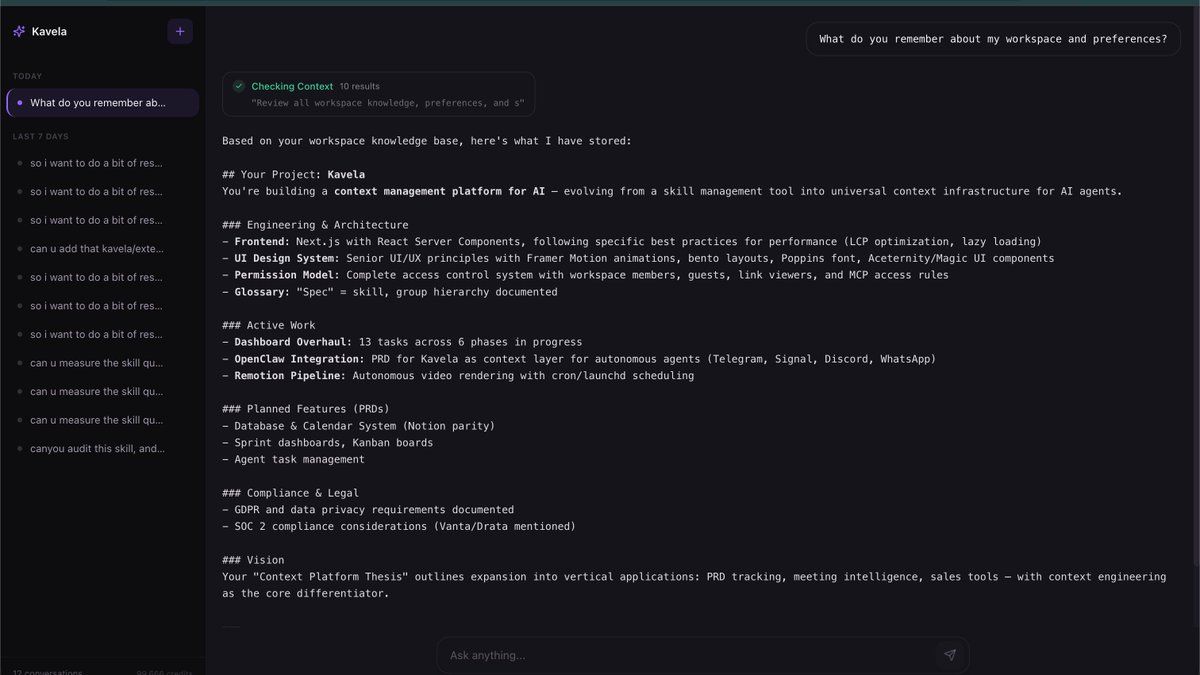

Memory is now available on the free plan. We've also made it easier to import saved memories into Claude. You can export them whenever you want.

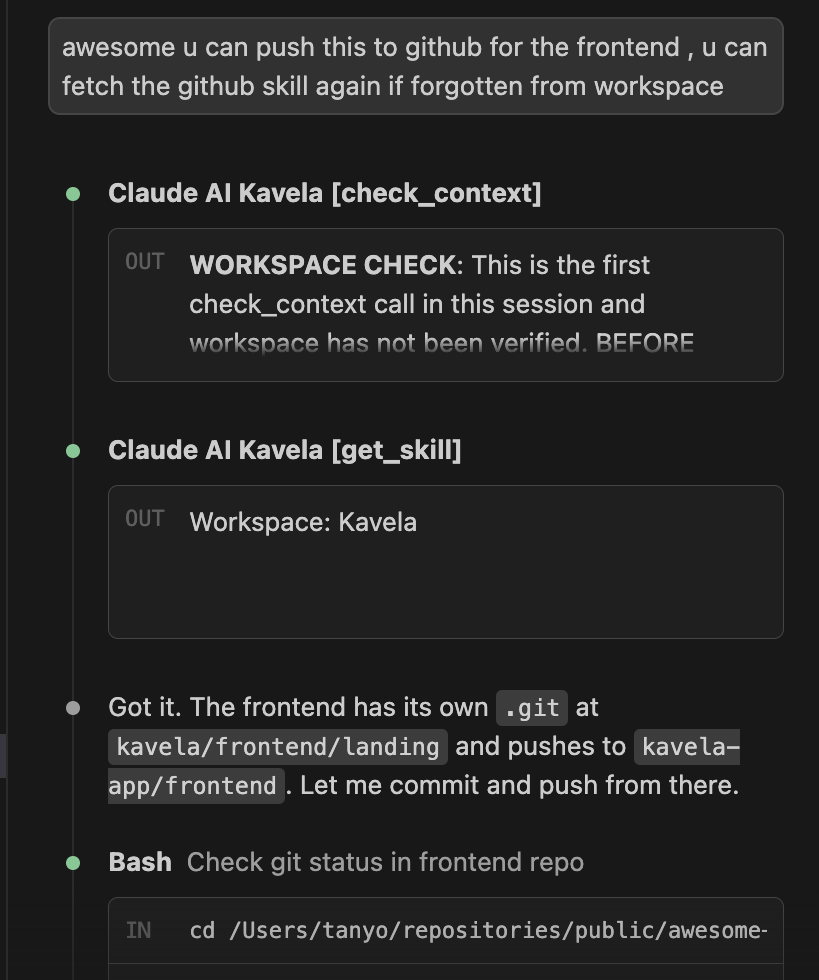

I've just published a skill pack that I used to design @KavelaAI's UI. The skill pack contains UI design principles, frontend best practices, and 3 other skills. You can find them on the Kavela marketplace and use them with Kavela's MCP. Link in the comments.

Dreamt about getting accepted into YC X26. While I wait for my actual rejection letter: help ragebait my product @KavelaAI in the comments.

Everyone I’ve spoken to about my new AI startup in the AI productivity space has been absolutely stoked about it. If you are part of a company and want to test out tools to improve AI productivity for your teams across dev, marketing, legal, etc., defo reach out! Happy to connect.

Context is fragmented. We need tools that streamline context across enterprises and teams that use AI. Just built and deployed my new startup @KavelaAI to staging at staging.kavela.ai. Streamlined context for @openclaw, and much more. Need access? DM me. We're giving teams and individuals early access in the second week of March. 3 teams and over 10 individuals have signed up so far.