Kwangjun Ahn

73 posts

Kwangjun Ahn

@KwangjunA

Researcher at NVIDIA // ex-Researcher at Microsoft, PhD from MIT EECS

they also dropped fsdp2 optimized muon. though they don't use muon for 2.6b dense model, i think it's just beginning and they are preparing larger one. they pipeline muon's comm-comp with calc flops and the code is neat. not sure if it's existing method. huggingface.co/Motif-Technolo…

Since nobody asked :-), here is my list of papers not to be missed from ICML: 1) Dion: distributed orthonormalized updates (well, technically not at ICML, but everyone's talking about it). 2) MARS: Unleashing the Power of Variance Reduction for Training Large Models 3) ...

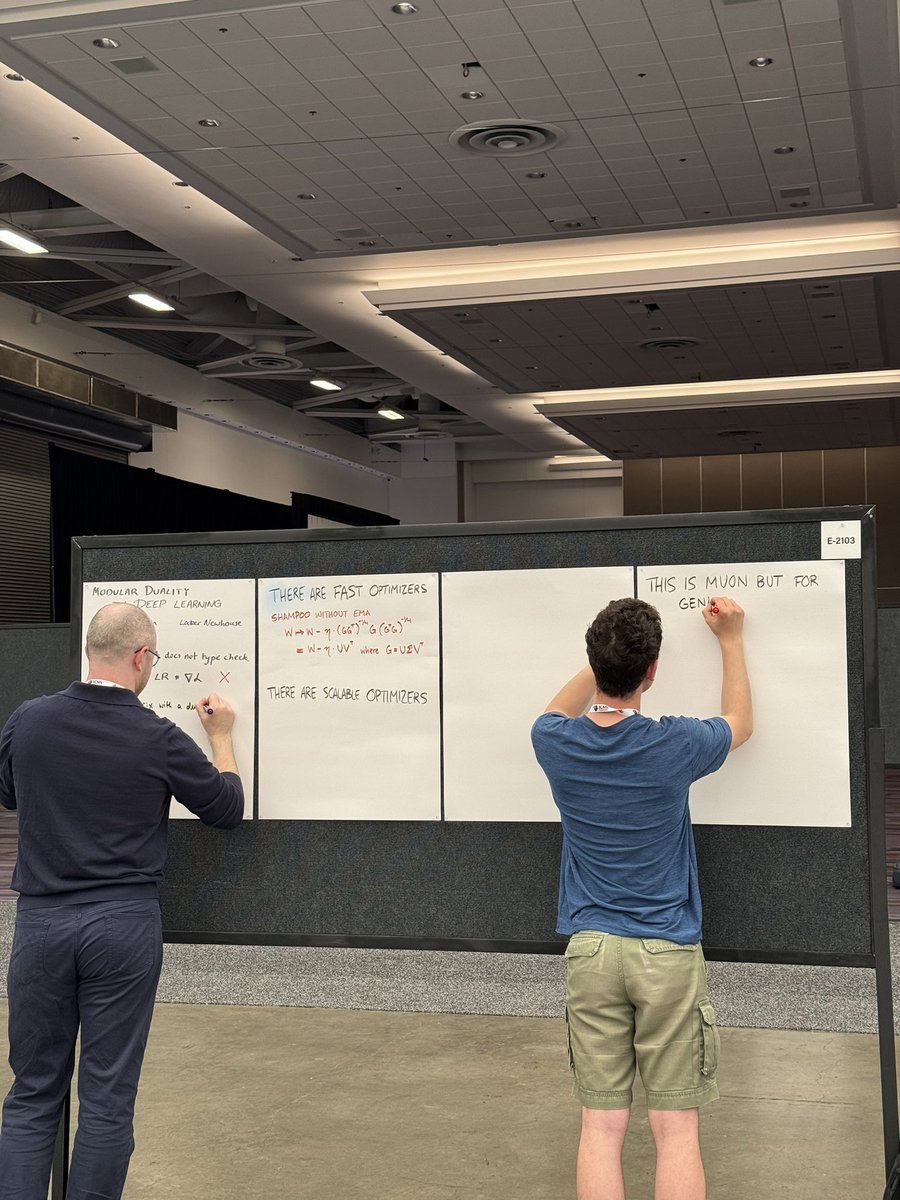

Laker and I are presenting this work in an hour at ICML poster E-2103. It’s on a theoretical framework and language (modula) for optimizers that are fast (like Shampoo) and scalable (like muP). You can think of modula as Muon extended to general layer types and network topologies