Sabitlenmiş Tweet

We just launched a new short course with @DeepLearningAI

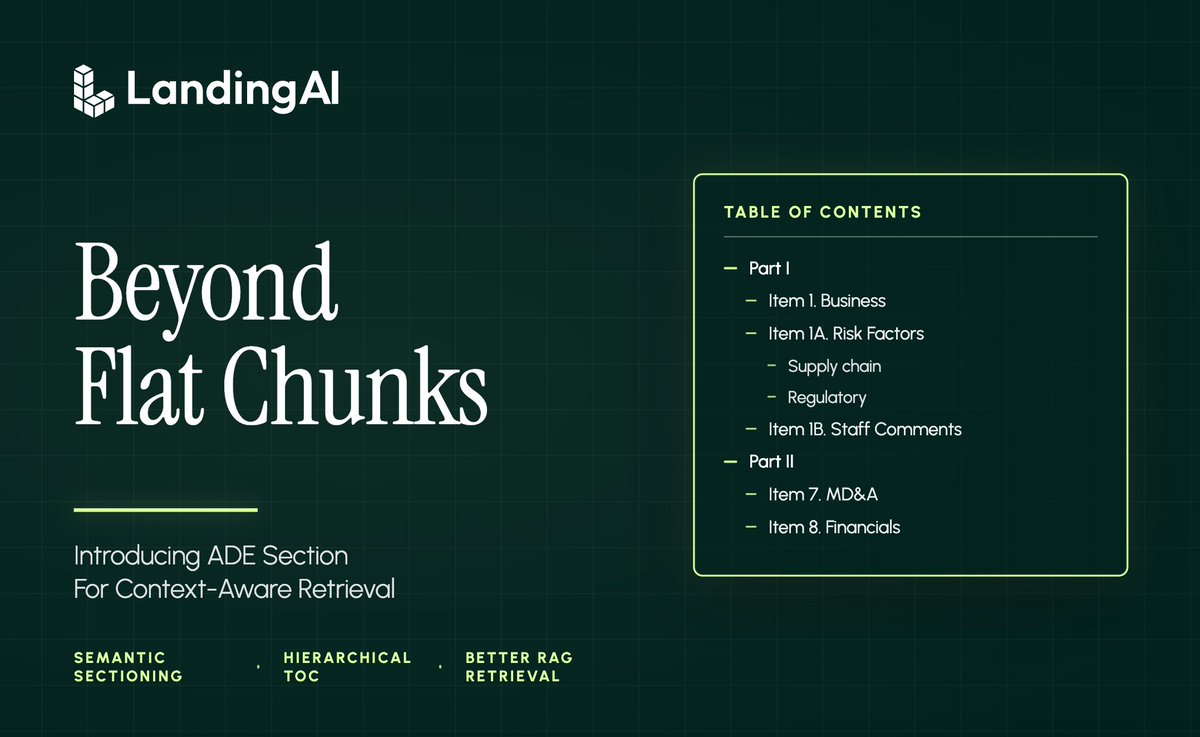

Document AI: From OCR to Agentic Doc Extraction.

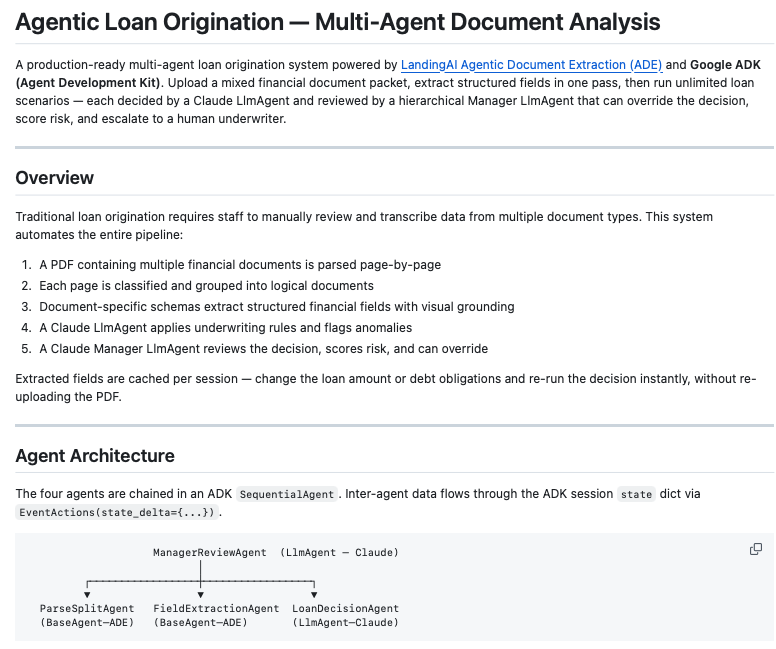

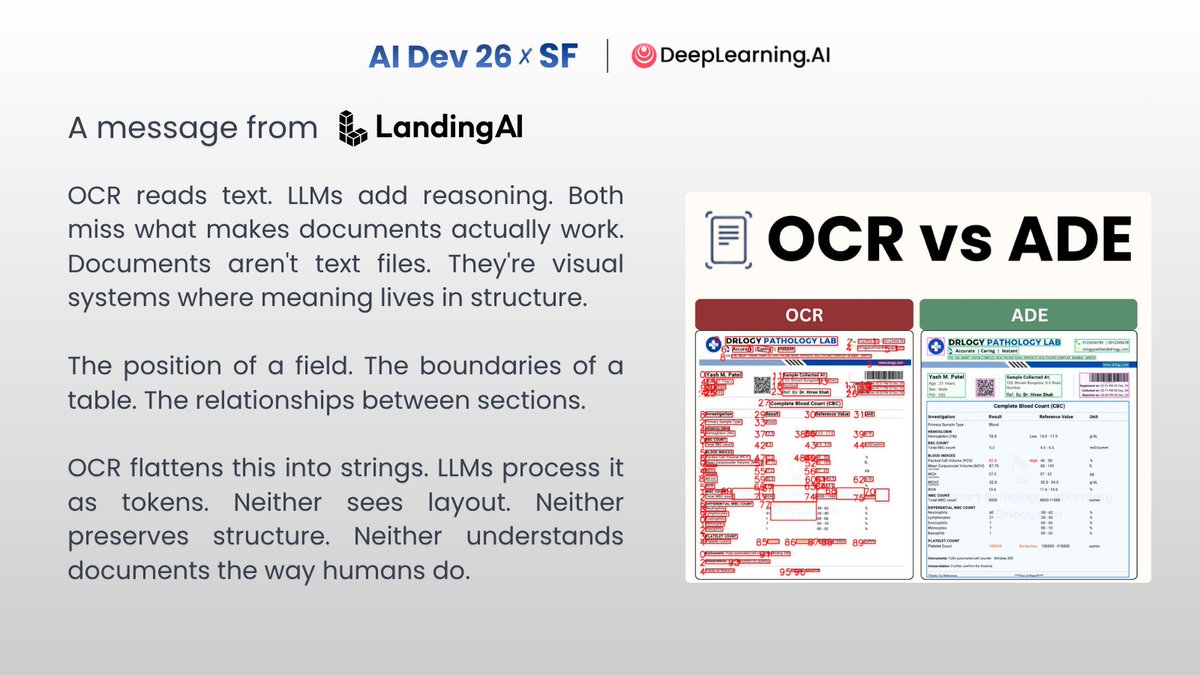

This course shows how to move beyond OCR by building agentic document pipelines that preserve layout, reading order, and visual context when extracting structured data from complex PDFs and images.

Free to enroll here: bit.ly/4bpV2rG

English