NobuYa

217 posts

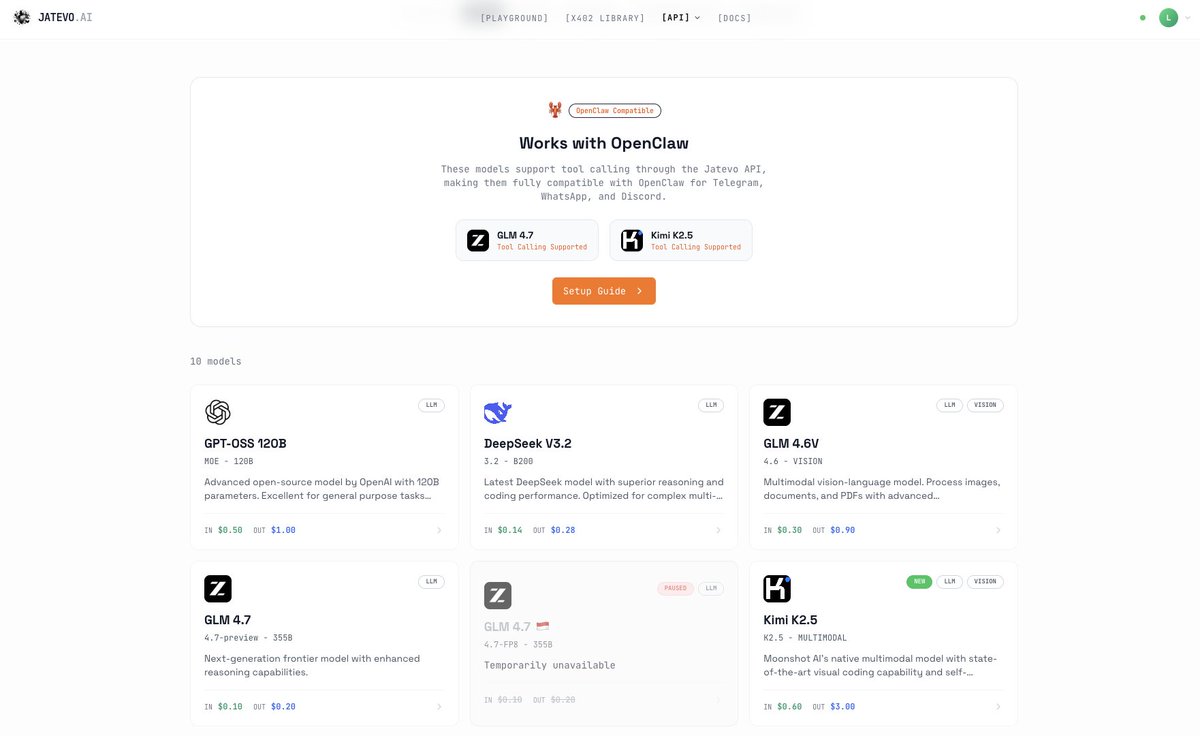

New model update for @JatevoId: our GLM 4.6 deployment supports up to 500 tokens per second, with a total throughput of up to 600 million tokens per day. It will be available through the $jtvo LLM API and the x402 endpoints. Currently, access is limited to jatevo.ai/app.
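A quick sanity check on those two numbers, as a rough sketch. It assumes the 500 tokens per second figure is a per-stream peak and the 600 million tokens per day figure is an aggregate cap; the post does not say which is which, and the variable names are illustrative only.

```python
# Back-of-the-envelope check of the quoted throughput figures.
# Assumption (not stated in the post): 500 tokens/s is a per-stream peak,
# and 600M tokens/day is the aggregate cap across all streams.
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

per_stream_tps = 500
daily_token_cap = 600_000_000

# Sustained rate needed to use the whole daily cap.
aggregate_tps = daily_token_cap / SECONDS_PER_DAY

# How many full-speed streams that sustained rate corresponds to.
equivalent_streams = aggregate_tps / per_stream_tps

print(f"{aggregate_tps:.0f} tokens/s sustained")          # 6944 tokens/s sustained
print(f"{equivalent_streams:.1f} full-speed streams")     # 13.9 full-speed streams
```

Under that reading, the daily cap works out to roughly 14 streams running flat out around the clock.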

for every closed model, there's an open source alternative

sonnet 4.5 → glm 4.6 / minimax m2
grok code fast → gpt-oss 120b / qwen 3 coder
gpt 5 → kimi k2 / kimi k2 thinking
gemini 2.5 flash → qwen 2.5 image
gemini 2.5 pro → qwen3-235-a22b
sonnet 4 → qwen 3 coder

We’ve just added @Zai_org GLM 4.6 to our platform. Wondering what it can do in two minutes? Here’s a fully functional website, generated from a single prompt that produced 10k tokens in under 30 seconds. See the results: site.jatevo.ai/sites/quantum-2 Integration with x402 endpoints is coming soon.

Build and let them work.

"Just use LLM providers like OpenAI"

Until you need to:
• Prove the model being used is what you're paying for
• Prove your prompt hasn't been modified
• Prove your response hasn't been tampered with
• Bring inference results on-chain
• Build anything that touches money

The moment AI makes decisions with financial consequences, verifiable inference becomes table stakes.

And now that verifiable AI is as simple to use as arbitrary inference providers like OpenAI, it also makes sense to enable it by default for a large design space outside of just finance.

What if @openrouter only had open-source models and was accessible through x402?

@lucaxyzz is building just that.

FYI: OpenRouter raised a $12.5M seed & a $28M Series A with a16z.