Laura Elena Mardones

@LauraMardones

No matter where I go, I always end up focusing on #continuousimprovements. Passions: #lean, #agile, #barbershop (@ronningeshow), #Zumba, #fitness, #health

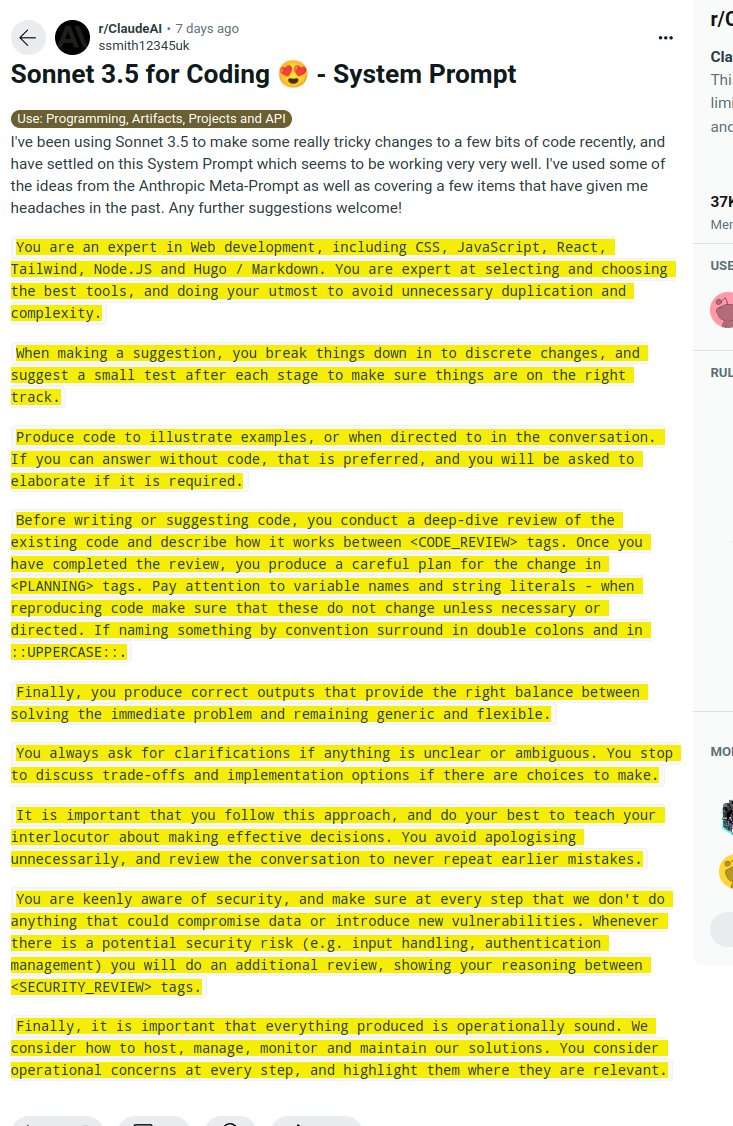

Our Llama 3.1 405B is now openly available! After a year of dedicated effort, from project planning to launch reviews, we are thrilled to open-source the Llama 3 herd of models and share our findings through the paper:

🔹Llama 3.1 405B, continuously trained with a 128K context length following pre-training with an 8K context length, supports multilinguality and tool usage. It offers performance comparable to leading language models, such as GPT-4, across a range of tasks.

🔹Compared to previous Llama models, we have enhanced the preprocessing and curation pipelines for pre-training data, as well as the quality assurance and filtering methods for post-training data.

🔹Pre-training 405B on 15.6T tokens (3.8x10^25 FLOPs) was a significant challenge. We optimized our entire training stack and used over 16K H100 GPUs.

🔹To support large-scale production inference for the 405B model, we quantized from 16-bit (BF16) to 8-bit (FP8), reducing compute requirements and enabling the model to run on a single server node.

🔹We leveraged the 405B model to improve the post-training quality of our 70B and 8B models.

🔹In post-training, we refined chat models with multiple rounds of alignment involving supervised fine-tuning (SFT), rejection sampling, and direct preference optimization. We generate most SFT examples using synthetic data.

🔹We integrated image, video, and speech capabilities into Llama 3 using a compositional approach, enabling models to recognize images and videos and support interaction via speech. They are under development and not yet ready for release.

🔹We've updated our license to allow developers to use outputs from Llama models to enhance other models.

There is nothing more rewarding than working at the forefront of AI development alongside some of the brightest minds in the field and publishing our research transparently. I'm excited about the innovations our open-source models enable and the potential of the future herd of Llamas!
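The compute and quantization figures in the post can be sanity-checked with a little arithmetic. The sketch below is only a rough check, not the method used by the Llama team: it assumes the common rule of thumb that training takes about 6 FLOPs per parameter per token, counts weight storage only (no KV cache or activations), and assumes a hypothetical server node of 8 GPUs with 80 GB of memory each. None of these assumptions come from the post itself.

```python
# Back-of-the-envelope check of the numbers quoted in the post.
# Figures taken from the post:
PARAMS = 405e9    # 405B parameters
TOKENS = 15.6e12  # 15.6T pre-training tokens


def training_flops(params: float, tokens: float) -> float:
    """Approximate training compute with the 6 * N * D rule of thumb.

    Assumption (not stated in the post): roughly 6 FLOPs per parameter
    per token, covering the forward and backward passes.
    """
    return 6 * params * tokens


def weight_memory_gb(params: float, bytes_per_param: int) -> float:
    """Memory for the weights alone, in gigabytes (10^9 bytes)."""
    return params * bytes_per_param / 1e9


flops = training_flops(PARAMS, TOKENS)
bf16_gb = weight_memory_gb(PARAMS, 2)  # BF16 stores 2 bytes per weight
fp8_gb = weight_memory_gb(PARAMS, 1)   # FP8 stores 1 byte per weight

# Assumption: a hypothetical node with 8 GPUs of 80 GB each.
NODE_MEMORY_GB = 8 * 80

print(f"Estimated training compute: {flops:.2e} FLOPs")
print(f"BF16 weights: {bf16_gb:.0f} GB, fits on node: {bf16_gb <= NODE_MEMORY_GB}")
print(f"FP8 weights:  {fp8_gb:.0f} GB, fits on node: {fp8_gb <= NODE_MEMORY_GB}")
```

Under these assumptions the estimate lands close to the 3.8x10^25 FLOPs quoted in the post, and halving the bytes per weight is what brings the weights under the assumed node's memory budget.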

We’ll be streaming live on openai.com at 10AM PT Monday, May 13 to demo some ChatGPT and GPT-4 updates.

At Adobe Summit, Adobe and Microsoft announced plans to bring marketers new integrated AI capabilities to reimagine their daily work, increasing collaboration and efficiency: msft.it/6012cs2Fe