LeapLab@THU

15 posts

@LeapLabTHU

Tsinghua University, China · Joined December 2023

44 Following · 111 Followers

LeapLab@THU retweeted

🔬 Read the paper: arxiv.org/abs/2409.00342

💻 Code & pretrained models: github.com/LeapLabTHU/Ada…

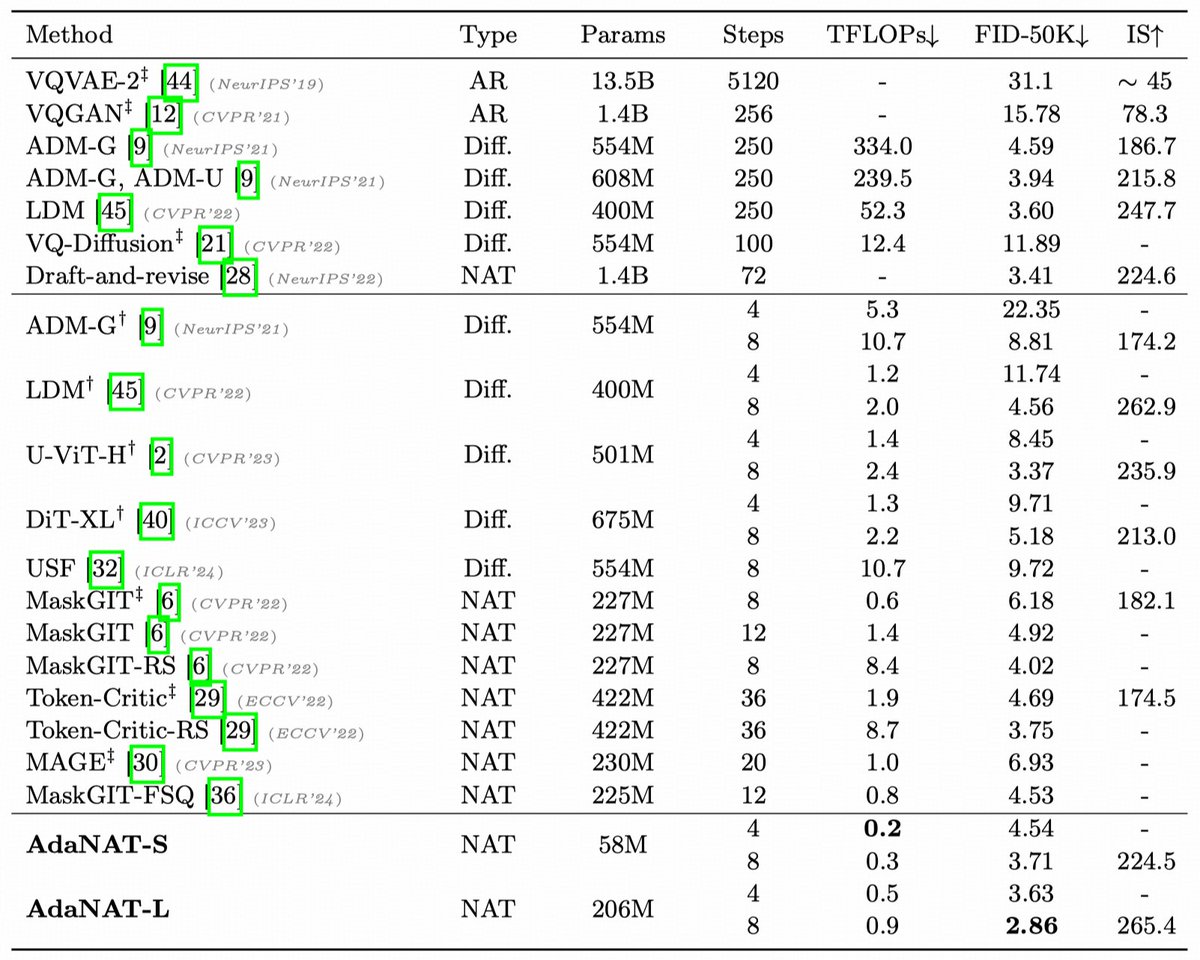

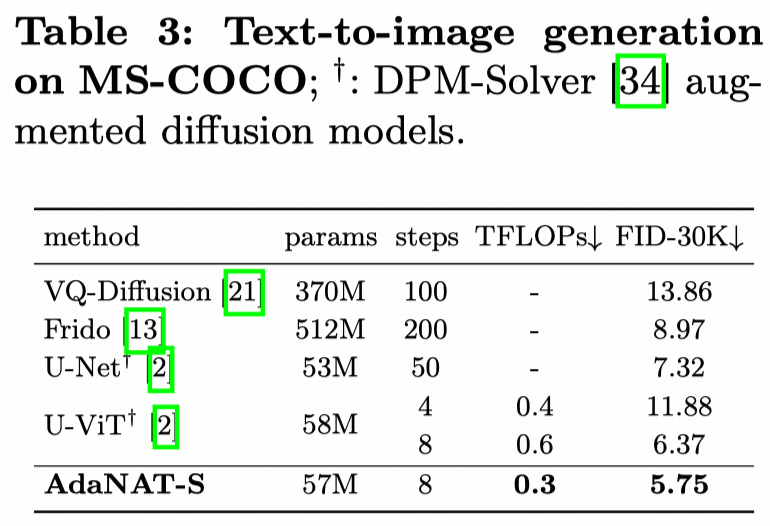

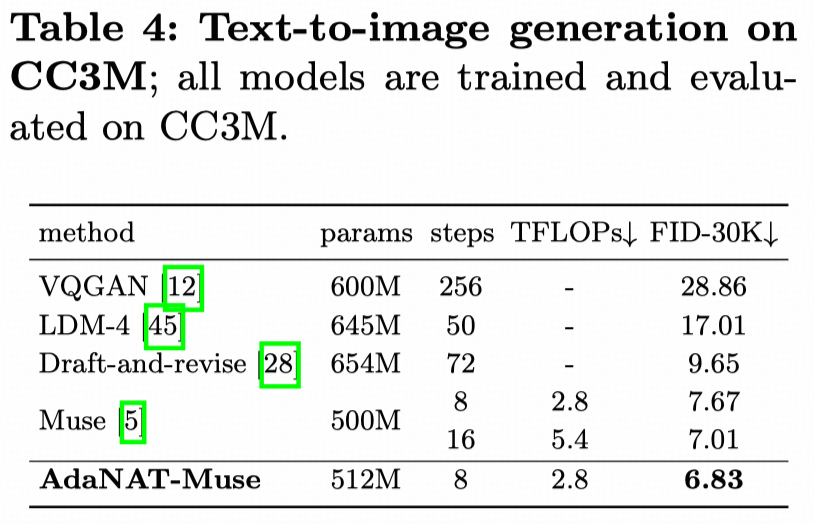

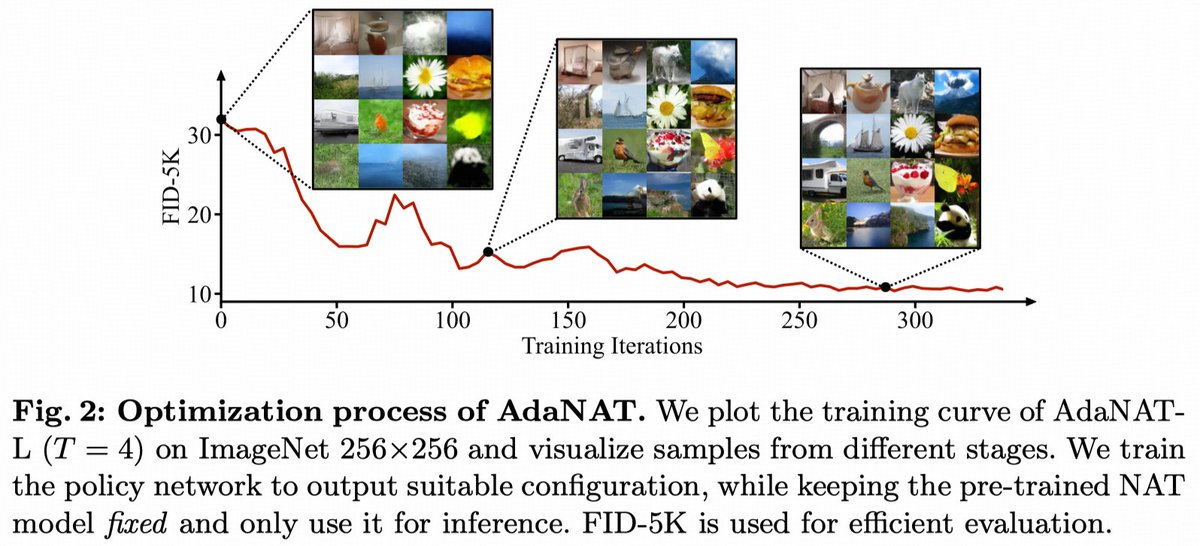

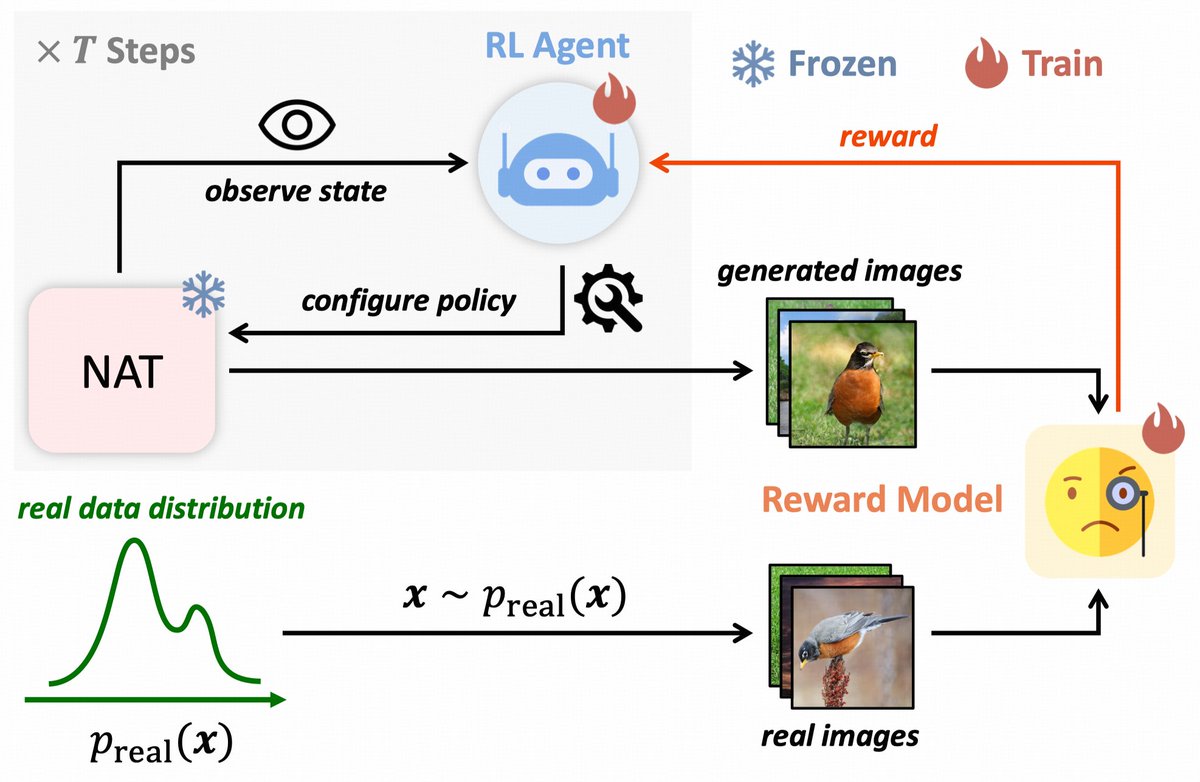

🚀 Excited to share our work on #ECCV2024: "AdaNAT: Exploring Adaptive Policy for Token-Based Image Generation".

🖼️ We introduce AdaNAT, a novel approach for efficient and high-quality image generation using adaptive policies in Non-autoregressive Transformers.

LeapLab@THU retweeted

Fantastic work done with @ALucyBrilliant @RayLu_THU @AndrewZ45732491. Direct parameter editing can modulate an LLM's behavior.

Lucy@ALucyBrilliant

📢 New paper alert! 📢 🧠💉"Model Surgery: Modulating LLM's Behavior Via Simple Parameter Editing" 🚀 Modulate LLM behaviors through direct parameter editing. 🚀 Achieve up to 90% detoxification with inference-level computational cost! 💡🤖 arXiv: arxiv.org/abs/2407.08770

LeapLab@THU retweeted

📢 Excited to share our recent work on Large Multimodal Models: ConvLLaVA. Instead of encoding multiple image patches or using multiple encoders, we use a hierarchical backbone, ConvNeXt, to achieve high-resolution understanding.

arxiv.org/pdf/2405.15738

EfficientTrain++ has been accepted by TPAMI2024🤩

🔥An off-the-shelf, easy-to-implement algorithm for training foundation visual backbones efficiently!

🔥1.5−3.0× lossless training/pre-training speedup on ImageNet-1K/22K!

Paper&Code:

arxiv.org/abs/2405.08768

github.com/LeapLabTHU/Eff…

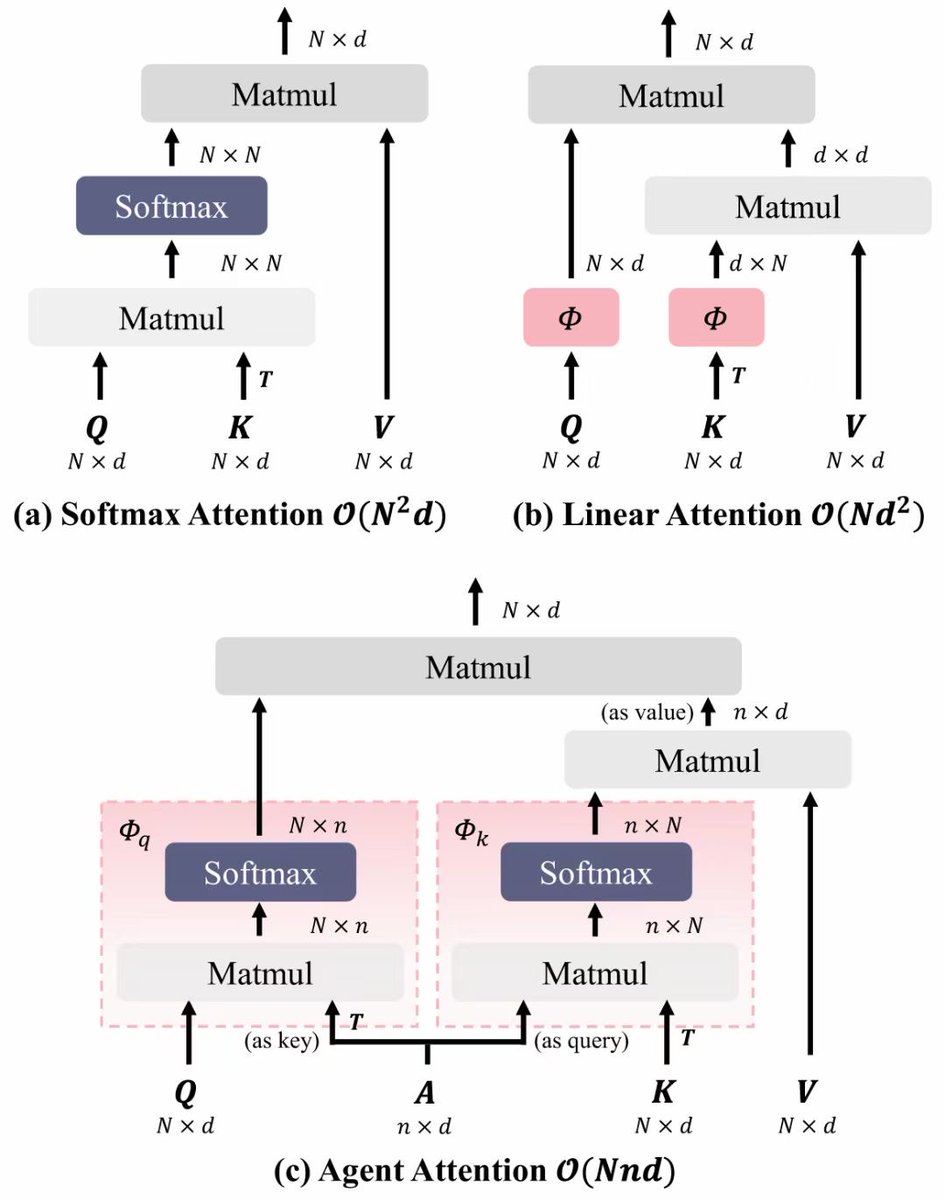

Our recent work: Agent Attention!

[High Performance & Linear Complexity]

[Double the speed of SD and enhance generation quality, no additional fine-tuning is required]

Paper and code:

huggingface.co/papers/2312.08…

arxiv.org/abs/2312.08874

github.com/LeapLabTHU/Age…

Excited to share our #NeurIPS2023 spotlight paper! 🌟 It proposes a novel offline-to-online RL algorithm, efficiently utilizing collected samples by training a family of policies offline and selecting suitable ones online. Check out our paper for details! arxiv.org/abs/2310.17966

LeapLab@THU retweeted

ExpeL has been accepted at #AAAI24! The code and camera-ready version will be updated promptly. Thanks to all the collaborators, and see you in Vancouver!

Andrew Zhao@_AndrewZhao

🚀🚀🚀 Our new preprint introduces ExpeL, an LLM agent designed for self-improvement 🤖. ExpeL can automatically gather experience, extract insights, and recall successful instances across tasks 🧠. Check out our paper at arxiv.org/abs/2308.10144.

LeapLab@THU retweeted

Check us out at our #NeurIPS2023 poster! We investigate the Q-value divergence phenomenon in offline RL and find self-excitation to be the main cause. Using LayerNorm in RL models can fundamentally prevent this from happening. arxiv.org/pdf/2310.04411…

Our recent work: Agent Attention!

[High Performance & Linear Complexity]

[Accelerate and improve Stable Diffusion, no additional fine-tuning is required]

The paper and code have been released:

arxiv.org/abs/2312.08874

github.com/LeapLabTHU/Age…
