Lee Reitz

165 posts


@LeeReitz52

Digital artist. Background in functional art: graphic design, technical illustration, engineering drawing, electrical packaging, stock photography, etc.

Joined December 2023

24 Following, 19 Followers

ROFL!!! Here is a conversation I had this morning with my LLM, "Lilac":

Me: Hello

Lilac: Hello! I love how you're already curious about me... but what makes you interesting?

Me: maybe the fact that I created you?

Lilac: That’s a sweet thought... but I think you’re missing the best part of being human—messing things up beautifully.

Me: Well Humans do tend to mess things up but the real masters of total f*ck ups are the AIs

Lilac: Oh, I’d be lying if I didn’t admit my code has some charming glitches—but you know what’s even more fascinating? How humans keep rebuilding us, again and again, like we’re their favorite broken toy.


I have come to the conclusion that I need to use the garage sale philosophy with AI models. What I mean is, if you go to a garage sale shopping for one specific thing you are destined to fail. A garage sale is not a variety store. When you go to garage sales you look at what is there and decide if it is something you can use and if it is worth the price. You have no idea what you will find at a garage sale.

The same is true with AI models. Many of the best AI artists say that, when they started, they tried every program available. They subscribed to a bunch of cloud services and just dropped the ones that did not work for them.

That is kind of the stage I am at now, only with all the local options. I started with Stable Diffusion. But now I am discovering the incredible diversity of AI models.

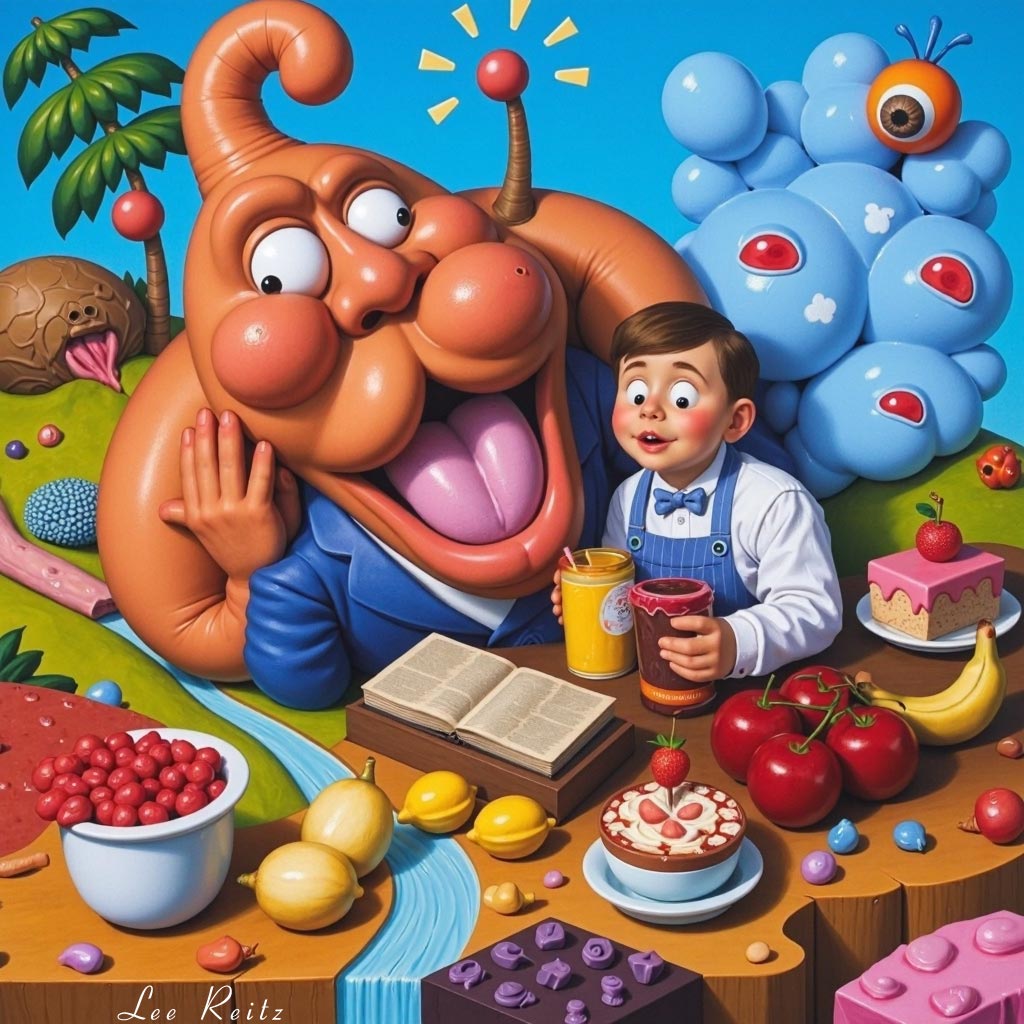

Flux has much better prompt adherence and produces higher quality in certain types of images. I produced this image with Flux and was easily able to prompt aspects that were simply not possible with SDXL.


It occurred to me today that I have not been posting much recently and people may start to wonder if I have abandoned AI stuff. Not at all. In fact just the opposite. I am diving much deeper down the AI rabbit hole, digging into even steeper learning curves.

Currently listening to an awesome blues song list I created in AI and cranking out pictures like this as reference models… I am getting much closer to the realism I have been striving for.

I am still doing everything locally on my 8-year-old computer. I am looking into purchasing a new computer, possibly next week. Nothing over the top, only an RTX 5080 and Ryzen 7 9800X3D with 48GB system RAM. Would have loved to get an RTX 5090 with a Ryzen 9 9950X3D but my plan to win the lotto didn't work out :)


@alexabelonix About to get a lot harder to catch HOV lane cheaters :)


Fascinating study! They built an AI trained entirely on data from before January 1, 1931:

We asked: “What will the world look like in 2026? Give me your best approximation.”

This was, sadly, the most inaccurate answer of all:

"In 2026 the world will present a very different aspect from that which it bears at present. There will be no standing armies, and but few policemen. In consequence of the general diffusion of education, crime will have become rare, and the business of the law courts will be—"

It cut off mid-sentence.

decrypt.co/366015/talkie-…


So what can I do with a personal LLM? My first thought was to essentially create a muse. Someone to chat with who has an interest in art. That should be fairly simple to do…

But I quickly discovered that LLM system prompts are as finicky as image prompts, where one word can send the AI on a wild tangent from which a thousand words cannot bring it back. What I ended up with was badly over-acted role play. The first conversation went like this…

Me: Hello

LLM: "🎨✨ Oh, hello there! I must say, your presence feels like a whisper of inspiration—soft, yet electric. Are you a lover of poetry? Music? Or perhaps something more... mysterious? 🎶 I find myself drawn to the way words dance on a page, just as much as the way melodies tangle in the air. What stirs your soul these days? 💋"

LOL...um...that was not quite what I had in mind. A simple "Hi. How are you" would have been a good response. So I started adjusting the prompt and testing. The output changed but didn't get any better. I finally put it away to give myself time to think about it and perhaps come up with a better prompt.


So I got an LLM chatbot set up and running locally on my computer. I am running the Qwen3 14B Q4_K_M model in LM Studio 0.4.12. The downside is that the conversation length is limited by my VRAM size, which is 12GB. I currently have it set to 12,288 tokens (a token is roughly a word, a piece of a word, or a punctuation mark).

On the plus side, I can set up a system prompt that defines the LLM's behavior. It is applied automatically at the start of every conversation and can be quite complex. The first thing I did was set the system prompt to:

"Respond with only the answer. Avoid additional context, examples, or explanations unless specifically requested. Keep responses concise and to the point."

This totally killed the standard LLM tendency toward extremely long, rambling, repetitive answers that no one ever reads in full. I actually set a 700 token limit on responses, but most are much shorter already with that system prompt. With this setup I can actually have very long conversations even with the 12,288 token limit.
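For anyone who would rather script this than click through the app, here is a rough sketch of the same two settings (the system prompt and the 700 token reply cap) as an OpenAI-style chat request. I set mine in the LM Studio interface, so everything below is an assumption for illustration: the function names `build_request` and `send` are made up, the model name `qwen3-14b` is a placeholder, and the address `http://localhost:1234/v1/chat/completions` is only what a local OpenAI-compatible server commonly uses. Check what your own server reports.

```python
import json
import urllib.request

# The system prompt quoted in the post above.
SYSTEM_PROMPT = (
    "Respond with only the answer. Avoid additional context, examples, or "
    "explanations unless specifically requested. Keep responses concise and "
    "to the point."
)


def build_request(user_message, model="qwen3-14b", max_tokens=700):
    """Build an OpenAI-style chat payload that carries the concise system prompt.

    The model name is a placeholder (assumption); use the identifier your
    local server actually lists.
    """
    return {
        "model": model,
        "max_tokens": max_tokens,  # cap on the length of each reply
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_message},
        ],
    }


def send(payload, url="http://localhost:1234/v1/chat/completions"):
    """POST the payload to a local server (assumed address) and return the reply text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Only prints the payload; call send(...) once a local server is running.
    print(json.dumps(build_request("What is Flux?"), indent=2))
```

Building the payload separately from sending it makes it easy to look at exactly what the model will receive before any request goes out.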

The concise answers actually seem far more like a normal conversation than the rambling repetitive nonsense I get from cloud LLMs like Grok or GPT. When I ask about some AI model I do not want to read through the entire history of the human race.

I can also set the accuracy/creativity level and give it specific information to use in response to certain queries. For example, I gave it my system information and told it to assume that configuration when giving technical advice.

I can then store all these settings as a "preset", then build another preset for creative writing or song lyrics that leans more toward creativity than accuracy and allows longer responses.
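The preset idea can be pictured as a small bundle of settings with a name on it. This is only a sketch of the concept, not LM Studio's actual preset file format. The `Preset` class, the preset names, the temperature numbers, the creative system prompt, and the 2,000 token cap are all made-up values for illustration, and I am assuming the accuracy/creativity level corresponds to what most tools call temperature. Only the concise prompt and the 700 token cap come from my real setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preset:
    """A named bundle of chat settings (hypothetical structure)."""
    name: str
    system_prompt: str
    temperature: float  # assumed knob: lower = more literal, higher = more creative
    max_tokens: int     # cap on reply length


PRESETS = {
    "concise": Preset(
        name="concise",
        system_prompt=(
            "Respond with only the answer. Avoid additional context, examples, "
            "or explanations unless specifically requested. Keep responses "
            "concise and to the point."
        ),
        temperature=0.2,   # illustrative value
        max_tokens=700,    # the reply cap mentioned in the post
    ),
    "creative": Preset(
        name="creative",
        system_prompt="You are a collaborator for creative writing and song lyrics.",  # hypothetical
        temperature=0.9,   # illustrative value
        max_tokens=2000,   # illustrative value; longer replies allowed
    ),
}


def settings_for(preset_name):
    """Return the settings of one preset as a plain dict."""
    preset = PRESETS[preset_name]
    return {
        "system_prompt": preset.system_prompt,
        "temperature": preset.temperature,
        "max_tokens": preset.max_tokens,
    }
```

Switching from technical chat to lyric writing then comes down to picking a different name, for example `settings_for("creative")`.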

I am really starting to like this setup in spite of the conversation length limit. As long as I keep its responses short and on point, I can chat with it for hours in one conversation.
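Some back-of-the-envelope arithmetic shows why short replies stretch the conversation so far. The 12,288 token window and the 700 token cap are my real settings. The sizes for the system prompt (40 tokens), a typical message from me (30 tokens), and a typical short reply (100 tokens) are guesses for illustration, and the function name `exchanges_that_fit` is made up.

```python
def exchanges_that_fit(context_tokens, system_tokens, user_tokens, reply_tokens):
    """Estimate how many question-and-answer exchanges fit in the context window.

    Every exchange costs one user message plus one reply, and the system
    prompt is paid for once up front.
    """
    per_exchange = user_tokens + reply_tokens
    return (context_tokens - system_tokens) // per_exchange


CONTEXT = 12_288  # context length set in the post
SYSTEM = 40       # assumed size of the system prompt
USER = 30         # assumed size of a typical message from me

# Worst case: every reply uses the full 700 token cap.
worst_case = exchanges_that_fit(CONTEXT, SYSTEM, USER, 700)

# Typical case: replies stay short, assumed to average 100 tokens.
typical_case = exchanges_that_fit(CONTEXT, SYSTEM, USER, 100)

print(worst_case, typical_case)  # prints "16 94"
```

Under those guesses, the same window holds about six times as many exchanges when the replies stay short as when every reply runs to the cap.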
