Leonard Berrada

@LeonardBerrada

Senior Research Scientist @GoogleDeepMind

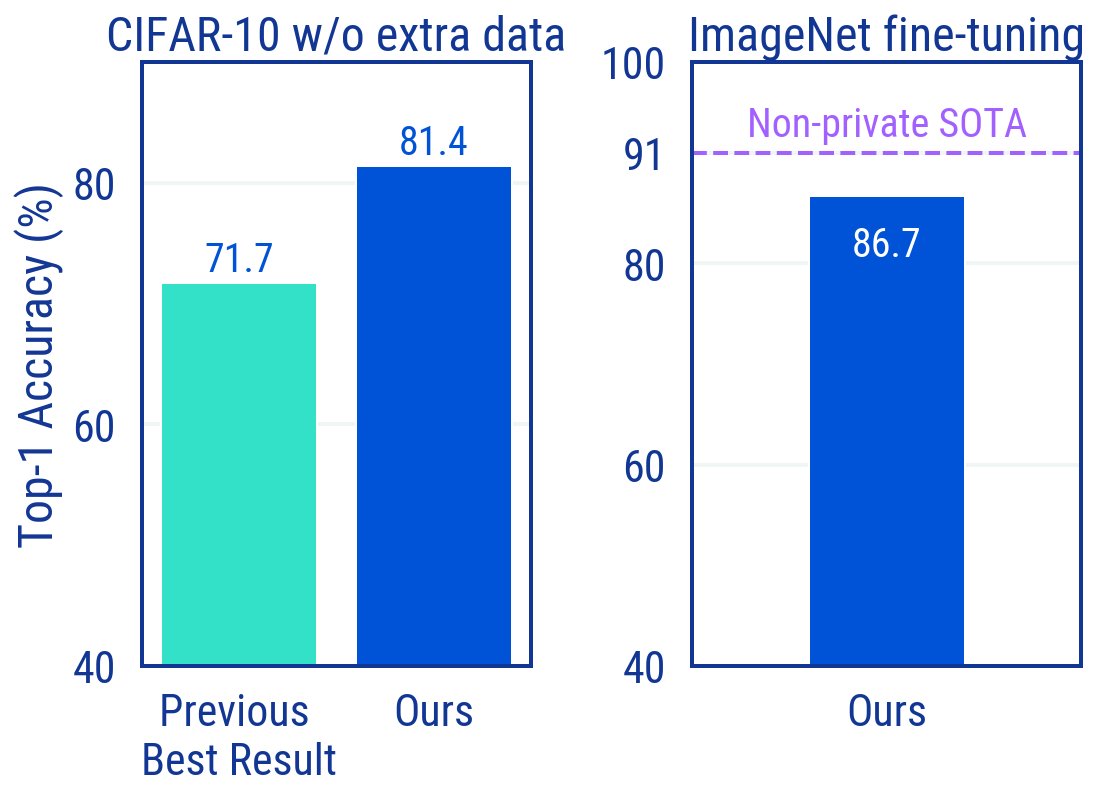

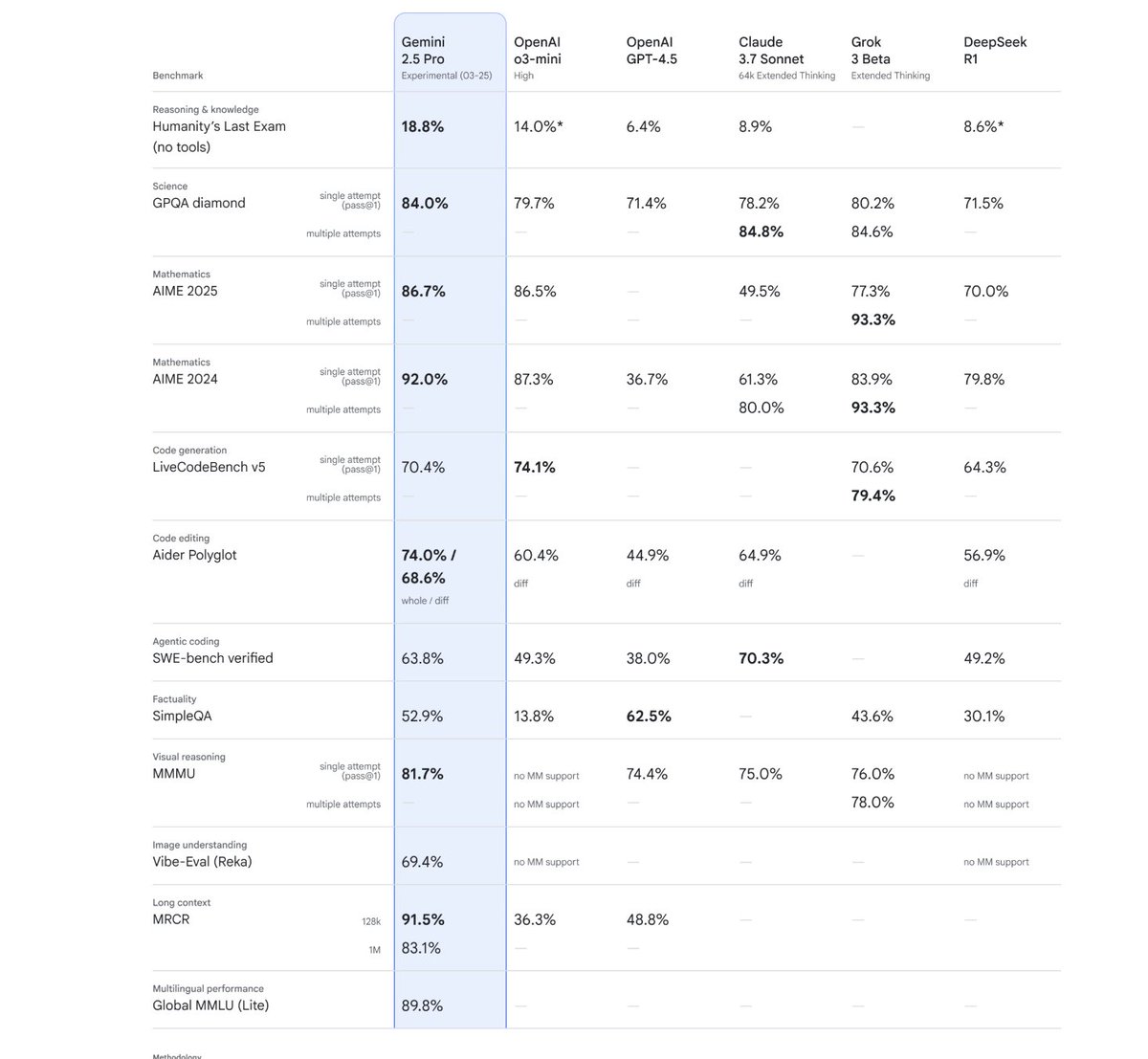

Think you know Gemini? 🤔 Think again. Meet Gemini 2.5: our most intelligent model 💡 The first release is Pro Experimental, which is state-of-the-art across many benchmarks, meaning it can handle complex problems and give more accurate responses. Try it now → goo.gle/4c2HKjf

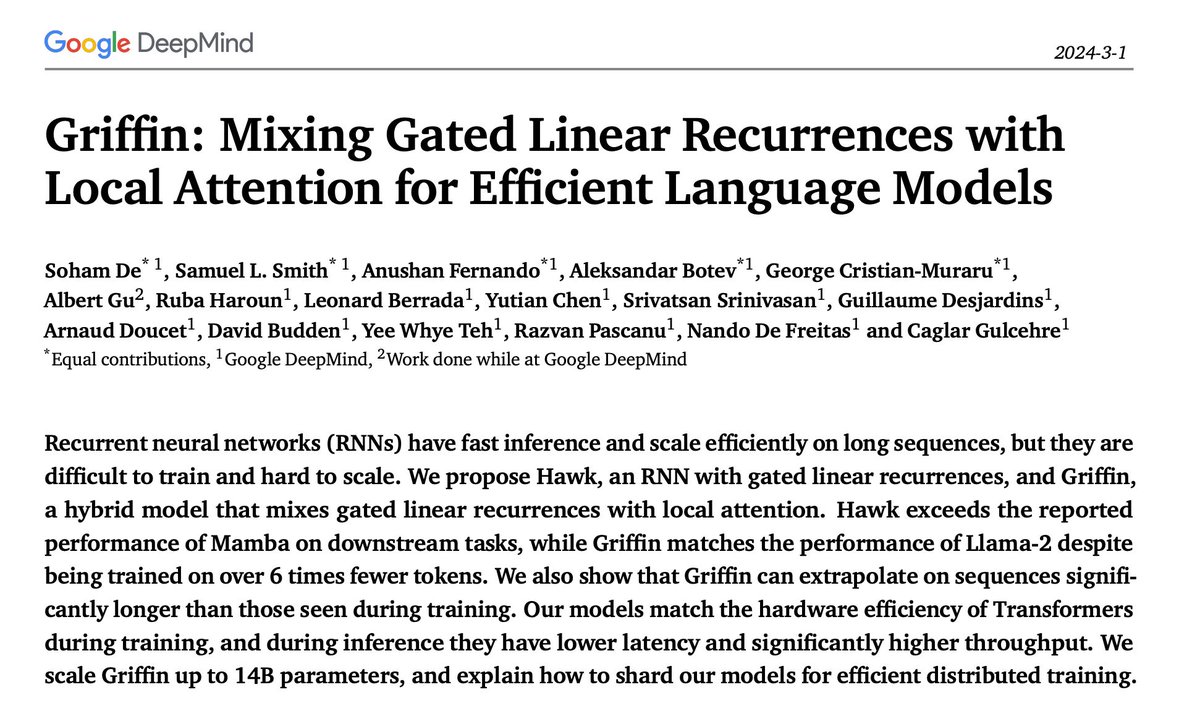

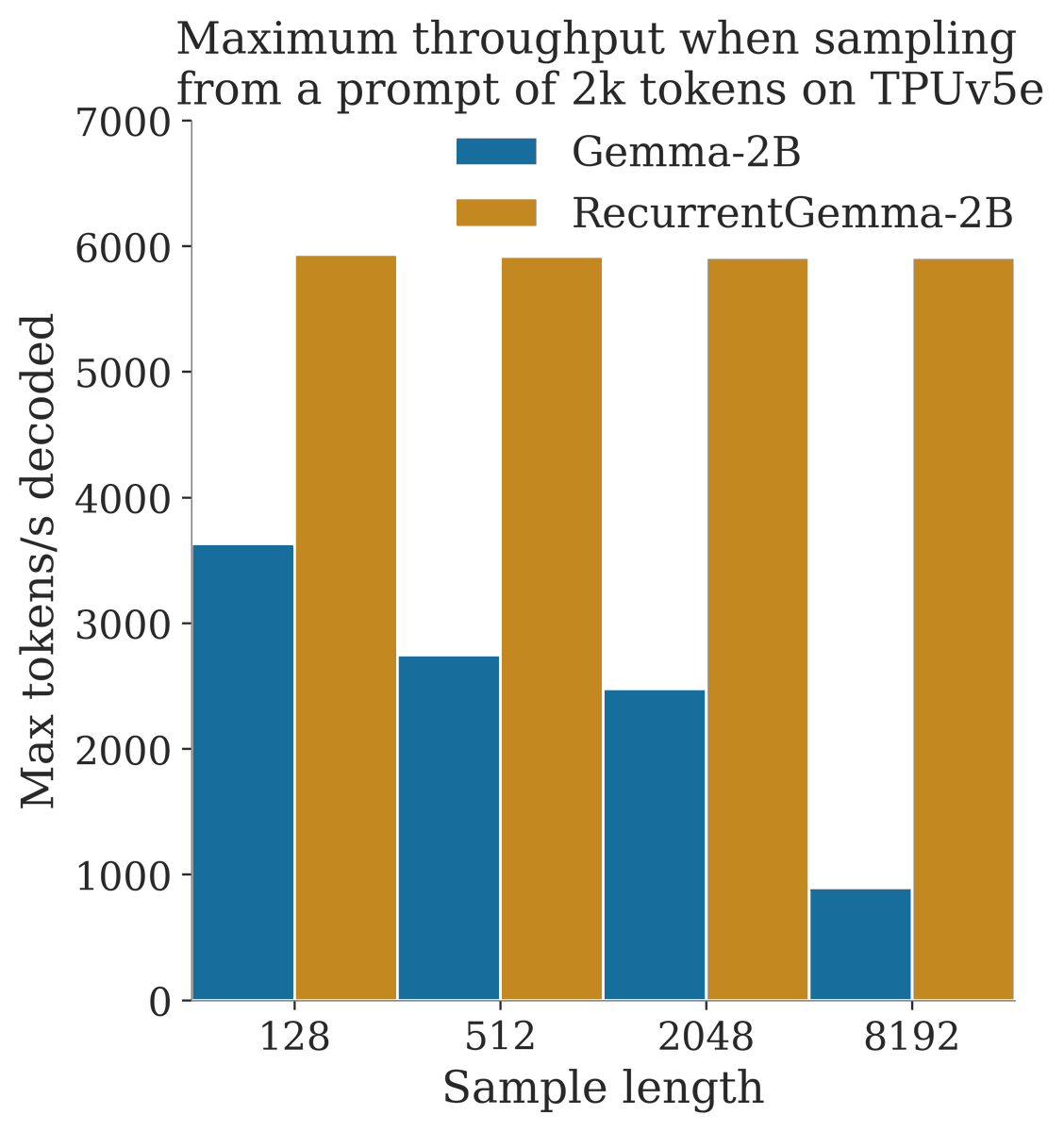

We present Griffin: a hybrid model mixing a gated linear recurrence with local attention. This combination is extremely effective: it preserves the efficiency benefits of linear RNNs and the expressiveness of transformers. Scaled up to 14B parameters! arxiv.org/abs/2402.19427
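The post doesn't spell out the layer equations. As a minimal, hypothetical illustration of the two ingredients it names, the plain-Python sketch below shows (1) a gated linear recurrence, h_t = a_t * h_{t-1} + (1 - a_t) * x_t, where a gate a_t in [0, 1] decides how much past state to keep, and (2) a causal sliding-window mask for local attention. This is not the paper's actual recurrent block, which computes its gates from the input with learned weights and scales the input differently. The function names are made up for this example.

```python
from typing import List


def gated_linear_recurrence(xs: List[float], gates: List[float]) -> List[float]:
    """Simplified single-channel scan: h_t = a_t * h_{t-1} + (1 - a_t) * x_t.

    Hypothetical sketch: gates are given directly here, whereas a real model
    would compute them from the input. Because the update is linear in h,
    it can be parallelised with a scan; this loop is the sequential version.
    """
    h = 0.0
    out = []
    for x, a in zip(xs, gates):
        h = a * h + (1.0 - a) * x  # keep a fraction a of the old state
        out.append(h)
    return out


def sliding_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """Causal local-attention mask: query i may attend to key j iff i - window < j <= i."""
    return [[0 <= i - j < window for j in range(seq_len)] for i in range(seq_len)]


if __name__ == "__main__":
    print(gated_linear_recurrence([1.0, 1.0, 1.0], [0.5, 0.5, 0.5]))  # [0.5, 0.75, 0.875]
    for row in sliding_window_mask(4, 2):
        print(row)
```

The state carries a fixed-size summary of the whole past at constant cost per step, while the window bounds attention to the most recent `window` tokens. That is one way to read the efficiency/expressiveness trade-off the post describes.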

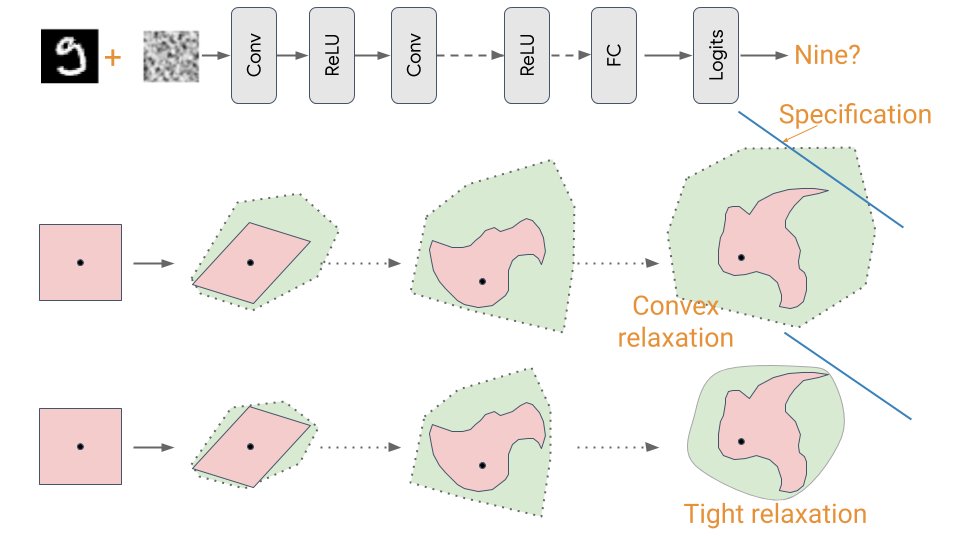

We need to rethink the belief that all privacy-preserving models are inherently more discriminatory. I give a high-level overview of why in this @mtlaiethics blog post, based on my summer internship work at @GoogleDeepMind: montrealethics.ai/unlocking-accu… 1/5