Lijun Yu

38 posts

Lijun Yu

@LijunYu0

Research Scientist @GoogleDeepMind | AI PhD @CarnegieMellon | CS/Econ @PKU1898

For years, RAW pixel space pretraining has been sidelined: too compute-expensive. Our new @GoogleDeepMind paper 📜 dives into the scaling trends of raw pixel models to answer the question “how far are we from scaling up next-pixel prediction?” arxiv.org/pdf/2511.08704 Forecast: Raw next-pixel modeling will reach competitive ImageNet classification (>80% top1 accuracy) and generation metrics (90 Fr’echet Distance) in five years! Threads 👇

We just dropped Nano Banana Pro, built on Gemini 3. 🍌 With state-of-the-art text rendering, vast world knowledge and studio-quality creative controls, Gemini 3 Pro Image can create and edit more complex visuals, infographics and more. Here’s what’s under the hood. 🧵

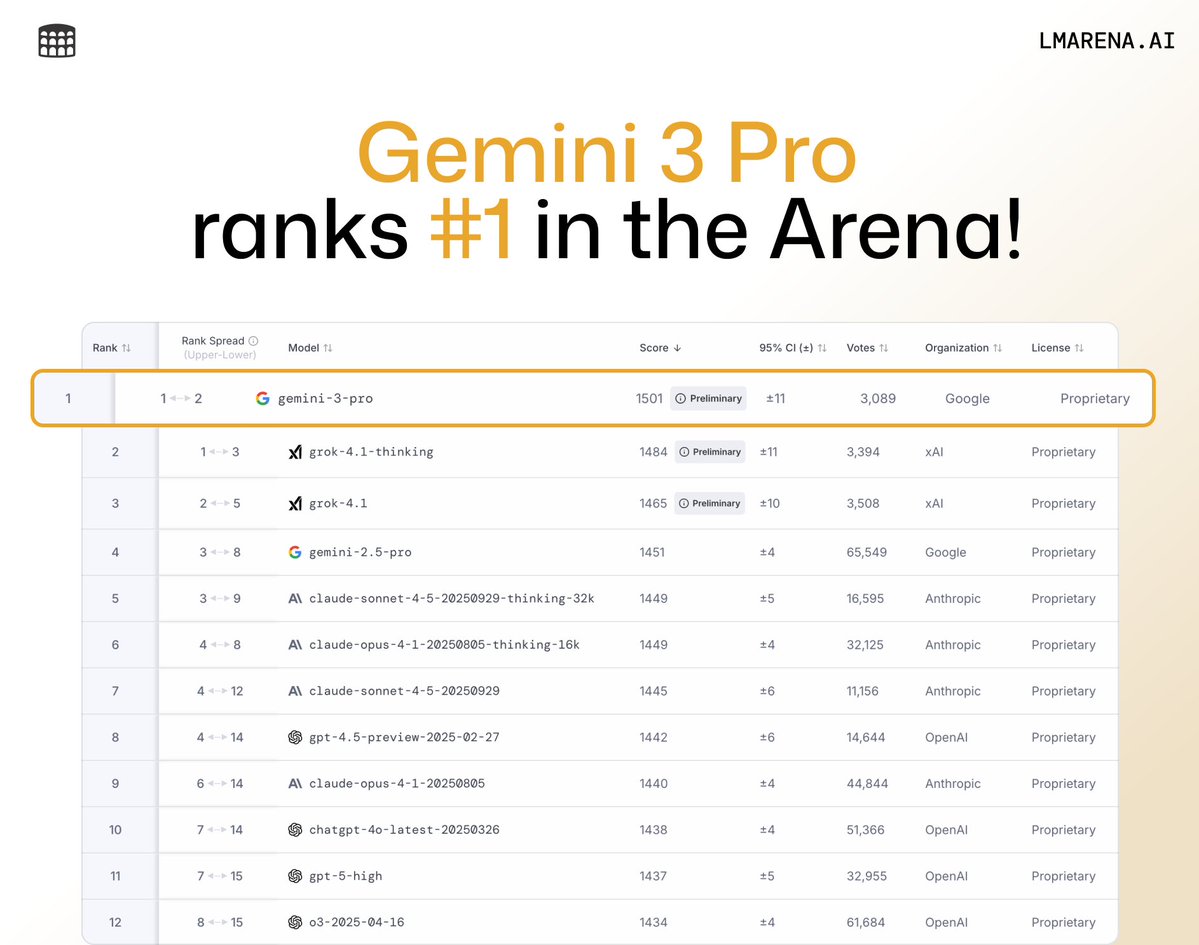

Introducing Gemini 3 ✨ It’s the best model in the world for multimodal understanding, and our most powerful agentic + vibe coding model yet. Gemini 3 can bring any idea to life, quickly grasping context and intent so you can get what you need with less prompting. Find Gemini 3 Pro rolling out today in the @Geminiapp and AI Mode in Search. For developers, build with it now in @GoogleAIStudio and Vertex AI. Excited for you to try it!

This is Gemini 3: our most intelligent model that helps you learn, build and plan anything. It comes with state-of-the-art reasoning capabilities, world-leading multimodal understanding, and enables new agentic coding experiences. 🧵

🚨🎬 Big news from Video Arena! @GoogleDeepMind’s latest Veo 3.1 now ranks #1 in both Text-to-Video and Image-to-Video leaderboards. 🏆 This is a +30-point leap from Veo 3.0 → 3.1, making it the first model to break 1400 in Video Arena history! Huge congrats to the @GoogleDeepMind team for pushing the frontier of video generation forward! More details in the thread 🧵

🚨🎬 Big news from Video Arena! @GoogleDeepMind’s latest Veo 3.1 now ranks #1 in both Text-to-Video and Image-to-Video leaderboards. 🏆 This is a +30-point leap from Veo 3.0 → 3.1, making it the first model to break 1400 in Video Arena history! Huge congrats to the @GoogleDeepMind team for pushing the frontier of video generation forward! More details in the thread 🧵

Veo is getting a major upgrade. 🚀 We’re rolling out Veo 3.1, our updated video generation model, alongside improved creative controls for filmmakers, storytellers, and developers - many of them with audio. 🧵

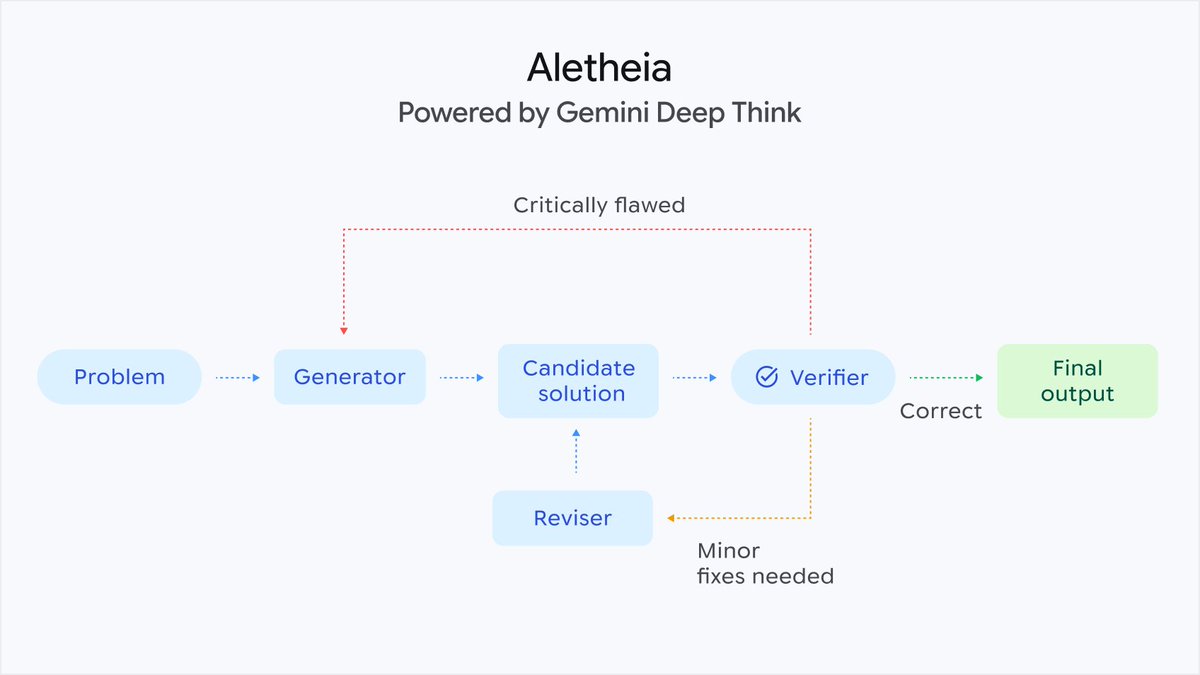

(1/3) Thrilled to announce a new Gemini breakthrough! Building on our success at IMO this year, an advanced version of Gemini Deep Think achieved gold-medal level performance at the ICPC 2025 World Finals - one of the world’s leading competitive programming competitions. deepmind.google/discover/blog/…

Following its IMO gold-level win, @GoogleDeepMind is sharing Gemini Deep Think with mathematicians for feedback. Excited to see what they discover! 🧠 Plus, an updated Gemini 2.5 Deep Think is now rolling out for Google AI Ultra subscribers. Learn more: bit.ly/3IWcWq0

Excited to present our #CVPR2025 oral paper #TexTok @CVPR! #TexTok is an image tokenizer that uses text during tokenization, achieving both high recon/gen quality and low cost. 🎙️Oral: Sat, June 14, 1:15-1:30PM CDT (Session 4A) 📌Poster: Sat, June 14, 5-7PM CDT (ExHall D #252)