Katharina Limbeck

@LimbeckKat

PhD Student at Helmholtz Munich

Coming up soon at #NeurIPS2024, combining #geometry and #topology: “Metric Space Magnitude for Evaluating the Diversity of Latent Representations”

❓ Ever wondered how best to assess the diversity of model outputs, or how to choose between models?

👉 We address these questions by measuring the diversity of generative models, assessing LLMs, and analysing latent spaces. Embeddings let us intuitively understand similarities between e.g. generated graphs, images or sentences, and we leverage this knowledge to measure diversity automatically.

🤔 But how best to measure diversity? From a mathematical perspective, diversity measures should fulfil theoretical guarantees. However, we find that many baseline diversity measures currently used for assessing latent spaces and generative models do not fulfil these necessary requirements.

🔍 We leverage the magnitude of metric spaces to define a family of novel, multi-scale diversity measures. Magnitude is a powerful geometric descriptor that measures diversity as the effective number of distinct points or clusters across varying scales of distance. By summarising magnitude across multiple resolutions, we then define theoretically well-founded measures of the intrinsic diversity of a space or of the difference in diversity between spaces.

🚀 Experiments validate our proposed diversity measures. When evaluating LLMs, our magnitude-based measures characterise text embedding models by their diversity and best capture the ground-truth diversity of generated sentences. Further, when evaluating image and graph generative models, magnitude reliably detects mode collapse and mode dropping, outperforming alternative evaluation metrics.

🔮 This work improves the diversity evaluation of latent spaces by leveraging magnitude as a theoretically motivated measure of diversity and geometry. It paves the way for better benchmarking practices in generative model evaluation and for further exploring the expressivity of magnitude in ML applications.

Check out our paper or code to learn more!
📜 Paper: arxiv.org/abs/2311.16054
💻 Code: github.com/aidos-lab/magn…
📹 Video: youtube.com/watch?v=sZBP52…
👏 Joint work w/ @LimbeckKat, @randreeva1 and @RikSarkarNet.
📢 Poster: #NeurIPS2024, Vancouver, Friday 13th December, 16:30

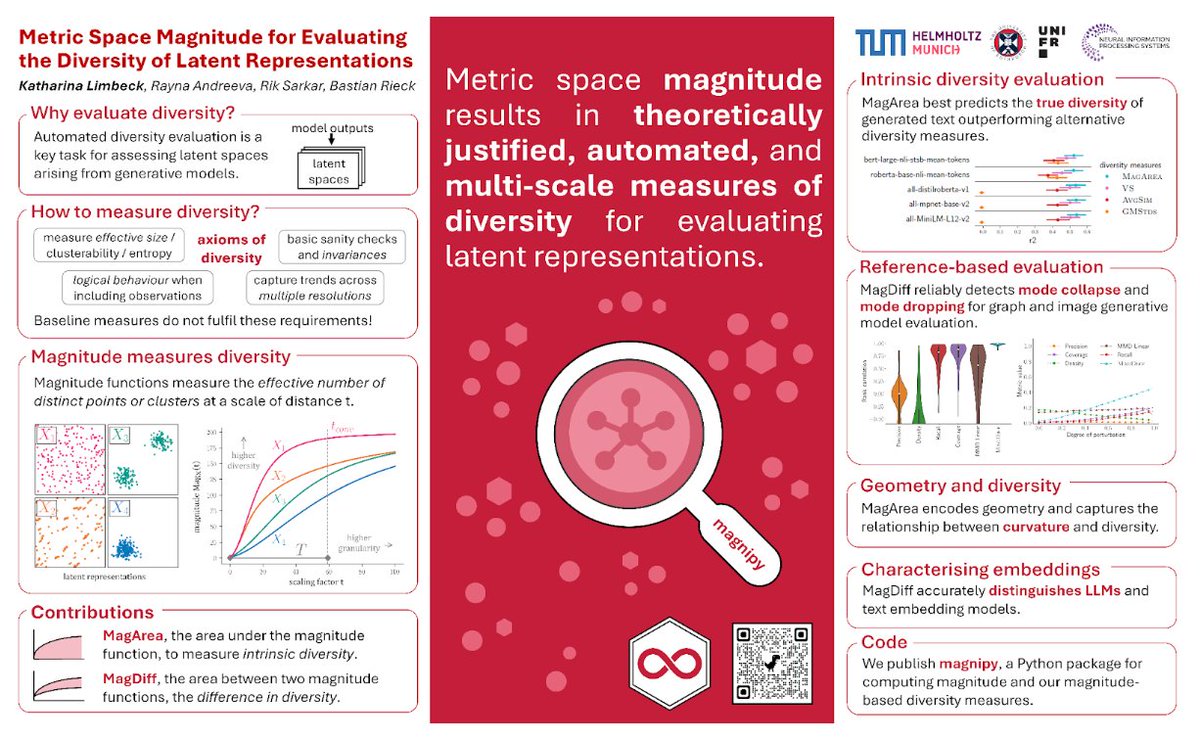
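To make "effective number of distinct points across scales" concrete, here is a minimal sketch using the standard definition of the magnitude of a finite metric space: build the similarity matrix exp(-t·d(x, y)), solve for the weight vector, and sum the weights. This is not the code from the linked repository; the function names `magnitude` and `magnitude_function`, the Euclidean distance, and the toy data are all assumptions made for illustration, and the paper's summary measures are not reproduced here.

```python
import numpy as np


def magnitude(points, t=1.0):
    """Magnitude of a finite point set at scale t (illustrative helper).

    Assumes Euclidean distances and pairwise distinct points, so that the
    similarity matrix is invertible.
    """
    points = np.asarray(points, dtype=float)
    # Pairwise Euclidean distances d(x_i, x_j).
    diffs = points[:, None, :] - points[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    # Similarity matrix Z_ij = exp(-t * d_ij).
    similarity = np.exp(-t * dists)
    # Weight vector w solves Z w = 1; the magnitude is the sum of the weights.
    weights = np.linalg.solve(similarity, np.ones(len(points)))
    return float(weights.sum())


def magnitude_function(points, scales):
    """Evaluate the magnitude at each scale in `scales` (illustrative helper)."""
    return np.array([magnitude(points, t) for t in scales])


# Toy data (hypothetical): two tight clusters of two points each.
clusters = np.array([[0.0, 0.0], [0.01, 0.0], [10.0, 0.0], [10.01, 0.0]])
print(magnitude_function(clusters, [1e-3, 1.0, 1e3]))
```

On this toy set the magnitude is close to 1 at the smallest scale (everything looks like one point), close to 2 at the middle scale (two clusters), and close to 4 at the largest scale (four separate points), which is the multi-scale behaviour the post describes.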

🚨So excited to share our first paper in collaboration with @Pseudomanifold, @LimbeckKat and @RikSarkarNet, in which we connect the "exotic" 🦄invariant magnitude with the generalisation error in neural networks! @BioMedAI_CDT @HelmholtzMunich 📜➡️arxiv.org/abs/2305.05611