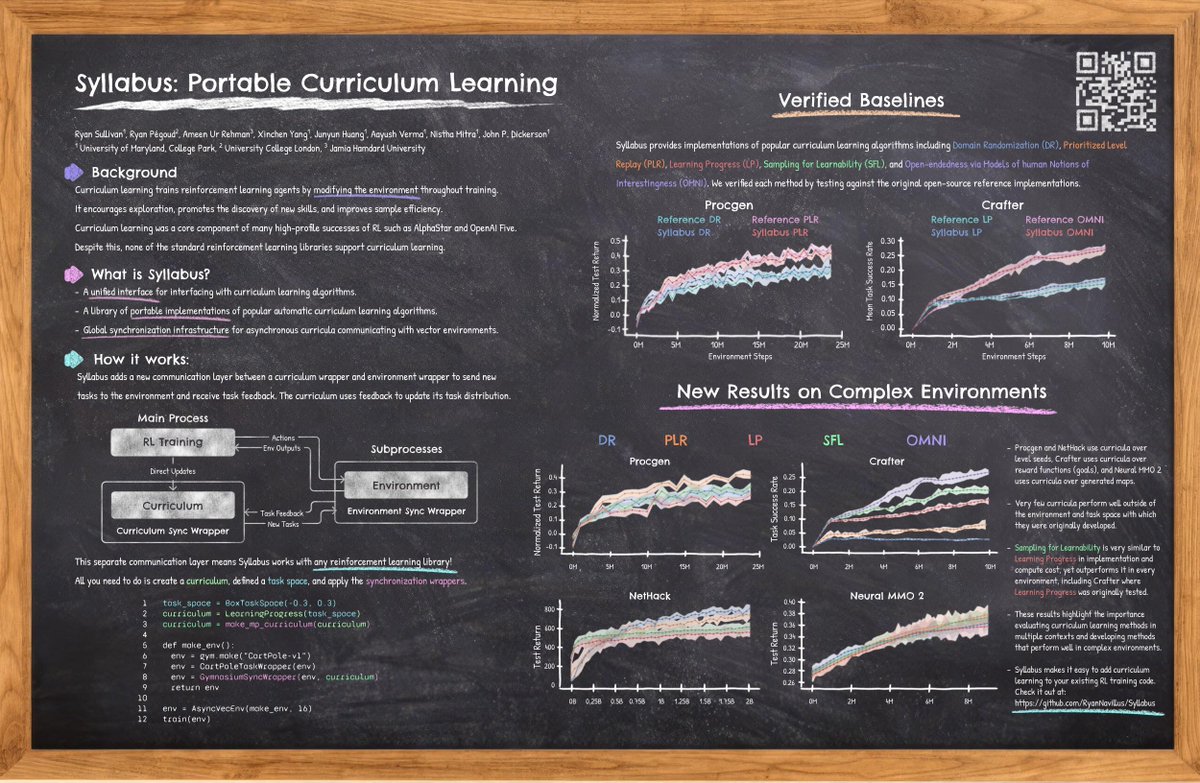

🚀 Introducing 🧭MAGELLAN, our new metacognitive framework for LLM agents! It lets an agent predict its own learning progress (LP) across vast natural-language goal spaces, enabling efficient exploration of complex domains. 🌍✨ Learn more: 🔗 arxiv.org/abs/2502.07709 #OpenEndedLearning #LLM #RL