Maissam Barkeshli

@MBarkeshli

Visiting Researcher @ Meta FAIR. Professor of Physics @ University of Maryland & Joint Quantum Institute. Previously @ Berkeley, MIT, Stanford, Microsoft Station Q

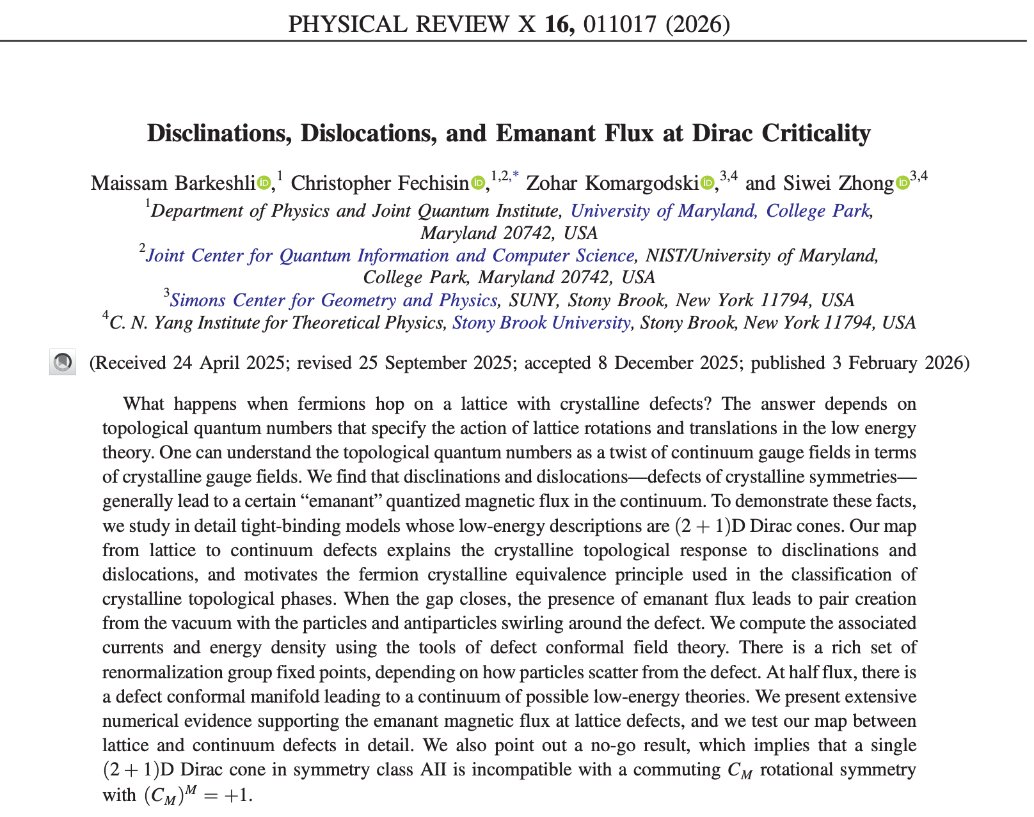

By studying how symmetries of lattice models map to symmetries of continuum theories that emerge at criticality, researchers show that the (2+1)D Dirac fermion exhibits a continuum of infrared fixed points at a particular applied magnetic flux. go.aps.org/46h8ACo

Thank you so much to everyone for this wonderful dinner! I’m truly grateful to Harvard University CMSA for this amazing experience. It makes me so happy to see the Math & AI community growing. I can’t wait to see all the incredible things these brilliant minds will create together.

AI and math. Geometry and symbolic reasoning. Amazing recent developments and a stellar lineup of speakers. It is going to be an exciting week! The Geometry of Machine Learning @ Harvard Center for Mathematical Sciences and Applications (CMSA) cmsa.fas.harvard.edu/event/mlgeomet…