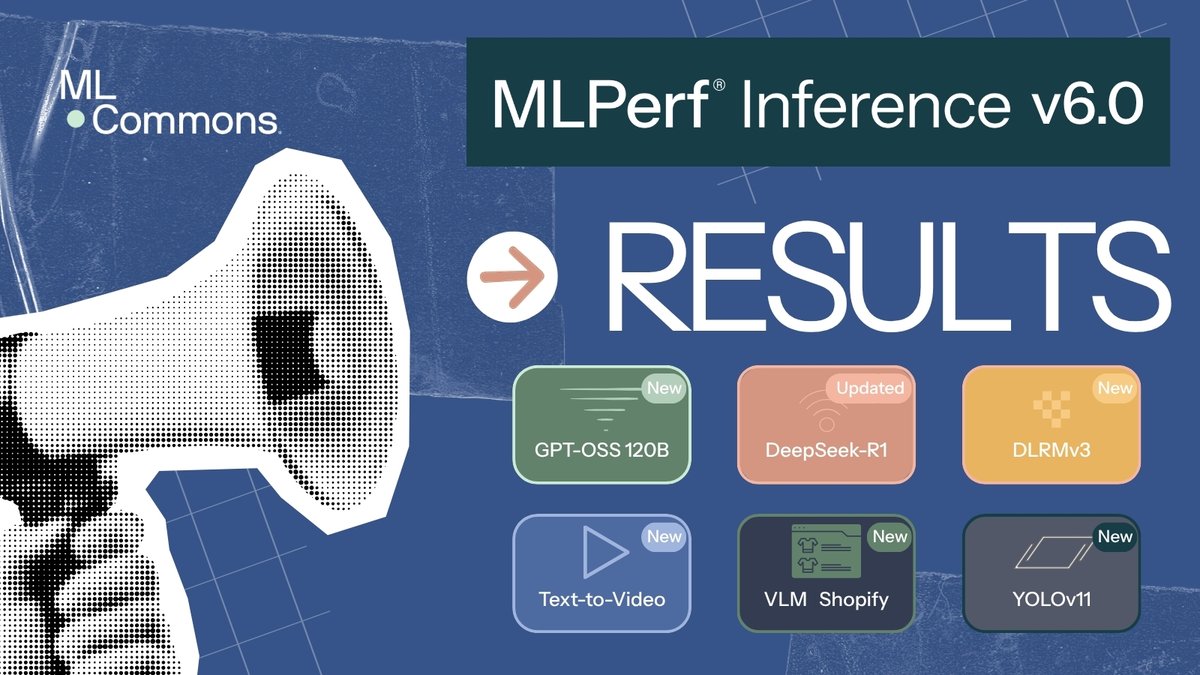

MLPerf Inference v6.0 is here - our most significant benchmark update ever.

5 new/updated benchmarks. 24 submitting organizations. Industry-first tests for text-to-video and speculative decoding.

Full results: mlcommons.org/2026/04/mlperf…

#MLPerf #MLCommons

English