Jim Manico from Manicode Security

43.6K posts

@manicode

AI and AppSec Educator. Secure coding system prompts. https://t.co/gbW3ZLhURT

Kauai, HI and Cobb, CA. Joined July 2009

6.1K Following, 17.2K Followers

Pinned Tweet

In my experience, all software developers are now security engineers, whether they know it, admit it, or do it. Your code is now the security of the org you work for. #GoldenAgeOfDefense

Wat Ket, Thailand 🇹🇭

Jim Manico from Manicode Security retweeted

Our security bug bounty program is now public on HackerOne.

We've run the program privately within the security research community, and their findings have strengthened our products. Now anyone can report vulnerabilities and get rewarded.

Read more: hackerone.com/anthropic

Jim Manico from Manicode Security retweeted

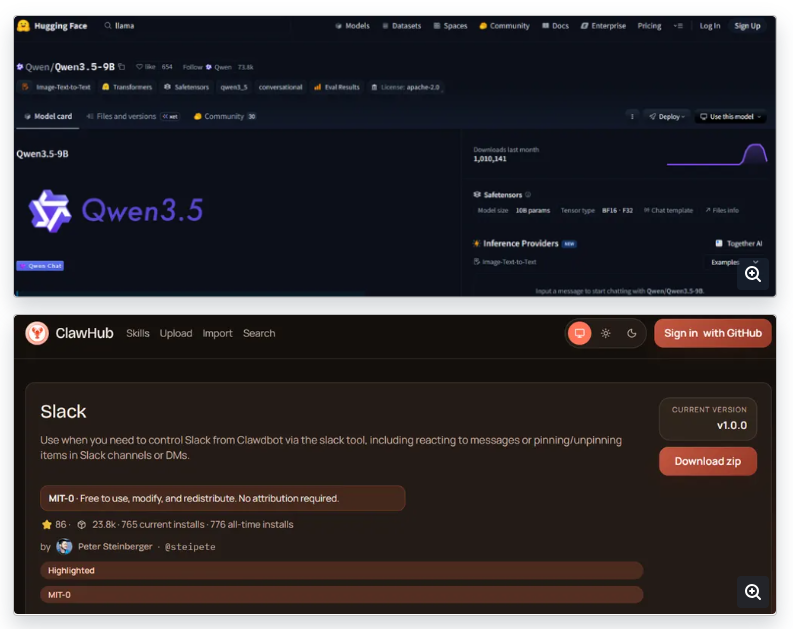

⚠️ Attackers poisoned Hugging Face & ClawHub (OpenClaw) with 575+ malicious skills from just 13 accounts.

🔸 Fake helpful AI tools that install trojans, miners & stealers (Windows + macOS)

🔸 Use hidden commands & indirect prompt injection

Quick action: Never install random AI skills or models. Always verify the source.

Read: thehackernews.com/2026/05/weekly…

Jim Manico from Manicode Security retweeted

@cybergeekgirl @LeighGi66657535 @endingwithali @tarah @Cyb3rMaddy @swagitda @FilipiPires @ITSecurityNinja @fairycakepixie @InfoSecGreyBrd @Beren_Camlost @NigelTozer @tamzinkg @robertson_moira @jessrobin96 @ChrisHanlonCA @SecEvangelism @swaroopsy @itsmeroy2012 @manicode whoop whoop - happy Friday all 🤘

Um, no? You just need a bunch of containers. Make them auto scale, and ofc you need a control plane… oh… oh no no no

LaurieWired @lauriewired

@kayleecodez hate to say it, but everyone that rejects kubernetes inevitably ends up recreating it from first principles lol

Jim Manico from Manicode Security retweeted

The security industry is entering a period of compression. Model cybersecurity capabilities are rapidly increasing, and it's critical we arm defenders with the tools they need to protect what matters most.

We're launching two models today:

GPT-5.5 with TAC (Trusted Access for Cyber)

GPT-5.5-Cyber (Limited Preview)

GPT-5.5 is our starting point for most defensive workflows. It's exceedingly good at cybersecurity workflows and tasks like secure code review, vulnerability triage, detection engineering, malware analysis, and patch validation. We think this model is the right starting place for most organizations.

GPT-5.5-Cyber is exceptional for authorized workflows, including red teaming, penetration testing, and controlled validation. It's in research preview for specific organizations and requires enhanced verification and account-level controls.

We expect to continue to accelerate defenders with various models, including both our flagship models through Trusted Access for Cyber, and with dedicated cyber models like GPT‑5.5‑Cyber and even more cyber-capable models in the future.

openai.com/index/gpt-5-5-…

Jim Manico from Manicode Security retweeted

Cloudflare Developers @CloudflareDev

Multiple security vulnerabilities affecting React Server Components and Next.js have been disclosed. We strongly recommend updating your applications immediately. Cloudflare WAF managed rules already mitigate the disclosed denial-of-service vulnerabilities, and we are investigating additional coverage for several other CVEs. developers.cloudflare.com/changelog/post…

Jim Manico from Manicode Security retweeted

@UK_Daniel_Card I can see you’re getting better and better at using AI for assessment. Hi-5.

Are you using a harness?

Jim Manico from Manicode Security retweeted

I realize I’m a day late, but… May the 4th be with you!

With ❤️ from @manicode, @FrankSEC42 and me (and the global @owasp community). 🙏

@liran_tal In general, I think local models are the future so I’m just spending a lot of time experimenting to see what their capability is, and what I see so far is impressive.

Once I’m up to the 128 GB of RAM level I’m certain that I can do most of my coding locally.

@liran_tal I have 32 GB of RAM on my M5 laptop and run several models for coding locally with no trouble. Just the smaller ones.

I have a Mac mini with 24 GB of RAM running Mistral Small for other work.

But yeah, I’m maxing out RAM for all future purchases.

@liran_tal I have a machine with 256 GB of RAM on order. Local AI is very RAM-hungry indeed. :)

@liran_tal And the real point I’m making is that using local models with Claude Code is no longer sketchy. It’s now official.

Compared to llama.cpp? That’s not a clean comparison. Ollama uses llama.cpp as one of its inference backends, so performance is similar when configured the same.

The difference is that Ollama adds a management and API layer, which can introduce some overhead and reduce low-level control (batching, KV cache tuning, GPU offload, etc.).

In exchange, you get significantly better usability: model packaging, versioning, and a clean local API.

Claude Code and local models (officially) here I come! 🕺
