Matteo Gallici

@MatteoGallici

PhD Student UPC Barcelona - Reinforcement Learning

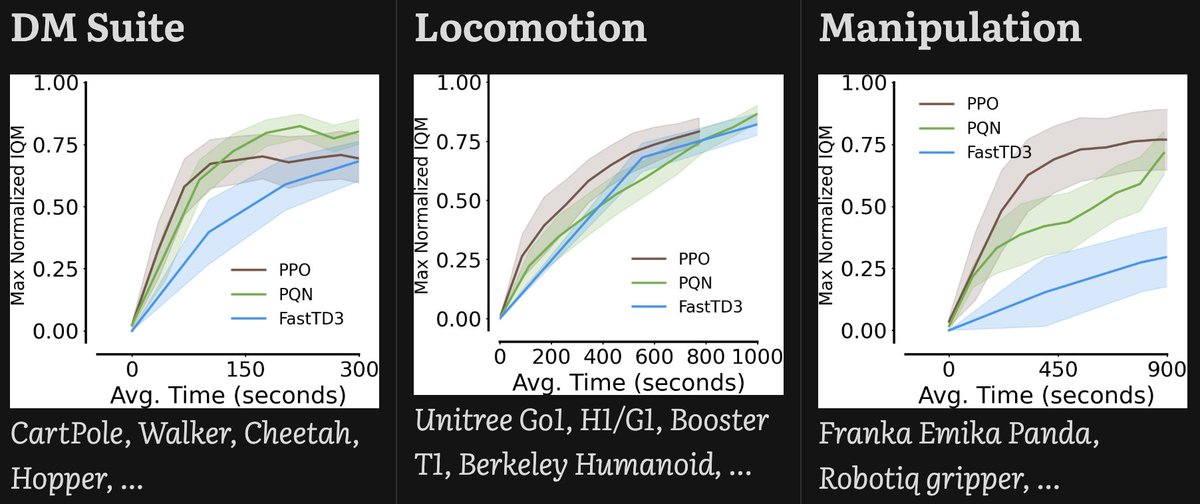

🚀 We're very excited to introduce Parallelised Q-Network (PQN), the result of an effort to bring Q-Learning into the world of pure-GPU training based on JAX! What’s the issue? Pure-GPU training can accelerate RL by orders of magnitude. However, Q-Learning relies heavily on replay buffers and target networks, which make training computationally slow and memory-intensive on GPUs. As a result, researchers often prefer PPO, leaving Q-Learning behind 🥲 Our solution? Eliminate Q-Learning's legacy components. PQN challenges the standard DQN paradigm by training a Q-Network without replay buffers or target networks: just online Q-Learning with vectorised exploration and network normalisation (layer or batch norm). Despite its simplicity, PQN sets a new strong baseline in many single-agent and multi-agent scenarios. Check out the thread for more details 🔥 📄 Paper: arxiv.org/abs/2407.04811 ⚙️ Code: github.com/mttga/purejaxq… A fantastic collaboration with @mattiefoxcs and @benjamin_ellis3, with the support of the amazing people at @FLAIR_Ox directed by @j_foerst. Inspired by the groundbreaking work of @_chris_lu_ on compiling entire RL pipelines on the GPU: github.com/luchris429/pur…
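
To make "online Q-Learning with vectorised exploration and network normalisation, no replay buffer or target network" concrete, here is a minimal JAX sketch under stated assumptions. It is not the PQN implementation: the sizes, the tiny two-layer network, the parameter-free layer norm, the one-step TD target and the plain SGD step are all hypothetical simplifications for illustration, and the released code may differ (for example in its return estimator and optimiser). The key ideas shown are that epsilon-greedy actions are sampled for a whole batch of parallel environments at once, and that the TD target is bootstrapped from the same online parameters with `jax.lax.stop_gradient` instead of from a separate target network.

```python
import jax
import jax.numpy as jnp

# Hypothetical sizes and hyperparameters, for illustration only.
OBS_DIM, HIDDEN, N_ACTIONS, N_ENVS = 4, 32, 2, 8
GAMMA, EPSILON, LR = 0.99, 0.1, 1e-3


def init_params(key):
    k1, k2 = jax.random.split(key)
    return {
        "w1": jax.random.normal(k1, (OBS_DIM, HIDDEN)) * 0.1,
        "b1": jnp.zeros(HIDDEN),
        "w2": jax.random.normal(k2, (HIDDEN, N_ACTIONS)) * 0.1,
        "b2": jnp.zeros(N_ACTIONS),
    }


def layer_norm(x, eps=1e-5):
    # Normalise each sample's hidden features (no learned scale/shift, for brevity).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / jnp.sqrt(var + eps)


def q_values(params, obs):
    # obs: (batch, OBS_DIM) -> Q-values: (batch, N_ACTIONS)
    h = obs @ params["w1"] + params["b1"]
    h = jax.nn.relu(layer_norm(h))
    return h @ params["w2"] + params["b2"]


def act(params, obs, key):
    # Vectorised epsilon-greedy: one action per parallel environment.
    k_explore, k_rand = jax.random.split(key)
    greedy = jnp.argmax(q_values(params, obs), axis=-1)
    random_a = jax.random.randint(k_rand, (obs.shape[0],), 0, N_ACTIONS)
    explore = jax.random.uniform(k_explore, (obs.shape[0],)) < EPSILON
    return jnp.where(explore, random_a, greedy)


def td_loss(params, obs, action, reward, next_obs, done):
    q = q_values(params, obs)
    q_taken = jnp.take_along_axis(q, action[:, None], axis=-1).squeeze(-1)
    # No target network: bootstrap from the same online params, gradient stopped.
    next_q = jax.lax.stop_gradient(q_values(params, next_obs)).max(axis=-1)
    target = reward + GAMMA * (1.0 - done) * next_q
    return 0.5 * jnp.mean((q_taken - target) ** 2)


@jax.jit
def update(params, obs, action, reward, next_obs, done):
    # No replay buffer: the freshly collected batch is used once, then discarded.
    loss, grads = jax.value_and_grad(td_loss)(params, obs, action, reward, next_obs, done)
    params = jax.tree_util.tree_map(lambda p, g: p - LR * g, params, grads)
    return params, loss
```

In a training loop, one would call `act` on the current observations of all `N_ENVS` environments, step them, and pass the resulting batch straight to `update`. Because there is no buffer and no second copy of the parameters, memory use stays proportional to the batch of parallel environments, which is the property the post highlights for pure-GPU training.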

Introducing CrossQ, just published at #ICLR2024! 🎉 CrossQ achieves: 🔥 Very fast off-policy Deep RL 📈 with SOTA sample-efficiency in <5% of the gradient steps 🧹 without target nets or Q ensembles 🧑🏻‍💻 Project and Code: adityab.github.io/CrossQ Joint work w/ @DPalenicek 🧵👇